Sufficient Statistic Memory AMP

Paper and Code

Jan 07, 2022

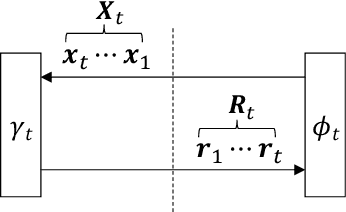

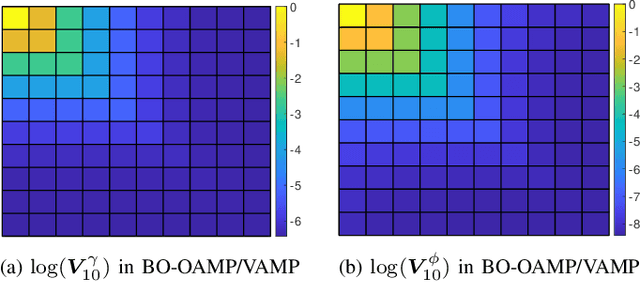

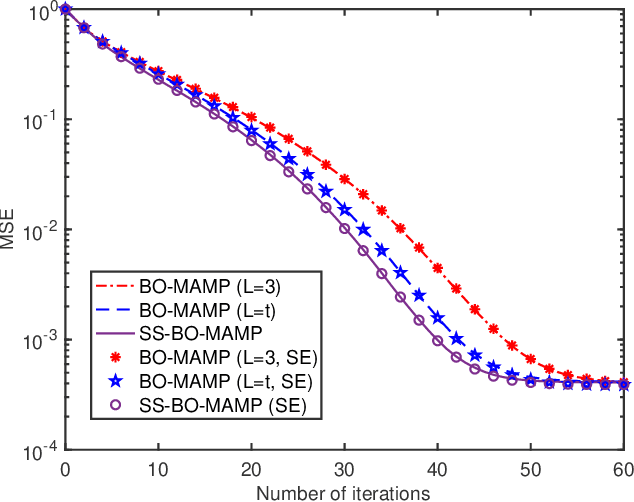

Approximate message passing (AMP) is a promising technique for reconstructing unknown signals in certain high-dimensional linear systems with non-Gaussian signaling. A distinguishing feature of AMP-type algorithms is that their dynamics can be rigorously described by state evolution. However, state evolution does not necessarily guarantee the convergence of iterative algorithms. To solve the convergence problem of AMP-type algorithms in principle, this paper proposes a memory AMP (MAMP) under a sufficient statistic condition, named sufficient statistic MAMP (SS-MAMP). We show that the covariance matrices of SS-MAMP are L-banded and convergent. Given an arbitrary MAMP, we can construct an SS-MAMP by damping, which not only ensures the convergence of MAMP but also preserves its orthogonality, i.e., its dynamics can still be rigorously described by state evolution. As a byproduct, we prove that the Bayes-optimal orthogonal/vector AMP (BO-OAMP/VAMP) is an SS-MAMP. This reveals two interesting properties of BO-OAMP/VAMP for large systems: 1) the covariance matrices are L-banded and convergent, and 2) damping and memory are useless (i.e., they bring no performance improvement). As an example, we construct a sufficient statistic Bayes-optimal MAMP (SS-BO-MAMP), which is Bayes optimal if its state evolution has a unique fixed point. In addition, the mean square error (MSE) of SS-BO-MAMP is no worse than that of the original BO-MAMP. Finally, simulations are provided to verify the validity and accuracy of the theoretical results.
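The claim that damping is useless once the covariance matrices are L-banded can be illustrated numerically. The sketch below is not taken from the paper's code and rests on two assumptions that the abstract does not spell out: (a) a matrix V is called L-banded when every entry depends only on the larger index, V[i, j] = v[max(i, j)], and (b) damping means a weighted average of past estimates with weights summing to one, chosen to minimize the combined error variance ζᵀVζ. The function names `l_banded` and `optimal_damping` are hypothetical.

```python
import numpy as np

def l_banded(diag):
    """Build an L-banded matrix from its diagonal (assumed definition):
    V[i, j] = diag[max(i, j)], so each 'L'-shaped band is constant."""
    d = np.asarray(diag, dtype=float)
    idx = np.arange(d.size)
    return d[np.maximum.outer(idx, idx)]

def optimal_damping(V):
    """Weights zeta minimizing zeta^T V zeta subject to sum(zeta) = 1.
    The minimizer is V^{-1} 1 / (1^T V^{-1} 1) for invertible V."""
    ones = np.ones(V.shape[0])
    w = np.linalg.solve(V, ones)
    return w / w.sum()

# Illustrative error variances that strictly decrease across iterations
# (made-up numbers, not results from the paper).
V = l_banded([1.0, 0.5, 0.25, 0.125])

zeta = optimal_damping(V)
damped_var = zeta @ V @ zeta

print(np.round(zeta, 12))   # all weight falls on the latest iterate
print(damped_var)           # equals the latest variance, V[-1, -1]
```

Under these assumptions the last column of V is a constant vector, so V⁻¹1 has a single nonzero entry in the last position. The optimal damping therefore keeps only the most recent estimate, and combining it with earlier iterates cannot lower the variance.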
