Stochastic Subsampling With Average Pooling

Paper and Code

Sep 25, 2024

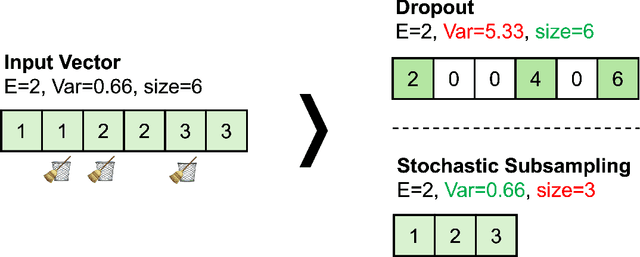

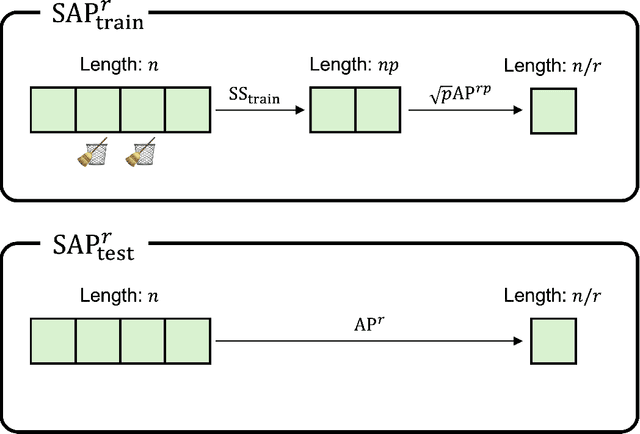

Regularization of deep neural networks has been an important issue to achieve higher generalization performance without overfitting problems. Although the popular method of Dropout provides a regularization effect, it causes inconsistent properties in the output, which may degrade the performance of deep neural networks. In this study, we propose a new module called stochastic average pooling, which incorporates Dropout-like stochasticity in pooling. We describe the properties of stochastic subsampling and average pooling and leverage them to design a module without any inconsistency problem. The stochastic average pooling achieves a regularization effect without any potential performance degradation due to the inconsistency issue and can easily be plugged into existing architectures of deep neural networks. Experiments demonstrate that replacing existing average pooling with stochastic average pooling yields consistent improvements across a variety of tasks, datasets, and models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge