Sparse Polynomial Optimization: Theory and Practice


Aug 25, 2022

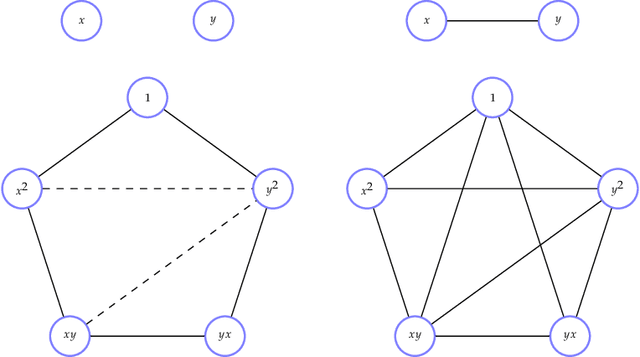

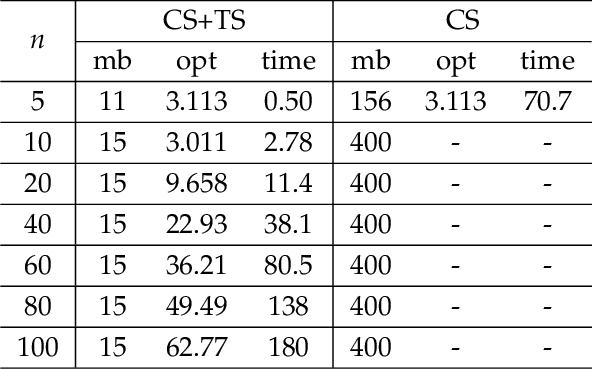

The problem of minimizing a polynomial over a set defined by polynomial inequalities is an NP-hard non-convex problem. Thanks to powerful results from real algebraic geometry, one can convert this problem into a nested sequence of finite-dimensional convex problems. At each step of the associated hierarchy, one needs to solve a fixed-size semidefinite program, which can in turn be solved with efficient numerical tools. On the practical side, however, there is no free lunch, and such optimization methods usually encounter severe scalability issues. Fortunately, for many applications, we can look the problem in the eye and exploit the inherent data structure arising from the cost and constraints describing the problem, for instance sparsity or symmetries. This book presents several research efforts to tackle this scientific challenge, which has important computational implications, and develops alternative optimization schemes that scale well in terms of computational complexity, at least for some identified classes of problems. The algorithmic framework presented in this book mainly exploits the sparsity structure of the input data to solve large-scale polynomial optimization problems. We present sparsity-exploiting hierarchies of relaxations, for either unconstrained or constrained problems. In contrast to the dense hierarchies, they provide faster approximations of the solution in practice while retaining the same theoretical convergence guarantees. Our framework is not restricted to static polynomial optimization, and we present hierarchies of approximations for values of interest arising from the analysis of dynamical systems. We also present various extensions to problems involving noncommuting variables, e.g., matrices of arbitrary size or quantum physics operators.
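To make the scalability point concrete, here is a minimal Python sketch of one common notion of sparsity in this area, correlative sparsity, in which variables are linked whenever they appear together in a monomial of the cost or in the same constraint. The abstract does not say which sparsity notions the book uses, so this is an illustrative assumption, and the function names (`correlative_sparsity_graph`, `elimination_cliques`, `moment_matrix_size`) and the toy chain problem are hypothetical. The sketch compares the side length of the dense moment matrix at relaxation order d, which is C(n+d, d) for n variables, with the side lengths obtained when each clique of variables gets its own smaller matrix.

```python
from itertools import combinations
from math import comb


def correlative_sparsity_graph(n, variable_groups):
    """Link variables i and j whenever they occur in the same group.

    Each group is the set of variable indices appearing together in one
    monomial of the cost or in one constraint polynomial.
    """
    adj = {i: set() for i in range(n)}
    for group in variable_groups:
        for i, j in combinations(sorted(set(group)), 2):
            adj[i].add(j)
            adj[j].add(i)
    return adj


def elimination_cliques(adj):
    """Maximal cliques of a chordal extension, via greedy minimum-degree elimination."""
    adj = {v: set(nbrs) for v, nbrs in adj.items()}  # work on a copy
    cliques = []
    while adj:
        # Eliminate a vertex of smallest degree (ties broken by index).
        v = min(adj, key=lambda u: (len(adj[u]), u))
        nbrs = adj.pop(v)
        clique = frozenset(nbrs | {v})
        # Fill-in: make the remaining neighbours pairwise adjacent.
        for a, b in combinations(nbrs, 2):
            adj[a].add(b)
            adj[b].add(a)
        for u in nbrs:
            adj[u].discard(v)
        # Keep the clique only if no earlier clique already contains it.
        if not any(clique <= c for c in cliques):
            cliques.append(clique)
    return [sorted(c) for c in cliques]


def moment_matrix_size(num_vars, order):
    """Number of monomials of degree <= order in num_vars variables."""
    return comb(num_vars + order, order)


# Toy chain problem (hypothetical): cost sum_i x_i * x_{i+1}, constraints 1 - x_i^2 >= 0.
n, order = 6, 2
groups = [(i, i + 1) for i in range(n - 1)] + [(i,) for i in range(n)]
cliques = elimination_cliques(correlative_sparsity_graph(n, groups))

dense_size = moment_matrix_size(n, order)
sparse_sizes = [moment_matrix_size(len(c), order) for c in cliques]
print(cliques)       # [[0, 1], [1, 2], [2, 3], [3, 4], [4, 5]]
print(dense_size)    # 28
print(sparse_sizes)  # [6, 6, 6, 6, 6]
```

For this chain, the single 28 x 28 positive semidefinite block of the dense relaxation is replaced by five 6 x 6 blocks. The sketch only counts matrix sizes; it does not build or solve the semidefinite programs themselves.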
