Some thoughts on catastrophic forgetting and how to learn an algorithm


Aug 20, 2021

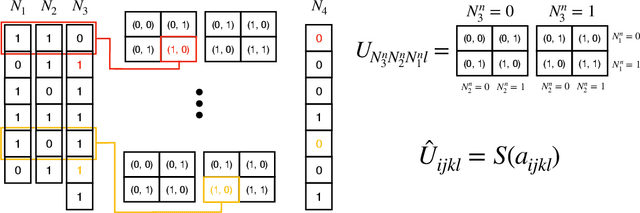

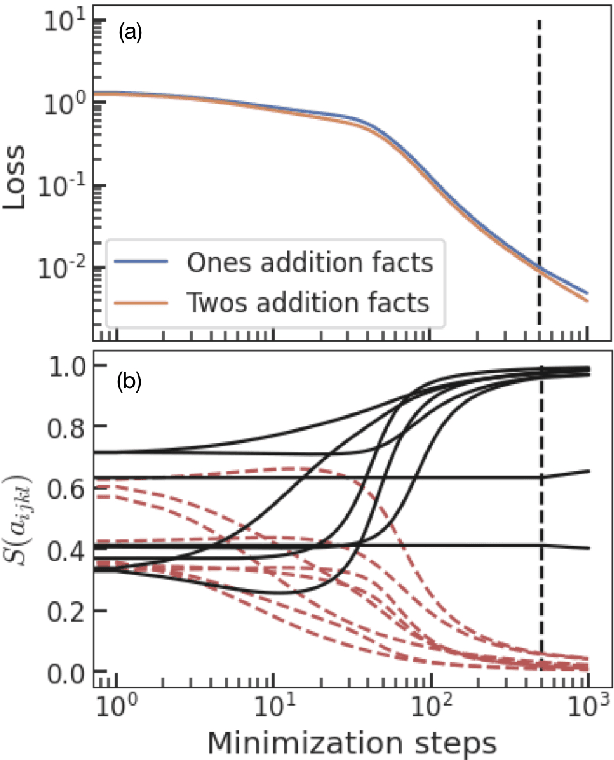

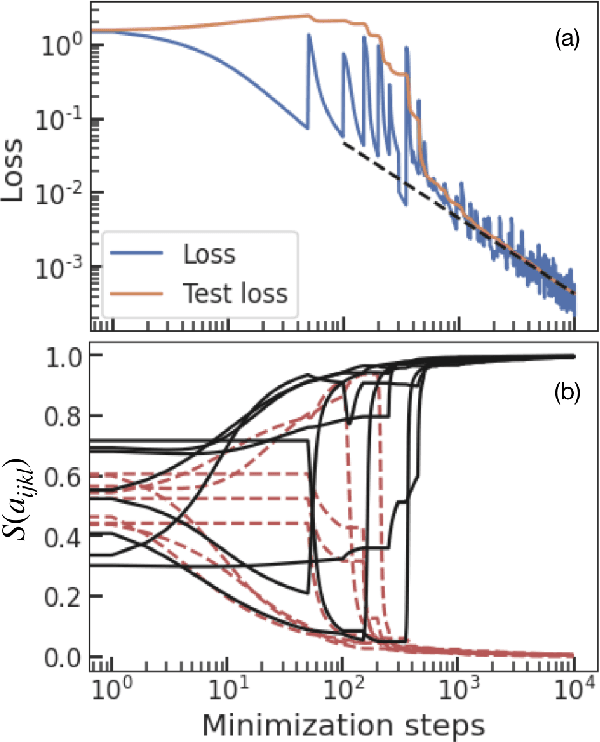

The work of McCloskey and Cohen popularized the concept of catastrophic interference. They trained a neural network to learn addition, presenting two groups of examples as two separate tasks. In their experiments, learning the second task rapidly degraded the knowledge acquired on the first. This could be a symptom of a more fundamental problem: addition is an algorithmic task and should not be learned through pattern recognition. We propose a neural network with a different architecture that can be trained to recover the correct algorithm for adding binary numbers. We test it in the setting proposed by McCloskey and Cohen and when training on random examples one at a time. The network not only avoids catastrophic forgetting but also improves its predictive power on unseen pairs of numbers as training progresses. This work highlights the role that neural network architecture plays in the emergence of catastrophic forgetting and introduces a neural network that is able to learn an algorithm.
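The abstract does not describe the network itself, so as a point of reference here is the target the network is meant to recover: ordinary binary addition with carry propagation. The Python sketch below is not the paper's model, and the function names (`full_adder`, `add_binary`, `to_bits`, `from_bits`) are illustrative assumptions. It shows that the whole procedure is one small local rule (a full adder) applied identically at every bit position. This is one way to see why a model that captures that rule can generalize to unseen pairs, while one that memorizes input-output patterns need not.

```python
from typing import List, Tuple


def full_adder(a: int, b: int, carry_in: int) -> Tuple[int, int]:
    """Local rule used at every bit position: returns (sum_bit, carry_out)."""
    total = a + b + carry_in
    return total % 2, total // 2


def add_binary(x: List[int], y: List[int]) -> List[int]:
    """Add two little-endian bit lists (least significant bit first)."""
    n = max(len(x), len(y))
    # Pad the shorter operand with zeros so both have n bits.
    x = x + [0] * (n - len(x))
    y = y + [0] * (n - len(y))
    result, carry = [], 0
    for a, b in zip(x, y):
        # The same rule is applied at each position; only the carry links them.
        s, carry = full_adder(a, b, carry)
        result.append(s)
    if carry:
        result.append(carry)  # final carry becomes the extra high-order bit
    return result


def to_bits(v: int) -> List[int]:
    """Non-negative integer -> little-endian bit list (0 -> [0])."""
    return [int(c) for c in reversed(bin(v)[2:])]


def from_bits(bits: List[int]) -> int:
    """Little-endian bit list -> integer."""
    return sum(bit << i for i, bit in enumerate(bits))
```

For example, `from_bits(add_binary(to_bits(11), to_bits(6)))` returns `17`, since `1011 + 0110 = 10001` in binary. Because the rule does not depend on bit position or operand length, it works unchanged for numbers of any size.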
