Slicing the Gaussian Mixture Wasserstein Distance

Paper and Code

Apr 11, 2025

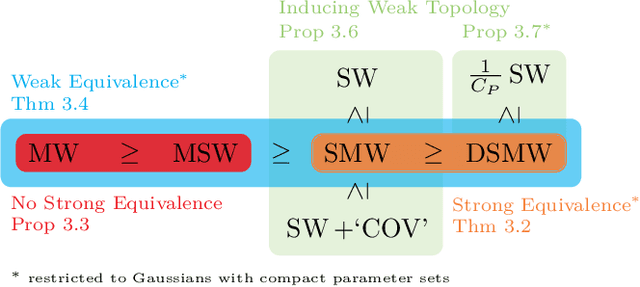

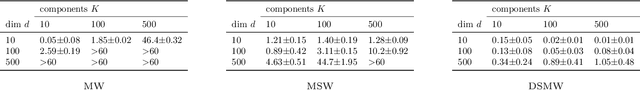

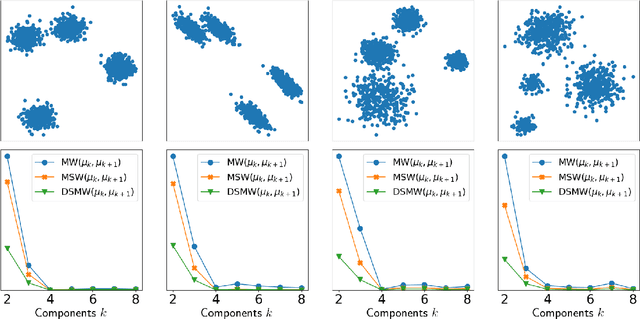

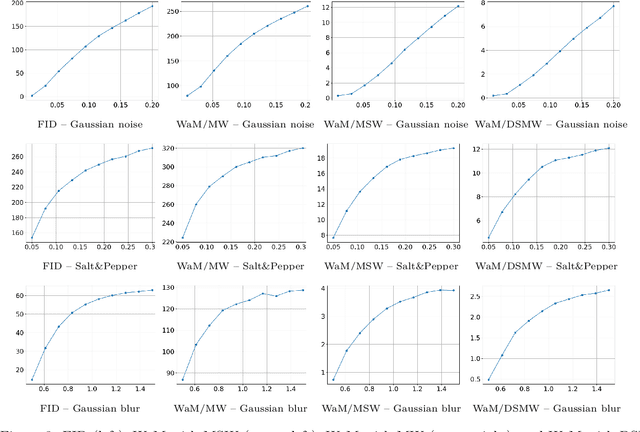

Gaussian mixture models (GMMs) are widely used in machine learning for tasks such as clustering, classification, image reconstruction, and generative modeling. A key challenge in working with GMMs is defining a computationally efficient and geometrically meaningful metric. The mixture Wasserstein (MW) distance adapts the Wasserstein metric to GMMs and has been applied in various domains, including domain adaptation, dataset comparison, and reinforcement learning. However, its high computational cost -- arising from repeated Wasserstein distance computations involving matrix square root estimations and an expensive linear program -- limits its scalability to high-dimensional and large-scale problems. To address this, we propose multiple novel slicing-based approximations to the MW distance that significantly reduce computational complexity while preserving key optimal transport properties. From a theoretical viewpoint, we establish several weak and strong equivalences between the introduced metrics, and show the relations to the original MW distance and the well-established sliced Wasserstein distance. Furthermore, we validate the effectiveness of our approach through numerical experiments, demonstrating computational efficiency and applications in clustering, perceptual image comparison, and GMM minimization

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge