Simpler is Better: off-the-shelf Continual Learning Through Pretrained Backbones


May 03, 2022

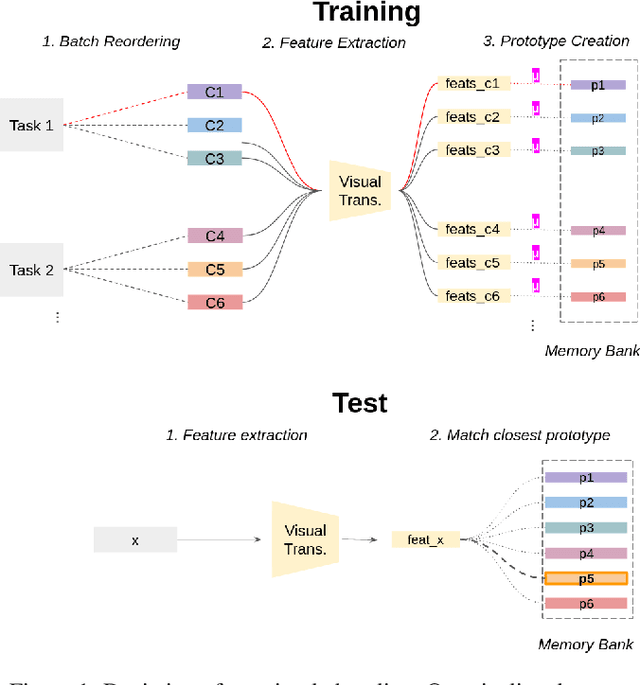

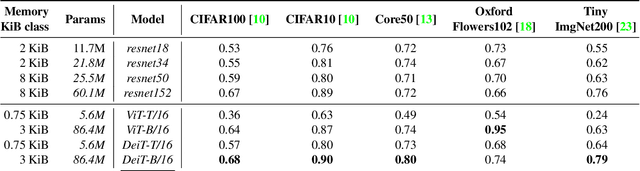

In this short paper, we propose an off-the-shelf baseline for continual learning (CL) on computer vision problems that leverages the power of pretrained models. The resulting approach is simple yet achieves strong performance on most of the common benchmarks. It is fast, since it requires no parameter updates, and it has minimal memory requirements (on the order of KBytes). In particular, the "training" phase reorders the data and exploits the power of pretrained models to compute a class prototype and fill a memory bank. At inference time, we match the closest prototype through a kNN-like approach, which yields the prediction. We show how this naive solution can act as an off-the-shelf continual learning system. To better consolidate our results, we compare the devised pipeline with common CNN models and show the superiority of Vision Transformers, suggesting that such architectures are able to produce features of higher quality. Moreover, this simple pipeline raises the same questions raised by previous work \cite{gdumb} about the actual progress made by the CL community, especially regarding the datasets considered and the use of pretrained models. Code is live at https://github.com/francesco-p/off-the-shelf-cl
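The prototype-and-memory-bank idea described above can be illustrated with a minimal sketch. It is not the authors' implementation: the class name `PrototypeMemory`, the use of a per-class mean as the prototype, and the squared Euclidean distance are all assumptions made for illustration, and the feature vectors are assumed to have already been extracted by a frozen pretrained backbone.

```python
import numpy as np


class PrototypeMemory:
    """Hypothetical memory bank: one mean feature vector (prototype) per class."""

    def __init__(self):
        self._sums = {}    # class label -> running sum of feature vectors
        self._counts = {}  # class label -> number of samples seen so far

    def update(self, features, labels):
        """Accumulate features from one task; no model parameters are updated."""
        features = np.asarray(features, dtype=np.float64)
        for feat, label in zip(features, labels):
            label = int(label)
            if label not in self._sums:
                self._sums[label] = np.zeros_like(feat)
                self._counts[label] = 0
            self._sums[label] += feat
            self._counts[label] += 1

    def prototypes(self):
        """Return the sorted class labels and the matching prototype matrix."""
        classes = sorted(self._sums)
        protos = np.stack([self._sums[c] / self._counts[c] for c in classes])
        return np.array(classes), protos

    def predict(self, features):
        """Assign each query to the class of its closest prototype."""
        classes, protos = self.prototypes()
        features = np.asarray(features, dtype=np.float64)
        # Squared Euclidean distance between every query and every prototype
        # (assumed metric; other distances would fit the same structure).
        diffs = features[:, None, :] - protos[None, :, :]
        dists = (diffs ** 2).sum(axis=-1)
        return classes[dists.argmin(axis=1)]


def demo():
    """Two tasks arrive one after the other, each introducing new classes."""
    rng = np.random.default_rng(0)
    memory = PrototypeMemory()
    # Synthetic stand-ins for backbone features: class c is centred at 5 * c.
    for task_classes in ([0, 1], [2, 3]):
        for c in task_classes:
            feats = rng.normal(loc=5.0 * c, scale=0.5, size=(20, 8))
            memory.update(feats, [c] * len(feats))
    queries = np.stack([np.full(8, 5.0 * c) for c in range(4)])
    return memory.predict(queries)
```

Because only per-class sums and counts are stored, the memory grows with the number of classes rather than the number of samples. As a rough illustration under assumed numbers, 10 classes with 768-dimensional float32 prototypes would occupy 10 × 768 × 4 = 30,720 bytes, which is consistent with the "order of KBytes" claim.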
