Simple and Interpretable Probabilistic Classifiers for Knowledge Graphs

Paper and Code

Jul 09, 2024

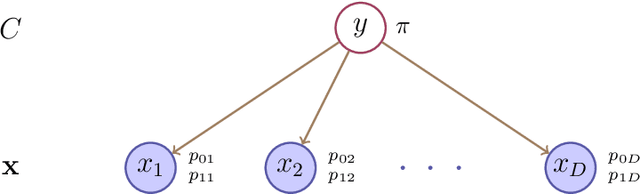

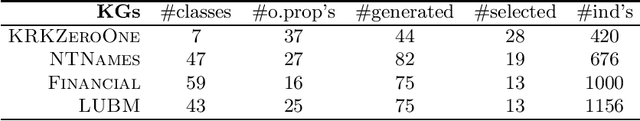

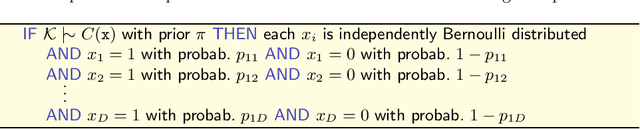

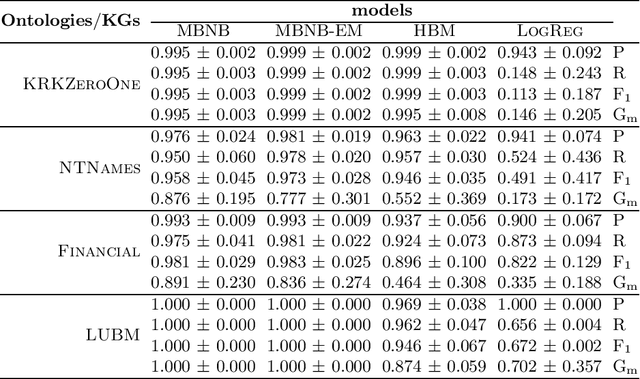

Tackling the problem of learning probabilistic classifiers from incomplete data in the context of Knowledge Graphs expressed in Description Logics, we describe an inductive approach based on learning simple belief networks. Specifically, we consider a basic probabilistic model, a Naive Bayes classifier, based on multivariate Bernoullis and its extension to a two-tier network in which this classification model is connected to a lower layer consisting of a mixture of Bernoullis. We show how such models can be converted into (probabilistic) axioms (or rules) thus ensuring more interpretability. Moreover they may be also initialized exploiting expert knowledge. We present and discuss the outcomes of an empirical evaluation which aimed at testing the effectiveness of the models on a number of random classification problems with different ontologies.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge