Shallow Bayesian Meta Learning for Real-World Few-Shot Recognition


Jan 08, 2021

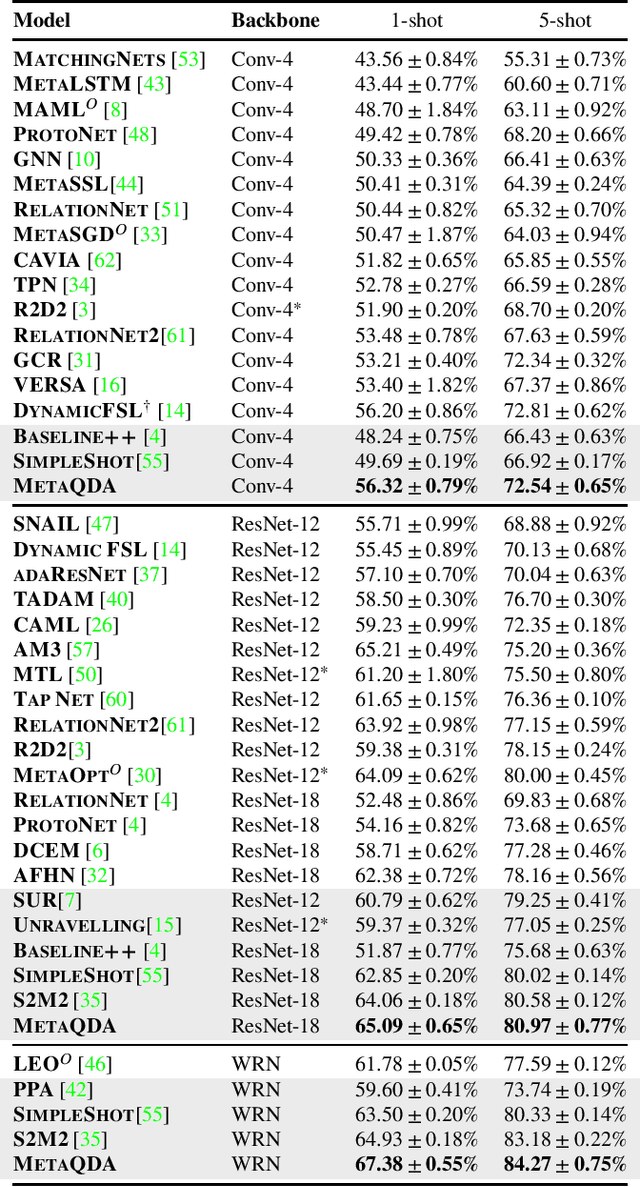

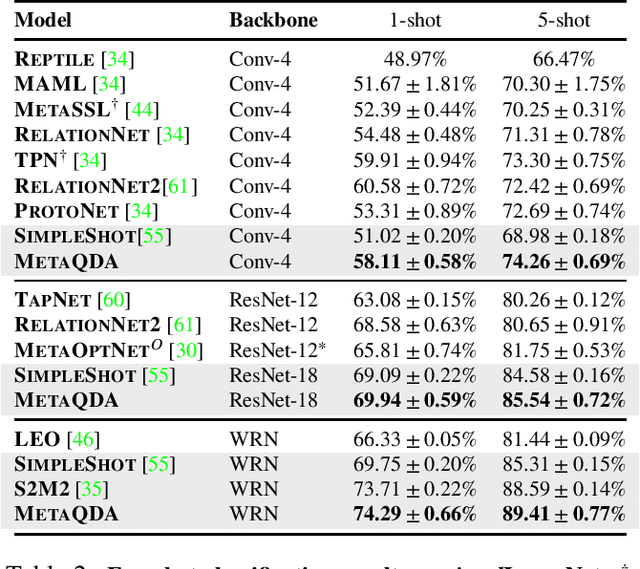

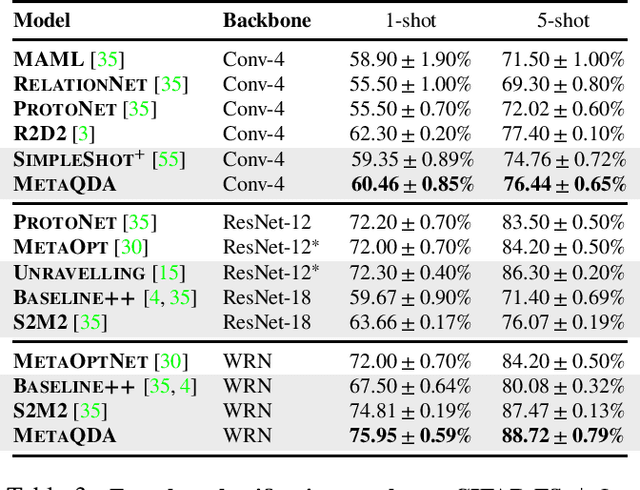

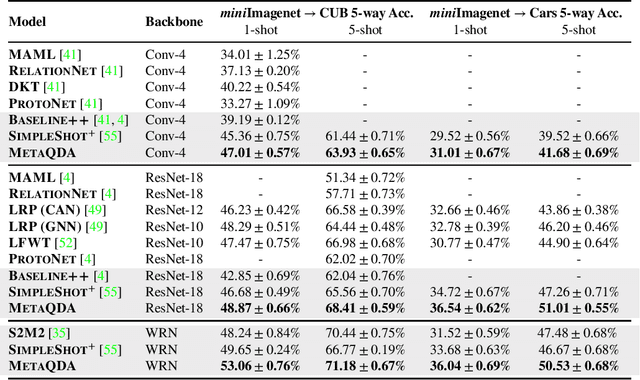

Current state-of-the-art few-shot learners focus on developing effective training procedures for feature representations, and then apply a simple classifier such as nearest centroid. In this paper we take an orthogonal approach that is agnostic to the features used, and focus exclusively on meta-learning the actual classifier layer. Specifically, we introduce MetaQDA, a Bayesian meta-learning generalisation of classic quadratic discriminant analysis. This setup has several benefits of interest to practitioners: meta-learning is fast and memory-efficient, with no need to fine-tune features; and it is agnostic to the off-the-shelf features chosen, so it will continue to benefit from advances in feature representations. Empirically, it leads to robust performance in cross-domain few-shot learning and, crucially for real-world applications, to better uncertainty calibration in predictions.
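To make the classifier-layer idea concrete, here is a minimal sketch of classic quadratic discriminant analysis applied to fixed, precomputed features. It is not MetaQDA: the abstract does not specify the Bayesian meta-learned prior, so this sketch swaps in a simple, hypothetical `shrinkage` parameter that blends each class covariance with the identity. The function names (`fit_qda`, `qda_log_scores`, `predict_proba`) and the synthetic data are assumptions made for illustration only.

```python
import numpy as np


def fit_qda(features, labels, shrinkage=0.5):
    """Fit one Gaussian (mean, covariance) per class on fixed features.

    `shrinkage` is a hypothetical regulariser that blends the sample
    covariance with the identity; it stands in for, and is NOT, the
    meta-learned prior described in the abstract.
    """
    dim = features.shape[1]
    params = {}
    for c in np.unique(labels):
        x = features[labels == c]
        mean = x.mean(axis=0)
        centered = x - mean
        # Sample covariance; guard against division by zero for 1-shot classes.
        cov = centered.T @ centered / max(len(x) - 1, 1)
        cov = (1.0 - shrinkage) * cov + shrinkage * np.eye(dim)
        params[int(c)] = (mean, cov)
    return params


def qda_log_scores(params, queries):
    """Gaussian log-likelihood per class, up to a shared constant."""
    classes = sorted(params)
    scores = []
    for c in classes:
        mean, cov = params[c]
        diff = queries - mean
        _, logdet = np.linalg.slogdet(cov)
        # Squared Mahalanobis distance of every query to the class mean.
        maha = np.einsum("ij,ij->i", diff @ np.linalg.inv(cov), diff)
        scores.append(-0.5 * (maha + logdet))
    return np.array(classes), np.stack(scores, axis=1)


def predict_proba(params, queries):
    """Class probabilities under equal class priors (softmax of log scores)."""
    classes, scores = qda_log_scores(params, queries)
    scores = scores - scores.max(axis=1, keepdims=True)  # numerical stability
    probs = np.exp(scores)
    return classes, probs / probs.sum(axis=1, keepdims=True)


if __name__ == "__main__":
    # Synthetic 5-way 5-shot episode with 8-dimensional "features".
    rng = np.random.default_rng(0)
    support_labels = np.repeat(np.arange(5), 5)
    support = rng.normal(size=(25, 8)) + support_labels[:, None]
    queries = rng.normal(size=(10, 8)) + 2.0

    params = fit_qda(support, support_labels, shrinkage=0.5)
    classes, probs = predict_proba(params, queries)
    print(classes[probs.argmax(axis=1)])
```

With `shrinkage=1.0` every class covariance becomes the identity, so the sketch reduces to the nearest centroid classifier mentioned above. Smaller values let each class keep its own covariance, which is the quadratic part of QDA and the part that is hard to estimate from only a few shots.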
