Sequence-to-Sequence Imputation of Missing Sensor Data

Paper and Code

Feb 25, 2020

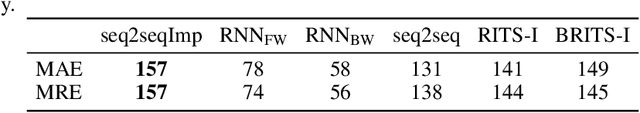

Although the sequence-to-sequence (encoder-decoder) model is considered the state-of-the-art in deep learning sequence models, there is little research into using this model for recovering missing sensor data. The key challenge is that the missing sensor data problem typically comprises three sequences (a sequence of observed samples, followed by a sequence of missing samples, followed by another sequence of observed samples) whereas, the sequence-to-sequence model only considers two sequences (an input sequence and an output sequence). We address this problem by formulating a sequence-to-sequence in a novel way. A forward RNN encodes the data observed before the missing sequence and a backward RNN encodes the data observed after the missing sequence. A decoder decodes the two encoders in a novel way to predict the missing data. We demonstrate that this model produces the lowest errors in 12% more cases than the current state-of-the-art.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge