Self-Adjusting Population Sizes for Non-Elitist Evolutionary Algorithms: Why Success Rates Matter

Paper and Code

Apr 12, 2021

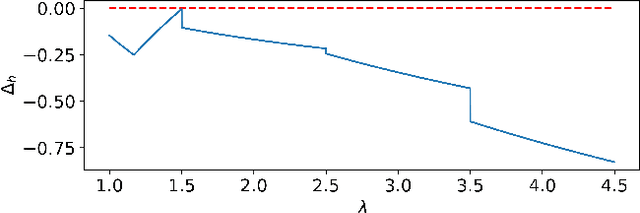

Recent theoretical studies have shown that self-adjusting mechanisms can provably outperform the best static parameters in evolutionary algorithms on discrete problems. However, most of these studies concern elitist algorithms, and there is no clear answer on whether the same mechanisms can be applied to non-elitist algorithms. We study one of the best-known parameter control mechanisms, the one-fifth success rule, to control the offspring population size $\lambda$ in the non-elitist ${(1 , \lambda)}$ EA. It is known that the ${(1 , \lambda)}$ EA has a sharp threshold with respect to the choice of $\lambda$, at which the runtime on OneMax changes from polynomial to exponential time. Hence, it is not clear whether parameter control mechanisms are able to find and maintain suitable values of $\lambda$. We show that the answer crucially depends on the success rate $s$ (i.e., a one-$(s+1)$-th success rule). We prove that, if the success rate is appropriately small, the self-adjusting ${(1 , \lambda)}$ EA optimises OneMax in $O(n)$ expected generations and $O(n \log n)$ expected evaluations. A small success rate is crucial: we also show that if the success rate is too large, the algorithm has an exponential runtime on OneMax.
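The mechanism described in the abstract can be illustrated with a minimal Python sketch. The abstract does not spell out the update rule, so everything specific here is an assumption: the update factor `F`, the multiplicative form of the rule (divide $\lambda$ by `F` after a successful generation, multiply it by `F ** (1 / s)` after an unsuccessful one), the use of standard bit mutation with rate $1/n$, rounding $\lambda$ to the nearest integer, and the function and parameter names. It is meant as an aid for experimenting with different values of $s$, not as the authors' implementation.

```python
import random


def onemax(x):
    """OneMax fitness: the number of one-bits in the bit string."""
    return sum(x)


def self_adjusting_one_comma_lambda_ea(n, s=0.5, F=1.5,
                                       max_generations=100_000, seed=None):
    """Sketch of a (1,lambda) EA with a one-(s+1)-th success rule on OneMax.

    Assumed (not taken from the abstract): the factor F, the exact update
    rule, mutation rate 1/n, and rounding lambda to the nearest integer.
    Returns (final fitness, generations used, fitness evaluations used).
    """
    rng = random.Random(seed)
    x = [rng.randint(0, 1) for _ in range(n)]
    fx = onemax(x)
    lam = 1.0            # real-valued offspring population size
    generations = 0
    evaluations = 1      # the initial search point has been evaluated

    while fx < n and generations < max_generations:
        k = max(1, round(lam))  # number of offspring in this generation
        best, best_f = None, -1
        for _ in range(k):
            # Standard bit mutation: flip each bit independently w.p. 1/n.
            y = [b ^ 1 if rng.random() < 1.0 / n else b for b in x]
            fy = onemax(y)
            if fy > best_f:
                best, best_f = y, fy
        evaluations += k
        generations += 1

        if best_f > fx:
            # Success: shrink lambda, but never below 1.
            lam = max(1.0, lam / F)
        else:
            # Failure: grow lambda; a smaller s means faster growth.
            lam = lam * F ** (1.0 / s)

        # Non-elitist (comma) selection: the best offspring always
        # replaces the parent, even if it is worse.
        x, fx = best, best_f

    return fx, generations, evaluations


if __name__ == "__main__":
    fitness, gens, evals = self_adjusting_one_comma_lambda_ea(n=50, s=0.5, seed=1)
    print(f"fitness={fitness}, generations={gens}, evaluations={evals}")
```

Under this formulation, $s$ successes and one failure leave $\lambda$ unchanged, which is why the rule is called a one-$(s+1)$-th success rule; $s = 4$ corresponds to the classic one-fifth rule. Running the sketch with a small and a large value of `s` on growing `n` is one way to observe the contrast the abstract describes, bearing in mind that small instances may not show the asymptotic behaviour.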
