SDNIA-YOLO: A Robust Object Detection Model for Extreme Weather Conditions


Jun 18, 2024

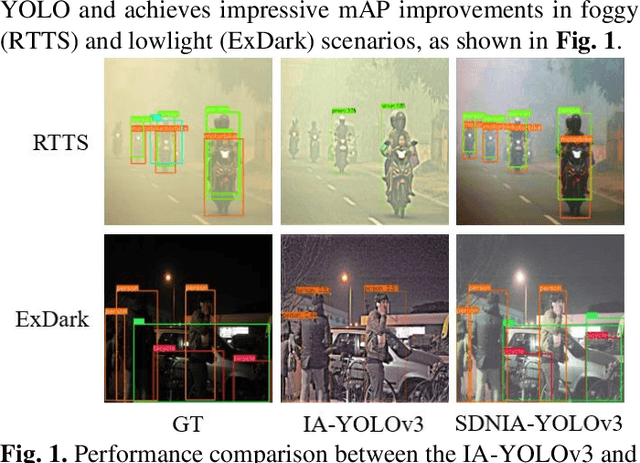

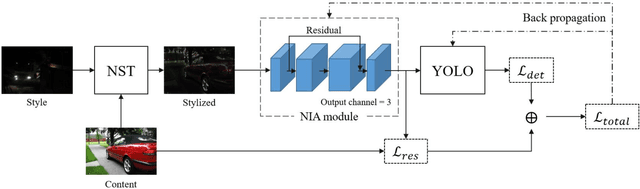

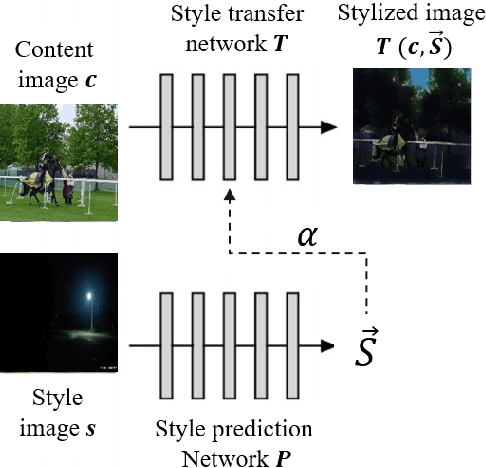

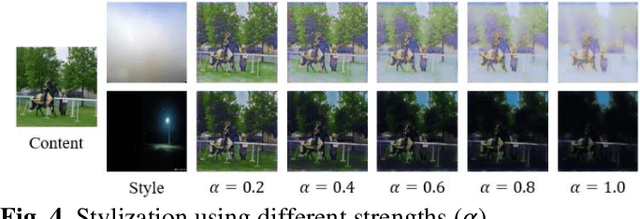

Although current deep-learning object detection models achieve excellent results on many conventional benchmark datasets, their performance declines dramatically on real-world images taken under extreme conditions. Existing methods for robust modeling either use image augmentation based on traditional image processing algorithms or apply customized, scene-limited image adaptation techniques. This study therefore proposes a stylization data-driven neural-image-adaptive YOLO (SDNIA-YOLO), which improves robustness in two ways: it enhances image quality adaptively, and it learns information related to extreme weather conditions from images synthesized by neural style transfer (NST). Experiments show that the developed SDNIA-YOLOv3 improves mAP@.5 by at least 15% over the baseline model on the real-world foggy (RTTS) and low-light (ExDark) test sets. The experiments also highlight the potential of stylization data for simulating extreme weather conditions. SDNIA-YOLO largely retains the desirable characteristics of the native YOLO: it is an end-to-end, one-stage, data-driven, and fast detector.
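For readers unfamiliar with the metric, mAP@.5 counts a predicted box as correct only when its intersection over union (IoU) with a ground-truth box of the same class is at least 0.5. The snippet below is a minimal sketch of that matching criterion, not the paper's evaluation code. The function names `iou` and `is_true_positive` and the `(x1, y1, x2, y2)` box layout are assumptions made for illustration.

```python
def iou(box_a, box_b):
    """Intersection over union of two axis-aligned boxes.

    Boxes are (x1, y1, x2, y2) tuples with x1 < x2 and y1 < y2
    (an assumed layout, chosen for this illustration).
    """
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Width and height of the overlap region, clamped at zero
    # when the boxes do not intersect.
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def is_true_positive(pred_box, gt_box, threshold=0.5):
    """True if the prediction overlaps the ground truth enough
    to count as a match at the given IoU threshold."""
    return iou(pred_box, gt_box) >= threshold
```

A full mAP@.5 computation additionally ranks detections by confidence, builds a precision-recall curve per class, and averages the resulting per-class average precision; those steps are omitted here.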
