Scribble2D5: Weakly-Supervised Volumetric Image Segmentation via Scribble Annotations


May 13, 2022

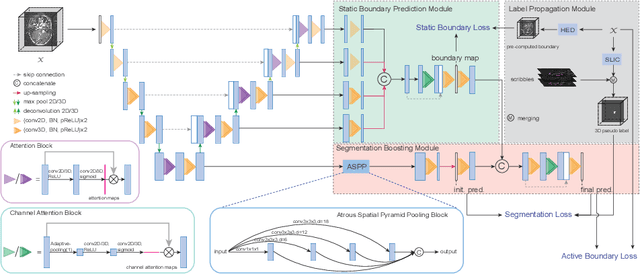

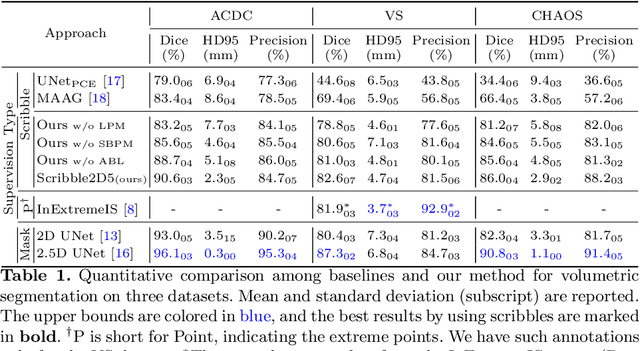

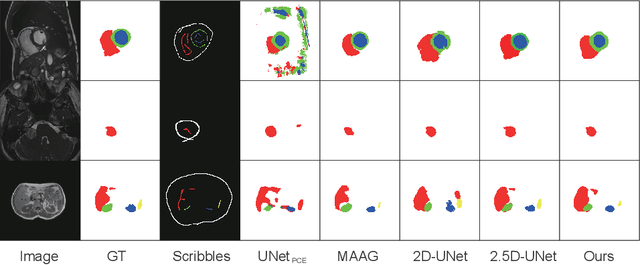

Weakly-supervised image segmentation from weak annotations such as scribbles has recently attracted considerable attention, because such annotations are much easier to obtain than time-consuming, labor-intensive labels at the pixel or voxel level. However, because scribbles carry no structural information about the region of interest (ROI), existing scribble-based methods suffer from poor boundary localization. Furthermore, most current methods are designed for 2D image segmentation and do not fully exploit volumetric information when applied directly to image slices. In this paper, we propose Scribble2D5, a scribble-based volumetric image segmentation method that handles anisotropic 3D images and improves boundary prediction. To achieve this, we augment a 2.5D attention UNet with a proposed label propagation module, which extends semantic information from the scribbles, and with a combination of static and active boundary prediction, which learns the ROI's boundary and regularizes its shape. Extensive experiments on three public datasets demonstrate that Scribble2D5 significantly outperforms current scribble-based methods and approaches the performance of fully-supervised ones. Our code is available online.
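To make the idea of extending semantic information from scribbles concrete, the following is a minimal NumPy sketch of a generic in-plane label propagation step. It is not the label propagation module proposed in the paper, whose details the abstract does not give. The function names `shift` and `propagate_scribbles`, the `UNLABELED` marker, the intensity tolerance `tol`, and the choice of 4-connected in-plane neighbors are all assumptions made for illustration. Labels grow only within a slice (axes 1 and 2), loosely reflecting the anisotropic setting in which through-plane spacing is much coarser than in-plane spacing.

```python
import numpy as np

UNLABELED = -1  # hypothetical marker for voxels that carry no scribble


def shift(arr, step, axis, fill):
    """Shift `arr` by `step` along `axis` without wrap-around, padding with `fill`."""
    out = np.full_like(arr, fill)
    src = [slice(None)] * arr.ndim
    dst = [slice(None)] * arr.ndim
    if step > 0:
        src[axis], dst[axis] = slice(None, -step), slice(step, None)
    else:
        src[axis], dst[axis] = slice(-step, None), slice(None, step)
    out[tuple(dst)] = arr[tuple(src)]
    return out


def propagate_scribbles(volume, scribbles, tol=0.1, n_iters=10):
    """Grow scribble labels to in-plane neighbors with similar intensity.

    volume:    float array of shape (slices, height, width)
    scribbles: int array of the same shape, UNLABELED where no scribble exists
    """
    labels = scribbles.copy()
    # Only in-plane moves (axes 1 and 2); axis 0 is the slice axis (assumption).
    moves = [(1, 1), (1, -1), (2, 1), (2, -1)]
    for _ in range(n_iters):
        new_labels = labels.copy()
        for axis, step in moves:
            nb_labels = shift(labels, step, axis, UNLABELED)
            nb_values = shift(volume, step, axis, 0.0)
            # A voxel adopts a neighbor's label if it is still unlabeled,
            # the neighbor is labeled, and their intensities are close.
            take = (
                (new_labels == UNLABELED)
                & (nb_labels != UNLABELED)
                & (np.abs(volume - nb_values) <= tol)
            )
            new_labels[take] = nb_labels[take]
        if np.array_equal(new_labels, labels):
            break  # nothing changed, propagation has converged
        labels = new_labels
    return labels


# Toy volume: two slices, one row, five columns.
volume = np.array([[[0.0, 0.0, 0.0, 1.0, 1.0]],
                   [[0.0, 0.0, 0.0, 0.0, 0.0]]])
scribbles = np.full(volume.shape, UNLABELED, dtype=int)
scribbles[0, 0, 0] = 0  # background scribble
scribbles[0, 0, 4] = 1  # foreground scribble
dense = propagate_scribbles(volume, scribbles)
```

In this toy case the two scribbled voxels in the first slice spread until they meet at the intensity edge, while the second slice, which has no scribbles, stays unlabeled. A learned module would replace the fixed intensity threshold with features from the network.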
