Scaling Configuration of Energy Harvesting Sensors with Reinforcement Learning

Paper and Code

Nov 27, 2018

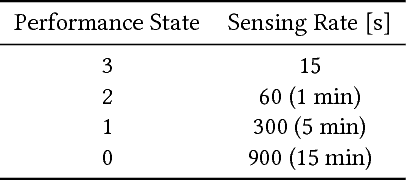

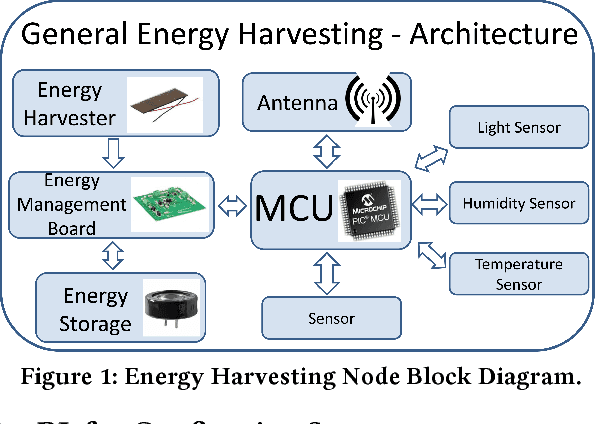

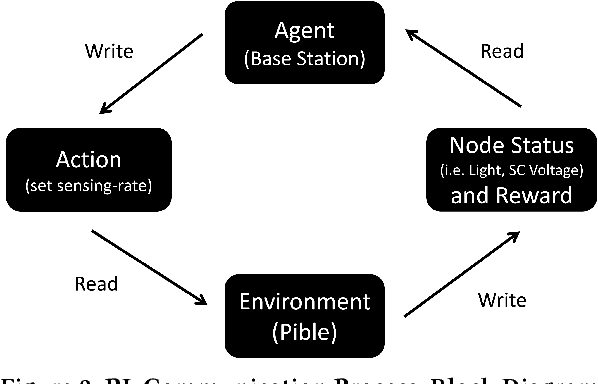

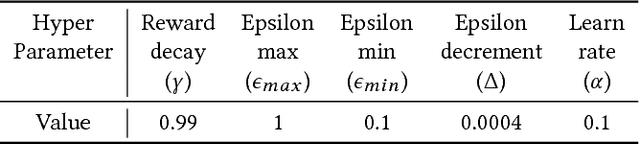

With the advent of the Internet of Things (IoT), an increasing number of energy harvesting methods are being used to supplement or supplant battery based sensors. Energy harvesting sensors need to be configured according to the application, hardware, and environmental conditions to maximize their usefulness. As of today, the configuration of sensors is either manual or heuristics based, requiring valuable domain expertise. Reinforcement learning (RL) is a promising approach to automate configuration and efficiently scale IoT deployments, but it is not yet adopted in practice. We propose solutions to bridge this gap: reduce the training phase of RL so that nodes are operational within a short time after deployment and reduce the computational requirements to scale to large deployments. We focus on configuration of the sampling rate of indoor solar panel based energy harvesting sensors. We created a simulator based on 3 months of data collected from 5 sensor nodes subject to different lighting conditions. Our simulation results show that RL can effectively learn energy availability patterns and configure the sampling rate of the sensor nodes to maximize the sensing data while ensuring that energy storage is not depleted. The nodes can be operational within the first day by using our methods. We show that it is possible to reduce the number of RL policies by using a single policy for nodes that share similar lighting conditions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge