Robustness Metrics for Real-World Adversarial Examples


Nov 24, 2019

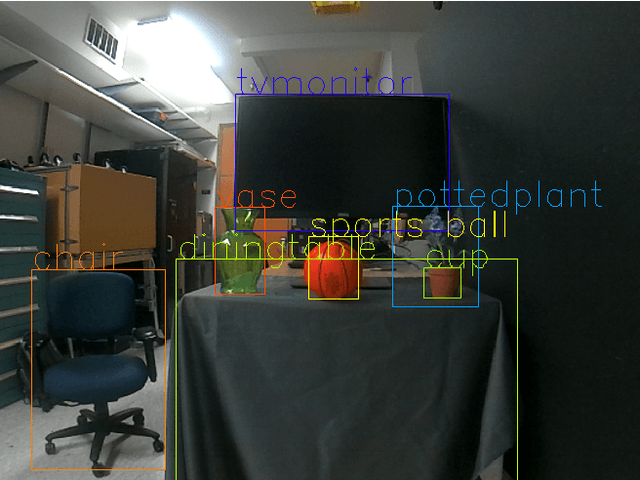

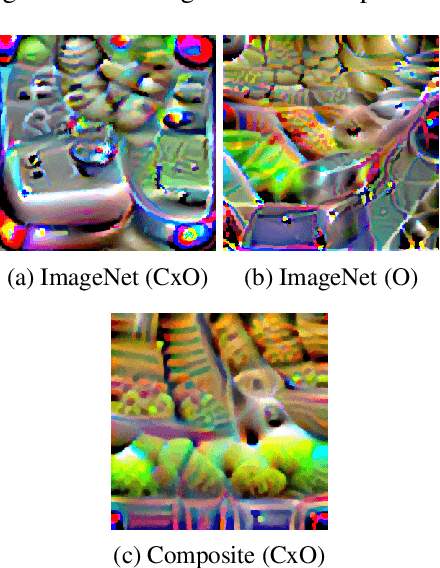

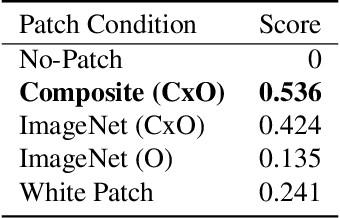

We explore metrics for evaluating how robust real-world adversarial attacks, in particular adversarial patches, are to changes in environmental conditions. We demonstrate how these metrics can be used to establish baseline model performance and uncover model biases, providing a reference against which real-world adversarial attacks can then be compared. We define a custom score for an adversarial condition that is adjusted for different environmental conditions, and we explore how this score changes with respect to specific environmental factors. Lastly, we propose two use cases for the confidence distributions obtained in each environmental condition.
