Robust Matrix Regression

Paper and Code

Nov 15, 2016

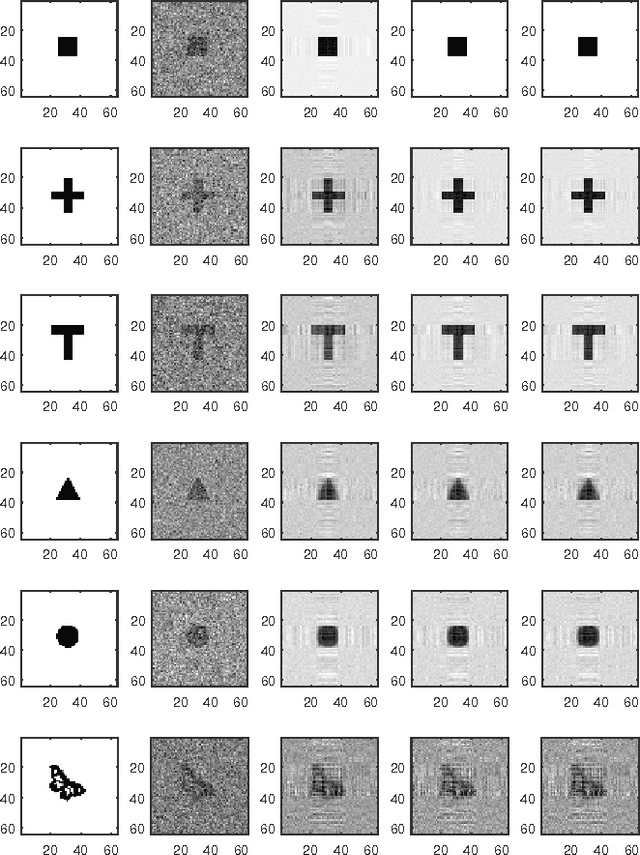

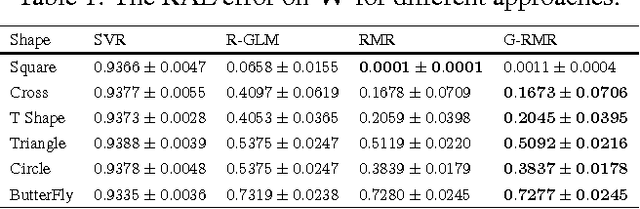

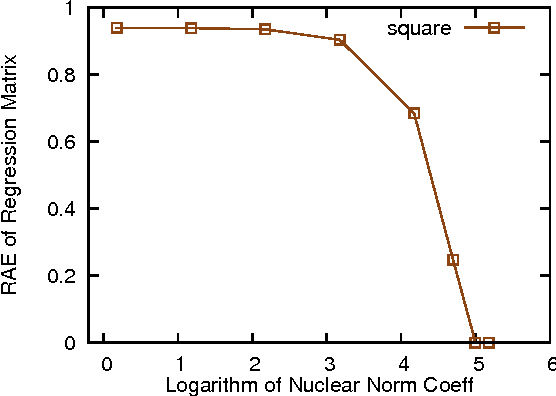

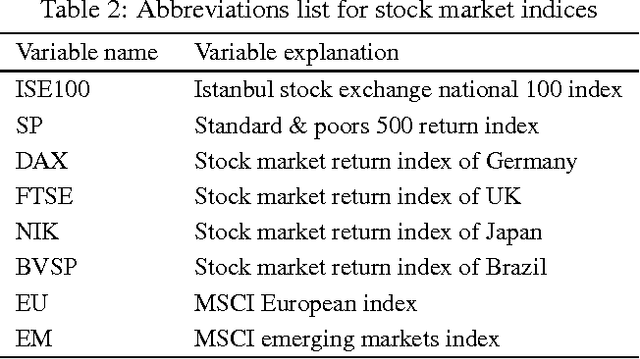

Modern technologies produce datasets with complex intrinsic structure that are more naturally represented as matrices than as vectors. To preserve this latent structure during processing, modern regression approaches incorporate a low-rank property into the model and achieve satisfactory performance in certain applications. These approaches all assume that both the predictors and the labels of every training pair are accurate. In real-world applications, however, training data are commonly contaminated by noise, which can undermine the robustness of these matrix regression methods. In this paper, we address this issue by introducing a novel robust matrix regression method. We also derive efficient proximal algorithms for model training. To evaluate our method, we apply it to real-world applications and compare it with existing approaches. Our method achieves state-of-the-art performance, which demonstrates its effectiveness and practical value.
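For readers unfamiliar with the terms, the following is a generic sketch of how a low-rank property and a proximal step commonly appear in matrix regression. It is not taken from the paper: the loss $\ell$, the weight $\lambda$, the step size $\tau$, and the use of the nuclear norm $\|\cdot\|_*$ as the low-rank surrogate are all assumptions made for illustration, and the paper's robust formulation may differ.

```latex
% Generic nuclear-norm-regularized matrix regression (illustrative assumption,
% not the paper's objective): X_i are matrix predictors, y_i are labels.
\begin{aligned}
\min_{W,\,b}\;& \sum_{i=1}^{n} \ell\bigl(y_i,\ \langle W, X_i\rangle + b\bigr) + \lambda\,\|W\|_* ,
\qquad \langle W, X_i\rangle = \operatorname{tr}\bigl(W^{\top} X_i\bigr), \\[4pt]
% Proximal operator of the nuclear norm: soft-threshold the singular values.
\operatorname{prox}_{\tau\|\cdot\|_*}(Z) \;=\;& U\,\operatorname{diag}\bigl(\max(\sigma_j - \tau,\ 0)\bigr)\,V^{\top},
\qquad Z = U\,\operatorname{diag}(\sigma_j)\,V^{\top}.
\end{aligned}
```

In this standard setting, a proximal algorithm alternates a gradient step on the smooth part of the loss with the singular value thresholding step above, which shrinks small singular values to zero and thereby encourages a low-rank $W$.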
