Robust and Accurate Object Detection via Self-Knowledge Distillation

Nov 14, 2021

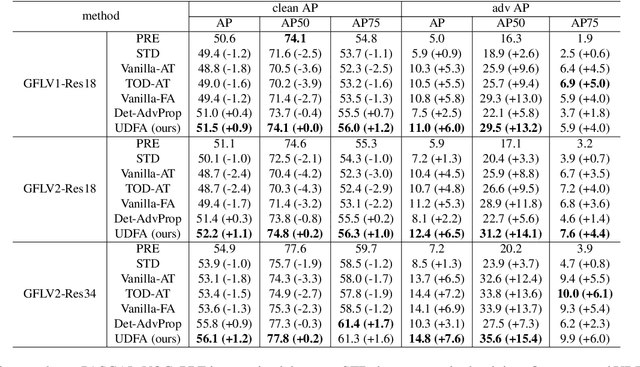

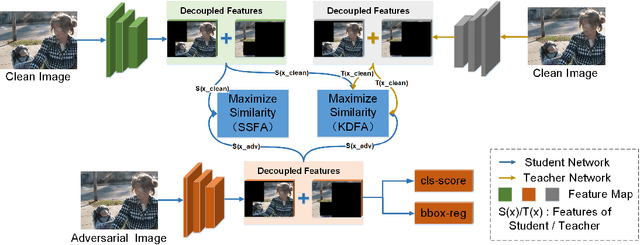

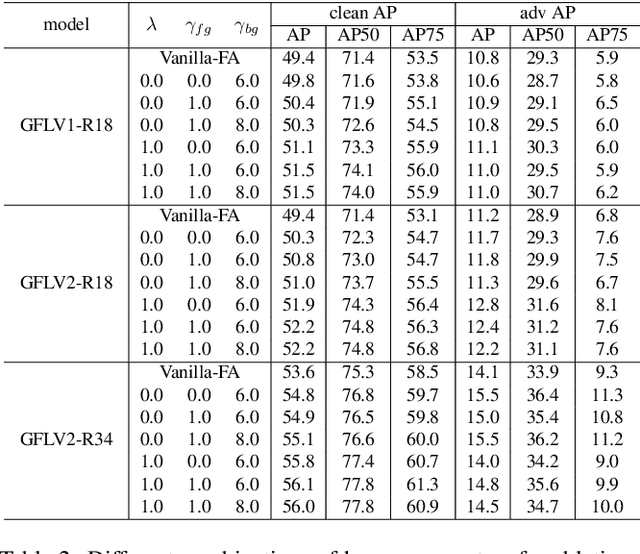

Object detection has achieved promising performance on clean datasets, but how to achieve a better trade-off between adversarial robustness and clean precision remains under-explored. Adversarial training is the mainstream method for improving robustness, but most existing works sacrifice clean precision, relative to standard training, to gain that robustness. In this paper, we propose Unified Decoupled Feature Alignment (UDFA), a novel fine-tuning paradigm that achieves better performance than existing methods by exploring the combination of self-knowledge distillation and adversarial training for object detection. We first use decoupled foreground/background features to construct a self-knowledge distillation branch between the clean feature representation from the pretrained detector (serving as the teacher) and the adversarial feature representation from the student detector. We then explore self-knowledge distillation from a new angle by decoupling the original branch into a self-supervised learning branch and a new self-knowledge distillation branch. Extensive experiments on the PASCAL-VOC and MS-COCO benchmarks show that UDFA can surpass both standard training and state-of-the-art adversarial training methods for object detection. For example, compared with the teacher detector, our approach on GFLV2 with ResNet-50 improves clean precision by 2.2 AP on PASCAL-VOC; compared with SOTA adversarial training methods, our approach improves clean precision by 1.6 AP while improving adversarial robustness by 0.5 AP. Our code will be available at https://github.com/grispeut/udfa.
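
To make the idea of decoupled foreground/background feature alignment more concrete, here is a minimal, framework-free sketch. It is not the authors' implementation: the abstract does not state the distance measure, the mask construction, or the weighting, so the squared-error distance, the box-based mask, and the names `foreground_mask`, `decoupled_alignment_loss`, `fg_weight`, and `bg_weight` are all assumptions made for illustration. The sketch compares a teacher's clean features with a student's adversarial features and averages the error separately over foreground and background cells.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]       # (x1, y1, x2, y2) in feature-map cells, end-exclusive
FeatureMap = List[List[List[float]]]  # indexed as [channel][row][col]


def foreground_mask(height: int, width: int, boxes: List[Box]) -> List[List[int]]:
    """Mark every cell covered by at least one ground-truth box as foreground (1)."""
    mask = [[0] * width for _ in range(height)]
    for x1, y1, x2, y2 in boxes:
        for y in range(max(0, y1), min(height, y2)):
            for x in range(max(0, x1), min(width, x2)):
                mask[y][x] = 1
    return mask


def decoupled_alignment_loss(
    teacher_clean: FeatureMap,
    student_adv: FeatureMap,
    mask: List[List[int]],
    fg_weight: float = 1.0,  # hypothetical weighting, not taken from the paper
    bg_weight: float = 1.0,  # hypothetical weighting, not taken from the paper
) -> float:
    """Mean squared error between teacher and student features,
    averaged separately over foreground and background cells (assumed form)."""
    fg_sum = bg_sum = 0.0
    fg_count = bg_count = 0
    for t_channel, s_channel in zip(teacher_clean, student_adv):
        for y, mask_row in enumerate(mask):
            for x, is_fg in enumerate(mask_row):
                sq_err = (s_channel[y][x] - t_channel[y][x]) ** 2
                if is_fg:
                    fg_sum += sq_err
                    fg_count += 1
                else:
                    bg_sum += sq_err
                    bg_count += 1
    # Guard against images with no foreground (or no background) cells.
    fg_loss = fg_sum / fg_count if fg_count else 0.0
    bg_loss = bg_sum / bg_count if bg_count else 0.0
    return fg_weight * fg_loss + bg_weight * bg_loss


if __name__ == "__main__":
    teacher = [[[0.0, 0.0], [0.0, 0.0]]]  # 1 channel, 2x2 clean features
    student = [[[1.0, 0.0], [0.0, 2.0]]]  # 1 channel, 2x2 adversarial features
    mask = foreground_mask(2, 2, [(0, 0, 1, 1)])
    print(decoupled_alignment_loss(teacher, student, mask))
```

Normalizing the two regions separately keeps a small object from being swamped by the much larger background area, which is one plausible motivation for decoupling; in an actual detector the features would be tensors from a backbone or neck and the loss would be computed with a deep learning framework.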
