Risk-Aware High-level Decisions for Automated Driving at Occluded Intersections with Reinforcement Learning

Paper and Code

Apr 09, 2020

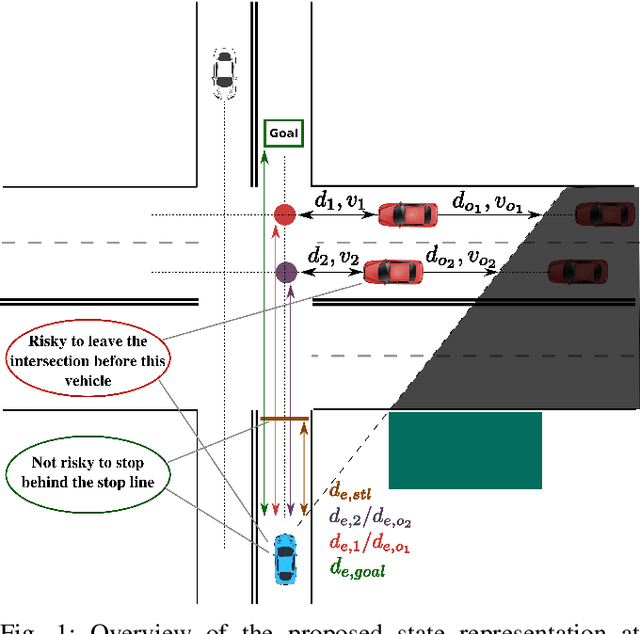

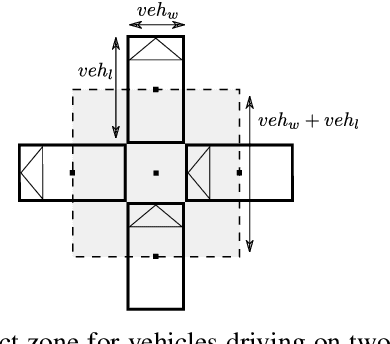

Reinforcement learning is nowadays a popular framework for solving different decision making problems in automated driving. However, there are still some remaining crucial challenges that need to be addressed for providing more reliable policies. In this paper, we propose a generic risk-aware DQN approach in order to learn high level actions for driving through unsignalized occluded intersections. The proposed state representation provides lane based information which allows to be used for multi-lane scenarios. Moreover, we propose a risk based reward function which punishes risky situations instead of only collision failures. Such rewarding approach helps to incorporate risk prediction into our deep Q network and learn more reliable policies which are safer in challenging situations. The efficiency of the proposed approach is compared with a DQN learned with conventional collision based rewarding scheme and also with a rule-based intersection navigation policy. Evaluation results show that the proposed approach outperforms both of these methods. It provides safer actions than collision-aware DQN approach and is less overcautious than the rule-based policy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge