Rethinking Machine Learning Development and Deployment for Edge Devices

Paper and Code

Jun 20, 2018

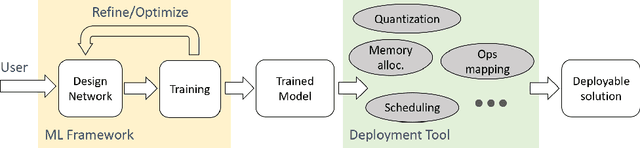

Machine learning (ML), especially deep learning is made possible by the availability of big data, enormous compute power and, often overlooked, development tools or frameworks. As the algorithms become mature and efficient, more and more ML inference is moving out of datacenters/cloud and deployed on edge devices. This model deployment process can be challenging as the deployment environment and requirements can be substantially different from those during model development. In this paper, we propose a new ML development and deployment approach that is specially designed and optimized for inference-only deployment on edge devices. We build a prototype and demonstrate that this approach can address all the deployment challenges and result in more efficient and high-quality solutions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge