Relational representation learning with spike trains

Paper and Code

May 18, 2022

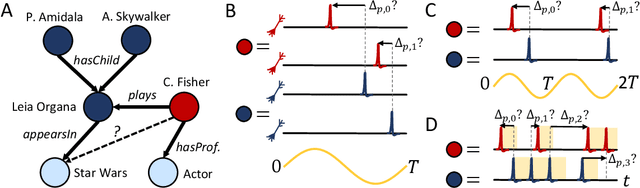

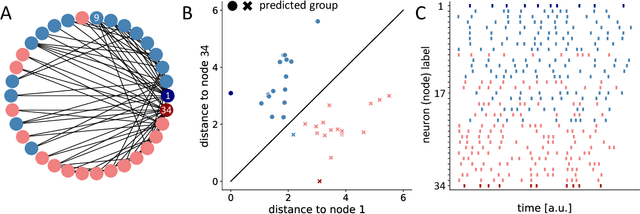

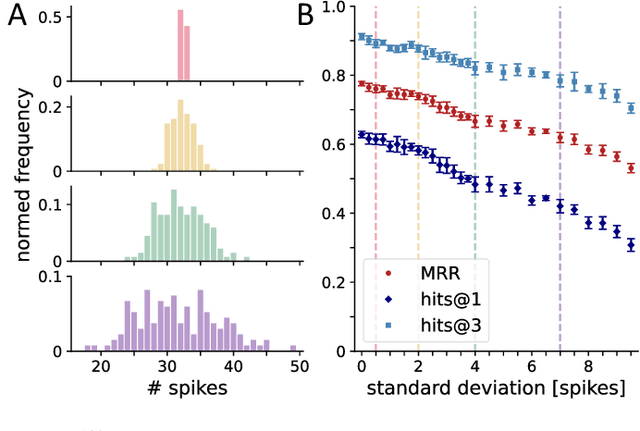

Relational representation learning has lately received an increase in interest due to its flexibility in modeling a variety of systems like interacting particles, materials and industrial projects for, e.g., the design of spacecraft. A prominent method for dealing with relational data are knowledge graph embedding algorithms, where entities and relations of a knowledge graph are mapped to a low-dimensional vector space while preserving its semantic structure. Recently, a graph embedding method has been proposed that maps graph elements to the temporal domain of spiking neural networks. However, it relies on encoding graph elements through populations of neurons that only spike once. Here, we present a model that allows us to learn spike train-based embeddings of knowledge graphs, requiring only one neuron per graph element by fully utilizing the temporal domain of spike patterns. This coding scheme can be implemented with arbitrary spiking neuron models as long as gradients with respect to spike times can be calculated, which we demonstrate for the integrate-and-fire neuron model. In general, the presented results show how relational knowledge can be integrated into spike-based systems, opening up the possibility of merging event-based computing and relational data to build powerful and energy efficient artificial intelligence applications and reasoning systems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge