Reinforcement Learning with Attention that Works: A Self-Supervised Approach

Apr 06, 2019

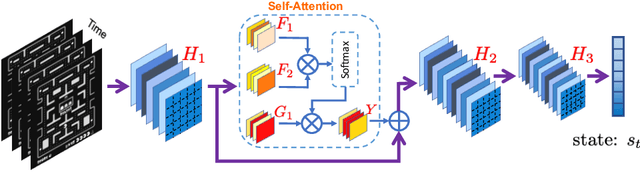

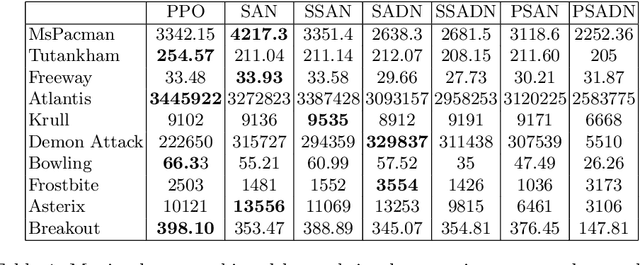

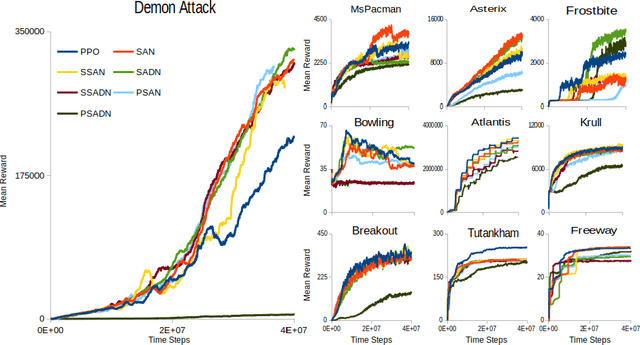

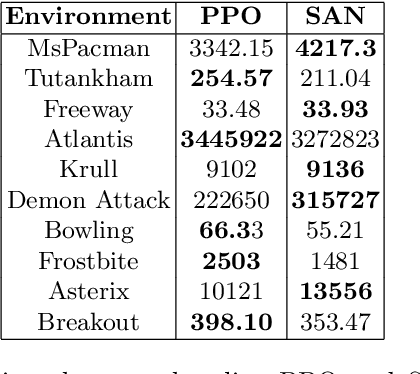

Attention models have had a significant positive impact on deep learning across a range of tasks. However, previous attempts at integrating attention with reinforcement learning have failed to produce significant improvements. We propose the first combination of self-attention and reinforcement learning that is capable of producing significant improvements, including new state-of-the-art results in the Arcade Learning Environment. Unlike the selective attention models used in previous attempts, which constrain the attention via preconceived notions of importance, our implementation utilises the Markovian properties inherent in the state input. Our method produces a faithful visualisation of the policy, focusing on the behaviour of the agent. Our experiments demonstrate that the trained policies use multiple simultaneous foci of attention and can modulate attention over time to deal with situations of partial observability.
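For readers unfamiliar with the mechanism, the following is a minimal sketch of generic scaled dot-product self-attention applied to a spatial feature map, such as one a convolutional encoder might produce from a game frame. It is not the paper's architecture: the abstract does not specify layer sizes or how attention is wired into the policy, so the function name `self_attention`, the projection matrices `w_q`, `w_k`, `w_v`, the 7x7x32 feature shape, and the averaged `saliency` map are all assumptions made for illustration.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention(features, w_q, w_k, w_v):
    """Scaled dot-product self-attention over spatial locations.

    features: (H, W, C) feature map (hypothetical encoder output).
    w_q, w_k, w_v: (C, D) projection matrices (assumed, randomly set below).
    Returns the attended map (H, W, D) and the weights (H*W, H*W).
    """
    h, w, c = features.shape
    x = features.reshape(h * w, c)           # one row per spatial location
    q, k, v = x @ w_q, x @ w_k, x @ w_v      # queries, keys, values
    scores = q @ k.T / np.sqrt(q.shape[-1])  # pairwise location similarity
    weights = softmax(scores, axis=-1)       # each row sums to 1
    out = weights @ v                        # weighted mix of values
    return out.reshape(h, w, -1), weights


# Illustrative shapes only; random data stands in for real features.
rng = np.random.default_rng(0)
H, W, C, D = 7, 7, 32, 16
features = rng.standard_normal((H, W, C))
w_q, w_k, w_v = (rng.standard_normal((C, D)) / np.sqrt(C) for _ in range(3))

attended, weights = self_attention(features, w_q, w_k, w_v)

# Average attention each location receives, reshaped for display as a heat map.
saliency = weights.mean(axis=0).reshape(H, W)
```

Because every location attends to every other location, nothing in this formulation restricts the weights to a single region, which is compatible with the multiple simultaneous foci described above. Overlaying a map such as `saliency` on the input frame is one common way to visualise attention, though the paper's own visualisation procedure may differ.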
