Recognizing Images with at most one Spike per Neuron


Jan 21, 2020

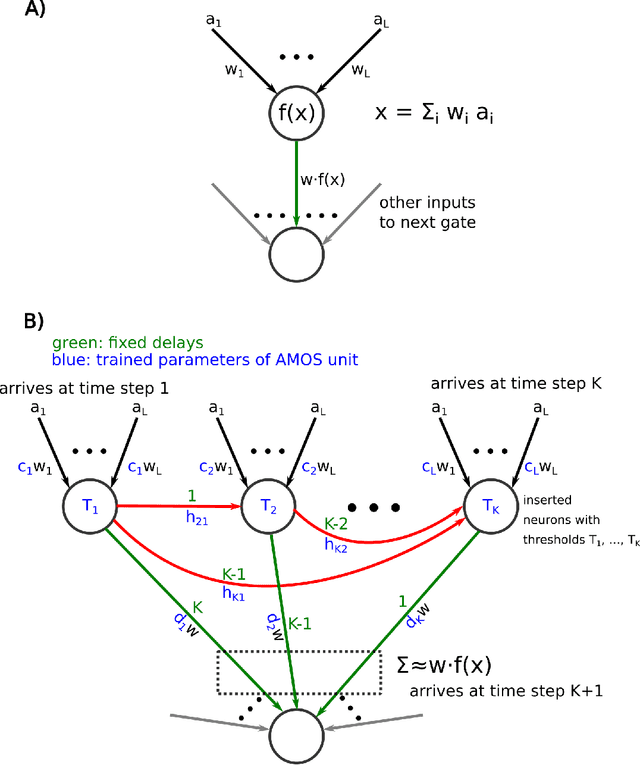

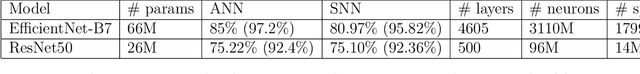

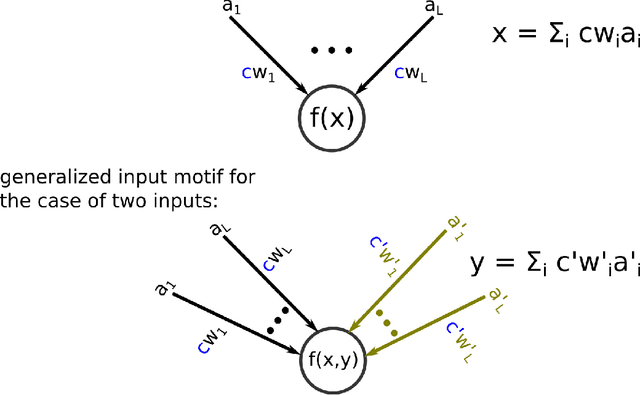

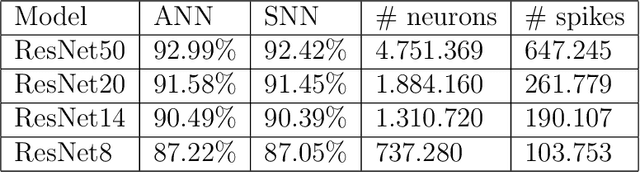

To port the performance of trained artificial neural networks (ANNs) to spiking neural networks (SNNs), which can be implemented in neuromorphic hardware with drastically reduced energy consumption, an efficient ANN-to-SNN conversion is needed. Previous conversion schemes focused on representing the analog output of a rectified linear (ReLU) gate in the ANN by the firing rate of a spiking neuron. This is not possible for other commonly used ANN gates, and it reduces throughput even for ReLU gates. We introduce a new conversion method in which a gate in the ANN, which can be of essentially any type, is emulated by a small circuit of spiking neurons, with At Most One Spike (AMOS) per neuron. We show that this AMOS conversion improves the accuracy of SNNs on ImageNet from 74.60% to 80.97%, bringing it within reach of the best available ANN accuracy (85.0%). The Top-5 accuracy of SNNs is raised to 95.82%, even closer to the best Top-5 performance of 97.2% for ANNs. In addition, AMOS conversion improves the latency and throughput of spike-based image classification by several orders of magnitude. These results suggest that SNNs provide a viable direction for developing highly energy-efficient hardware for AI that combines high performance with versatility of applications.
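To make the idea of replacing one analog gate with a small group of neurons that each fire at most once more concrete, here is a toy sketch. It is not the construction from the paper: it assumes, purely for illustration, a clipped ReLU gate whose output is approximated by a greedy binary expansion, where neuron k carries a fixed output weight of `x_max * 2**-k`. The names `amos_like_encode`, `decode`, `num_neurons`, and `x_max` are hypothetical.

```python
def amos_like_encode(x, num_neurons=8, x_max=1.0):
    """Toy encoding (an assumption, not the paper's method).

    Approximates a clipped ReLU output with `num_neurons` neurons,
    each of which emits at most one spike (0 or 1).
    """
    residual = min(max(x, 0.0), x_max)  # ReLU, then clip to [0, x_max]
    spikes, weights = [], []
    for k in range(1, num_neurons + 1):
        w = x_max * 2.0 ** (-k)          # fixed output weight of neuron k
        s = 1 if residual >= w else 0    # neuron k spikes at most once
        residual -= s * w
        spikes.append(s)
        weights.append(w)
    return spikes, weights


def decode(spikes, weights):
    """Weighted sum of the single spikes, as a downstream neuron would see it."""
    return sum(s * w for s, w in zip(spikes, weights))


if __name__ == "__main__":
    spikes, weights = amos_like_encode(0.3)
    print(spikes, decode(spikes, weights))
```

In this sketch the approximation error is bounded by `x_max * 2**-num_neurons`, so precision grows with the number of neurons rather than with the length of an observation window, which is the intuition behind the latency advantage over firing-rate coding described above.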
