Recognizing Facial Expressions in the Wild using Multi-Architectural Representations based Ensemble Learning with Distillation


Jul 04, 2021

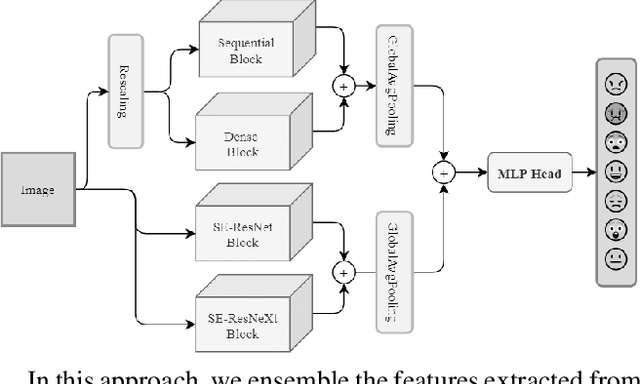

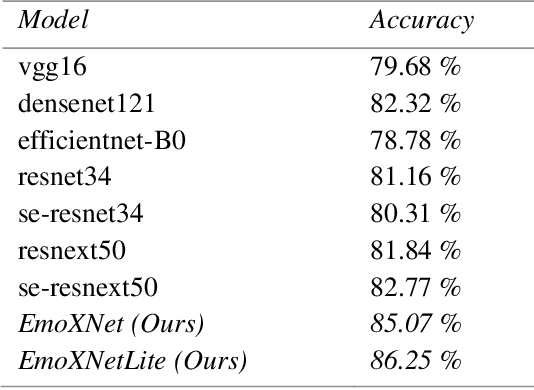

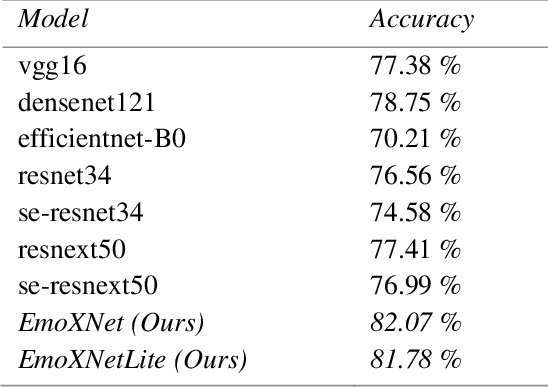

Facial expressions are among the most common universal forms of body language. In the past few years, automatic facial expression recognition (FER) has been an active field of research. However, it remains a challenging task because of various uncertainties and complications. At the same time, efficiency and performance are still essential for building robust systems. In this work, we propose two models named EmoXNet and EmoXNetLite. EmoXNet is an ensemble learning technique for learning convoluted facial representations, whereas EmoXNetLite is a distillation technique that transfers the knowledge from our ensemble model to an efficient deep neural network using label-smoothed soft labels, so that expressions can be detected effectively in real time. Both models attained higher accuracy than the models reported to date. The ensemble model (EmoXNet) attained 85.07% test accuracy on FER-2013 with FER+ annotations and 86.25% test accuracy on the Real-world Affective Faces Database (RAF-DB). The distilled model (EmoXNetLite), by contrast, attained 82.07% test accuracy on FER-2013 with FER+ annotations and 81.78% test accuracy on RAF-DB. Results show that our models seem to generalize well to new data and have learned to focus on relevant facial representations for expression recognition.
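As a rough illustration of distilling an ensemble into a smaller network with label-smoothed soft labels, the sketch below averages the softmax outputs of several ensemble members, smooths the result toward a uniform distribution, and scores a student's logits against those targets with cross-entropy. This is a minimal sketch under stated assumptions, not the authors' implementation: the function names, the simple averaging rule, the smoothing factor `eps=0.1`, and the three-class toy logits are all hypothetical and do not come from the paper.

```python
import math

def softmax(logits):
    # Numerically stable softmax over a list of floats.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def ensemble_soft_labels(member_logits):
    # Assumption: the ensemble prediction is the plain average of
    # each member's softmax probabilities.
    probs = [softmax(logits) for logits in member_logits]
    n = len(probs)
    return [sum(p[k] for p in probs) / n for k in range(len(probs[0]))]

def smooth(labels, eps=0.1):
    # Label smoothing: mix the soft labels with a uniform distribution.
    # eps is a hypothetical value chosen for illustration.
    k = len(labels)
    return [(1.0 - eps) * p + eps / k for p in labels]

def distillation_loss(student_logits, targets):
    # Cross-entropy between the smoothed teacher targets and the
    # student's softmax output.
    student = softmax(student_logits)
    return -sum(t * math.log(s) for t, s in zip(targets, student))

# Toy example with two ensemble members and three expression classes.
member_logits = [[2.0, 0.5, -1.0], [1.5, 1.0, -0.5]]
targets = smooth(ensemble_soft_labels(member_logits), eps=0.1)
loss = distillation_loss([1.0, 0.2, -0.3], targets)
```

In a real training loop this loss would be minimized over the student's parameters with a deep learning framework; the pure-Python version here only shows how the targets and the loss could be formed.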
