Recht-Ré Noncommutative Arithmetic-Geometric Mean Conjecture is False


Jun 02, 2020

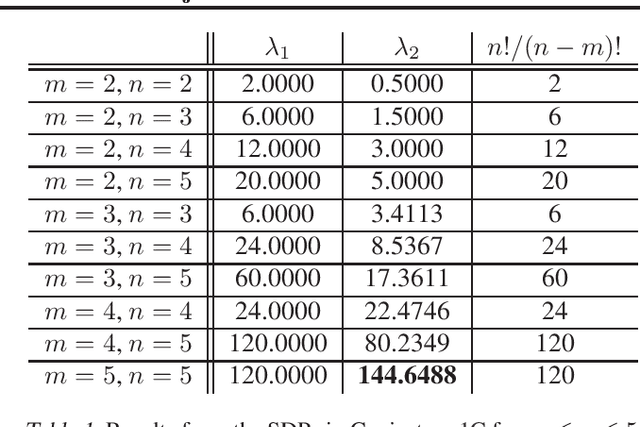

Stochastic optimization algorithms have become indispensable in modern machine learning. An unresolved foundational question in this area is the difference between with-replacement sampling and without-replacement sampling: does the latter have a superior convergence rate compared to the former? A groundbreaking result of Recht and Ré reduces the problem to a noncommutative analogue of the arithmetic-geometric mean inequality in which $n$ positive numbers are replaced by $n$ positive definite matrices. If this inequality holds for all $n$, then without-replacement sampling indeed outperforms with-replacement sampling. The conjectured Recht-Ré inequality has so far been established only for $n = 2$ and a special case of $n = 3$. We show that the Recht-Ré conjecture is false for general $n$. Our approach relies on the noncommutative Positivstellensatz, which allows us to reduce the conjectured inequality to a semidefinite program and the validity of the conjecture to certain bounds on the optimum values, which we show fail as soon as $n = 5$.
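To make the inequality concrete, here is a minimal numerical sketch. It assumes the form in which the conjecture is commonly stated, which is a paraphrase and is not quoted from the abstract: for positive semidefinite $A_1, \dots, A_n$ and $m \le n$, the spectral norm of the average of the products $A_{j_1} \cdots A_{j_m}$ over distinct indices is at most the spectral norm of $\left(\frac{1}{n} \sum_i A_i\right)^m$. All function names are hypothetical helpers introduced for illustration, not code from the paper.

```python
import itertools
import math

import numpy as np


def random_psd(d, rng):
    """Return a random d x d positive semidefinite matrix (illustrative helper)."""
    g = rng.standard_normal((d, d))
    return g @ g.T


def without_replacement_mean(mats, m):
    """Average of A_{j1} ... A_{jm} over ordered tuples of distinct indices."""
    n = len(mats)
    d = mats[0].shape[0]
    total = np.zeros((d, d))
    for idx in itertools.permutations(range(n), m):
        prod = np.eye(d)
        for j in idx:
            prod = prod @ mats[j]
        total += prod
    return total / math.perm(n, m)  # n! / (n - m)! ordered tuples


def with_replacement_mean(mats, m):
    """Average over all index tuples, which equals (mean of the A_i) ** m."""
    mean = sum(mats) / len(mats)
    return np.linalg.matrix_power(mean, m)


def spectral_norm(a):
    """Largest singular value of a."""
    return np.linalg.norm(a, 2)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    mats = [random_psd(3, rng) for _ in range(2)]  # n = 2, the established case
    lhs = spectral_norm(without_replacement_mean(mats, 2))
    rhs = spectral_norm(with_replacement_mean(mats, 2))
    print(f"without replacement: {lhs:.6f}, with replacement: {rhs:.6f}")
```

Random trials like this are only a sanity check for small cases. The disproof described above goes through a semidefinite program rather than random search, and this sketch does not attempt to reproduce it.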
