Read, Tag, and Parse All at Once, or Fully-neural Dependency Parsing

Paper and Code

Jun 05, 2017

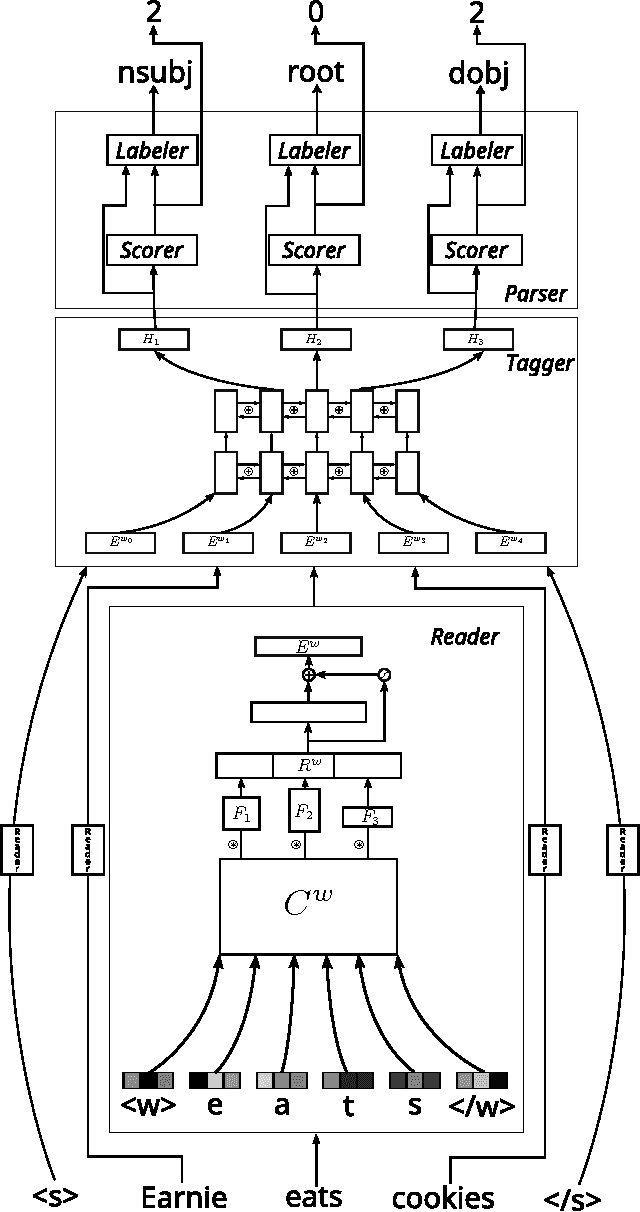

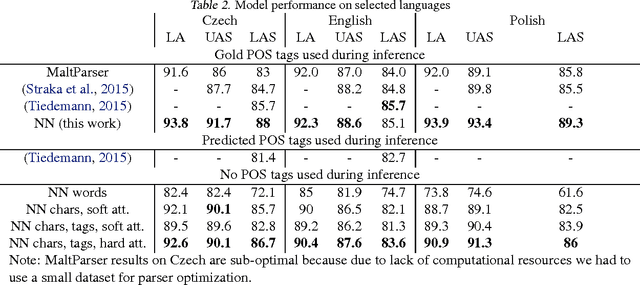

We present a dependency parser implemented as a single deep neural network that reads orthographic representations of words and directly generates dependencies and their labels. Unlike typical approaches to parsing, the model doesn't require part-of-speech (POS) tagging of the sentences. With proper regularization and additional supervision achieved with multitask learning we reach state-of-the-art performance on Slavic languages from the Universal Dependencies treebank: with no linguistic features other than characters, our parser is as accurate as a transition- based system trained on perfect POS tags.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge