RCDM: Enabling Robustness for Conditional Diffusion Model

Aug 05, 2024

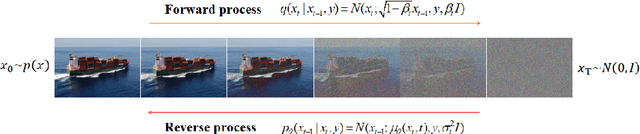

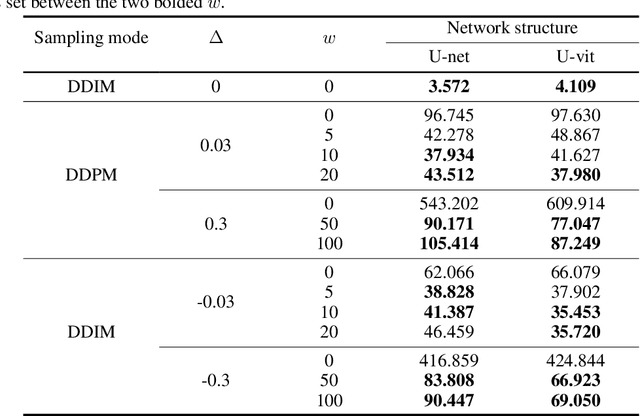

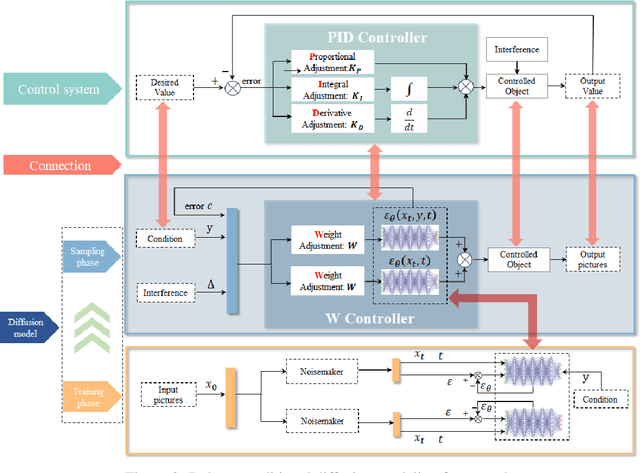

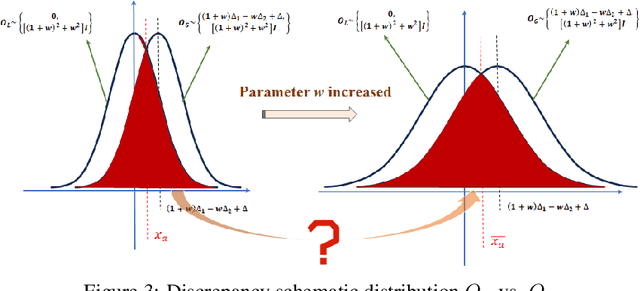

The conditional diffusion model (CDM) extends the standard diffusion model with additional control, which improves the quality and relevance of its outputs and makes it adaptable to a wider range of complex tasks. However, inaccurate conditional inputs in the inverse process of a CDM can easily cause the neural network to produce fixed errors, which reduces the adaptability of an otherwise well-trained model. Existing methods such as data augmentation, adversarial training, and robust optimization can improve robustness, but they often face challenges such as high computational complexity, limited applicability to unknown perturbations, and increased training difficulty. In this paper, we propose a lightweight solution, the Robust Conditional Diffusion Model (RCDM), which draws on control theory to dynamically reduce the impact of noise and significantly enhance the model's robustness. RCDM leverages the collaborative interaction between two neural networks, together with optimal control strategies derived from control theory, to optimize the weights of the two networks during the sampling process. Unlike conventional techniques, RCDM establishes a mathematical relationship between fixed errors and the weights of the two neural networks without incurring additional computational overhead. Extensive experiments on the MNIST and CIFAR-10 datasets demonstrate the effectiveness and adaptability of the proposed model.
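The abstract does not give RCDM's control law, so the following is only a toy sketch of the intuition behind relating a fixed error to network weights. It assumes (hypothetically) that the two networks' predictions are combined linearly as `w * a + (1 - w) * b`, that an inaccurate condition adds a constant `bias` to one network's output, and that the other network is unaffected. Under those assumptions the residual error of the mix is exactly `w * bias`. The names `mix_predictions`, `target`, `bias`, and `w` are illustrative and are not the paper's formulation or optimal-control procedure.

```python
from typing import List, Sequence


def mix_predictions(pred_a: Sequence[float], pred_b: Sequence[float], w: float) -> List[float]:
    """Hypothetical linear combination of two networks' outputs: w * a + (1 - w) * b."""
    if not 0.0 <= w <= 1.0:
        raise ValueError("w must lie in [0, 1]")
    return [w * a + (1.0 - w) * b for a, b in zip(pred_a, pred_b)]


# Toy setup (assumed, not from the paper): the ideal prediction, and a
# condition-dependent network whose output carries a fixed error `bias`
# introduced by an inaccurate conditional input.
target = [0.5, -1.0, 2.0]
bias = 0.3
pred_cond = [t + bias for t in target]  # shifted by the bad condition
pred_other = list(target)               # assumed unaffected by the condition

# The residual error of the mixed prediction shrinks in proportion to w.
for w in (1.0, 0.5, 0.2):
    mixed = mix_predictions(pred_cond, pred_other, w)
    errors = [round(m - t, 6) for m, t in zip(mixed, target)]
    print(f"w={w}: errors={errors}")
```

In this simplified setting, lowering the weight on the affected network suppresses the fixed error linearly. A controller such as the one the abstract describes would presumably choose these weights adaptively during sampling rather than fixing them by hand.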
