Ranking for Individual and Group Fairness Simultaneously

Paper and Code

Sep 24, 2020

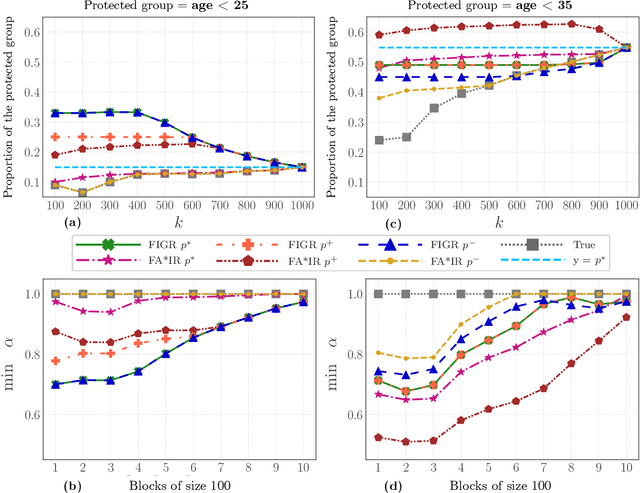

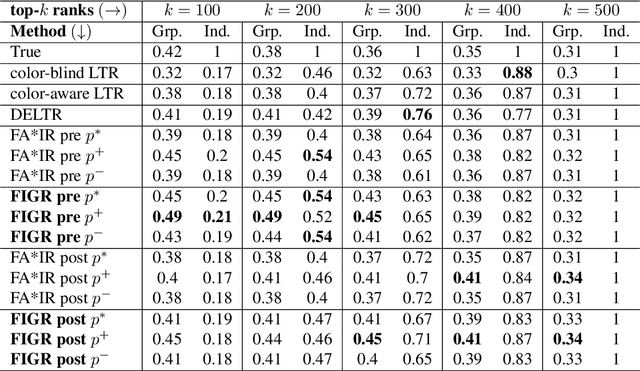

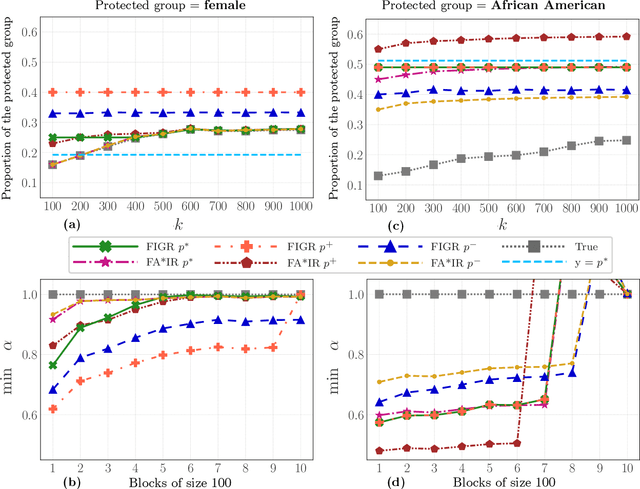

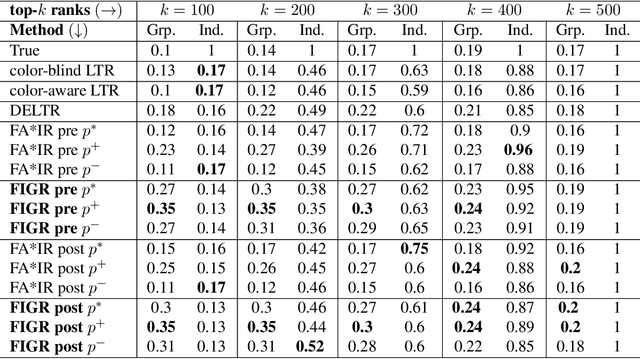

Search and recommendation systems, such as search engines, recruiting tools, online marketplaces, news, and social media, output ranked lists of content, products, and sometimes people. Credit ratings, standardized tests, and risk assessments output only a score, but are also used implicitly for ranking. Bias in such ranking systems, especially among the top ranks, can worsen social and economic inequalities, polarize opinions, and reinforce stereotypes. On the other hand, a bias correction for minority groups can cause more harm if it is perceived as favoring group-fair outcomes over meritocracy. In this paper, we study the trade-off between individual fairness and group fairness in ranking. We define individual fairness based on how close the predicted rank of each item is to its true rank, and prove a lower bound on the trade-off achievable for simultaneous individual and group fairness in ranking. We give a fair ranking algorithm that takes any given ranking and outputs another ranking with simultaneous individual and group fairness guarantees comparable to the lower bound we prove. Our algorithm can be used both to pre-process training data and to post-process the output of existing ranking algorithms. Our experimental results show that our algorithm performs better than state-of-the-art fair learning-to-rank and fair post-processing baselines.
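To make the two notions in the abstract concrete, the following is a minimal illustrative sketch and not the paper's algorithm or its exact definitions. It assumes individual fairness can be approximated by the largest change in any item's position relative to a given "true" ranking, and group fairness by each group's share of the top-k positions. The function names, the toy items, and the group labels are all hypothetical.

```python
from collections import Counter


def max_rank_displacement(true_ranking, new_ranking):
    """Largest absolute change in position of any item between two rankings.

    Assumed proxy for individual fairness: smaller means each item stays
    closer to its true rank.
    """
    true_pos = {item: i for i, item in enumerate(true_ranking)}
    return max(abs(true_pos[item] - i) for i, item in enumerate(new_ranking))


def prefix_group_shares(ranking, group_of, k):
    """Fraction of each group among the top-k items of a ranking.

    Assumed proxy for group fairness: shares closer to a target
    proportion mean a more group-fair prefix.
    """
    counts = Counter(group_of[item] for item in ranking[:k])
    return {group: count / k for group, count in counts.items()}


# Hypothetical toy data: six items, two groups of three.
true_ranking = ["a", "b", "c", "d", "e", "f"]
group_of = {"a": "G1", "b": "G1", "c": "G1", "d": "G2", "e": "G2", "f": "G2"}

# A hand-written re-ranking that interleaves the two groups.
reranked = ["a", "d", "b", "e", "c", "f"]

print(max_rank_displacement(true_ranking, reranked))
print(prefix_group_shares(true_ranking, group_of, 4))
print(prefix_group_shares(reranked, group_of, 4))
```

In this toy case, interleaving the groups balances the top-4 shares at the cost of moving some items away from their true positions, which is the kind of tension the abstract describes.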
