Quantitative Impact of Label Noise on the Quality of Segmentation of Brain Tumors on MRI scans


Sep 18, 2019

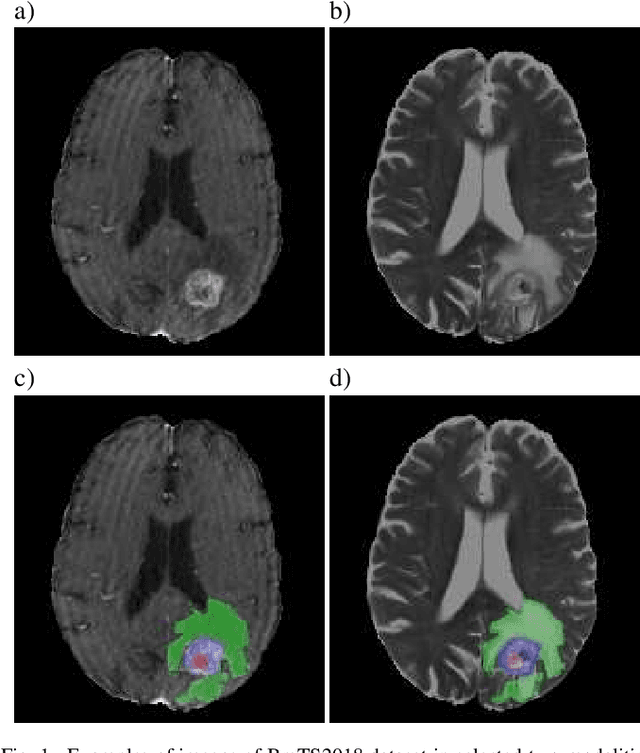

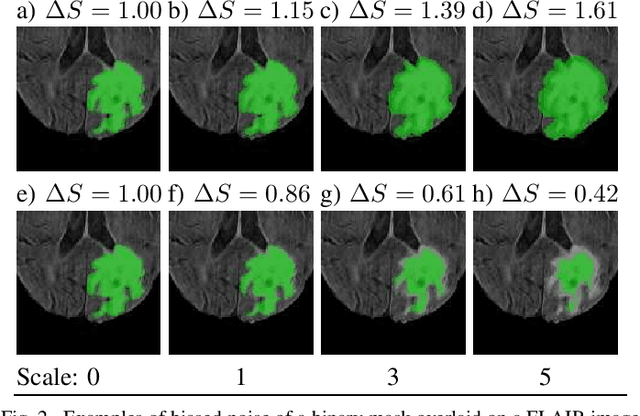

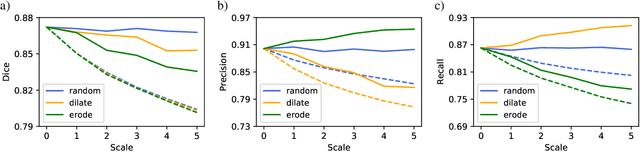

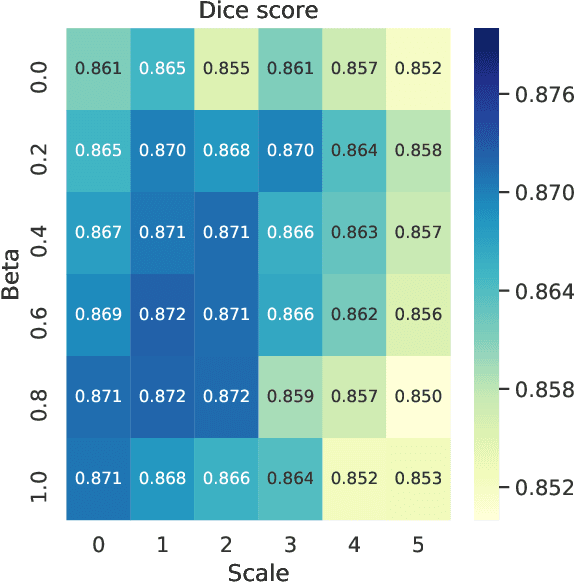

Over the last few years, deep learning has proven to be an effective solution to many problems, such as image and text classification. Recently, deep learning-based solutions have outperformed humans on selected benchmark datasets, which is promising for both scientific and real-world applications. Training deep learning models requires vast amounts of high-quality data to achieve such performance. In real-world scenarios, obtaining a large, coherent, and properly labeled dataset is a challenging task. This is especially true in medical applications, where high-quality data and annotations are scarce and the number of expert annotators is limited. In this paper, we investigate the impact of corrupted ground-truth masks on the performance of a neural network for a brain tumor segmentation task. Our findings suggest that (a) the performance degrades about 8% less than could be expected from simulations, (b) a neural network learns the simulated biases of annotators, and (c) these biases can be partially mitigated by using an inversely biased Dice loss function.
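To make the idea of corrupted ground-truth masks concrete, the following is a minimal sketch and not the authors' pipeline. It assumes, purely for illustration, that an annotator bias can be imitated by systematically over-segmenting (dilating) or under-segmenting (eroding) a binary mask, and it measures the resulting disagreement with the standard Dice coefficient. The function names `dice_score`, `dilate`, and `erode`, the 4-connected neighborhood, and the toy square "tumor" are all hypothetical choices.

```python
import numpy as np


def dice_score(a, b, eps=1e-7):
    """Dice coefficient 2|A∩B| / (|A| + |B|) between two binary masks."""
    a = a.astype(bool)
    b = b.astype(bool)
    intersection = np.logical_and(a, b).sum()
    return (2.0 * intersection + eps) / (a.sum() + b.sum() + eps)


def dilate(mask, iterations=1):
    """4-connected binary dilation (assumed model of an over-segmenting annotator)."""
    out = mask.astype(bool)
    for _ in range(iterations):
        # Pad with background so shifted views stay inside the array.
        p = np.pad(out, 1, mode="constant", constant_values=False)
        out = (
            p[1:-1, 1:-1]   # the pixel itself
            | p[:-2, 1:-1]  # neighbor above
            | p[2:, 1:-1]   # neighbor below
            | p[1:-1, :-2]  # neighbor to the left
            | p[1:-1, 2:]   # neighbor to the right
        )
    return out


def erode(mask, iterations=1):
    """4-connected binary erosion (assumed model of an under-segmenting annotator).

    Implemented as the complement of dilating the complement, so pixels
    outside the image are treated as foreground and the image border
    itself does not erode the mask.
    """
    return ~dilate(~mask.astype(bool), iterations)


if __name__ == "__main__":
    # Toy "tumor": a 16x16 square inside a 32x32 slice.
    clean = np.zeros((32, 32), dtype=bool)
    clean[8:24, 8:24] = True

    for name, noisy in [("dilated", dilate(clean)), ("eroded", erode(clean))]:
        print(f"{name}: Dice vs. clean mask = {dice_score(clean, noisy):.3f}")
```

Even a one-pixel systematic shift of the boundary lowers the Dice score of this small region noticeably, which illustrates why the agreement between corrupted and clean masks is a natural baseline against which to compare the degradation of a trained network.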
