Quadruped Locomotion on Non-Rigid Terrain using Reinforcement Learning


Jul 07, 2021

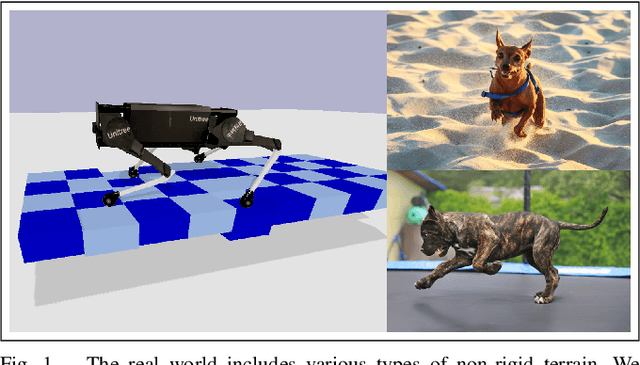

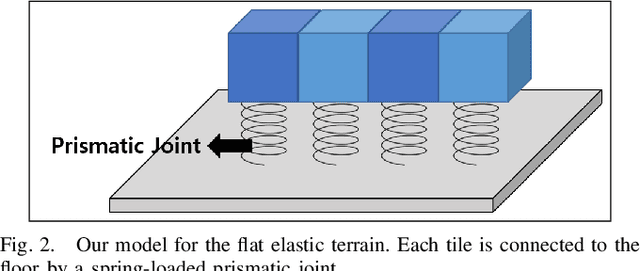

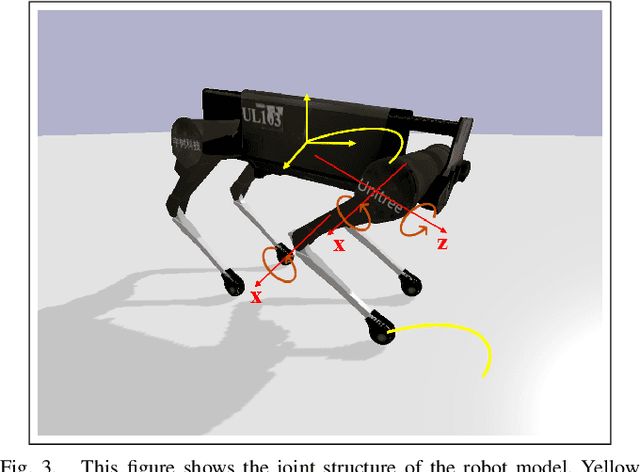

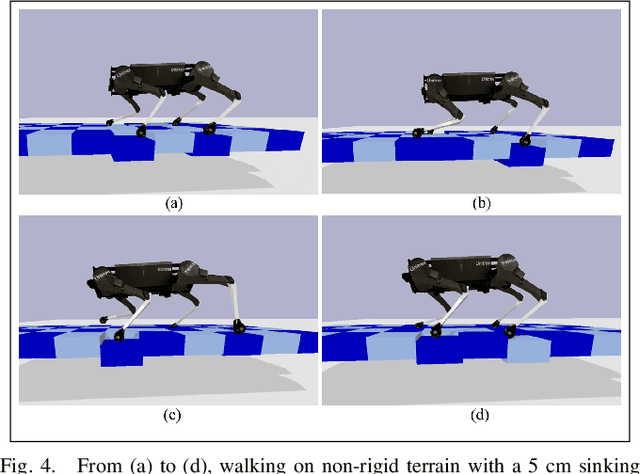

Legged robots need to be capable of walking on diverse terrain. In this paper, we present a novel reinforcement learning framework for learning locomotion on non-rigid dynamic terrains. Specifically, our framework can generate quadruped locomotion on flat elastic terrain that consists of a matrix of tiles that move up and down passively when pushed by the robot's feet. A trained robot with a 55 cm base length can walk on terrain that sinks by up to 5 cm. We propose a set of observation and reward terms that enable this locomotion, and we found that it is crucial to include end-effector history and end-effector velocity terms in the observation. We show the effectiveness of our method by training the robot under various terrain conditions.
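To make the observation design concrete, the following is a minimal sketch of how end-effector history and end-effector velocity terms might be assembled into a flat observation vector. It is an illustration under stated assumptions, not the authors' implementation: the class name `EndEffectorObservation`, the history length of 3, the base-frame foot positions, and the finite-difference velocity estimate are all hypothetical choices that the abstract does not specify.

```python
from collections import deque

NUM_FEET = 4      # quadruped: four end-effectors
HISTORY_LEN = 3   # hypothetical number of past frames kept


class EndEffectorObservation:
    """Hypothetical helper that builds the end-effector part of an observation."""

    def __init__(self, history_len=HISTORY_LEN):
        # Ring buffer of past foot-position frames (oldest first).
        self.history = deque(maxlen=history_len)

    def update(self, foot_positions, dt):
        """Append the newest frame and return a flat observation list.

        foot_positions: list of (x, y, z) tuples, one per foot, assumed to be
        expressed in the robot base frame. dt: control time step in seconds.
        """
        if self.history:
            prev = self.history[-1]
            # Assumption: velocity is estimated by finite differences;
            # a simulator could also provide it directly.
            velocities = [
                tuple((c - p) / dt for c, p in zip(cur, old))
                for cur, old in zip(foot_positions, prev)
            ]
        else:
            velocities = [(0.0, 0.0, 0.0)] * len(foot_positions)

        self.history.append([tuple(p) for p in foot_positions])

        # Until the buffer is full, pad by repeating the oldest frame.
        frames = list(self.history)
        while len(frames) < self.history.maxlen:
            frames.insert(0, frames[0])

        obs = [c for frame in frames for foot in frame for c in foot]
        obs += [c for v in velocities for c in v]
        return obs


if __name__ == "__main__":
    enc = EndEffectorObservation()
    feet = [(0.2, 0.1, -0.30)] * NUM_FEET
    obs = enc.update(feet, dt=0.01)
    print(len(obs))  # history (3 * 4 * 3) + velocity (4 * 3) values
```

In a full pipeline these values would be concatenated with the remaining observation terms (for example joint states and base orientation) before being passed to the policy. The intuition is that on a sinking tile the foot keeps moving after contact, so recent foot positions and velocities carry information about terrain compliance that a single snapshot does not.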
