QSAN: A Near-term Achievable Quantum Self-Attention Network

Paper and Code

Jul 18, 2022

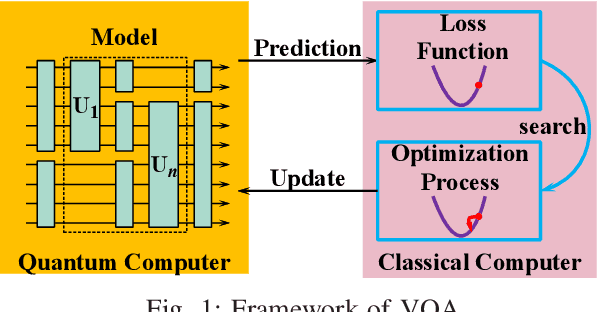

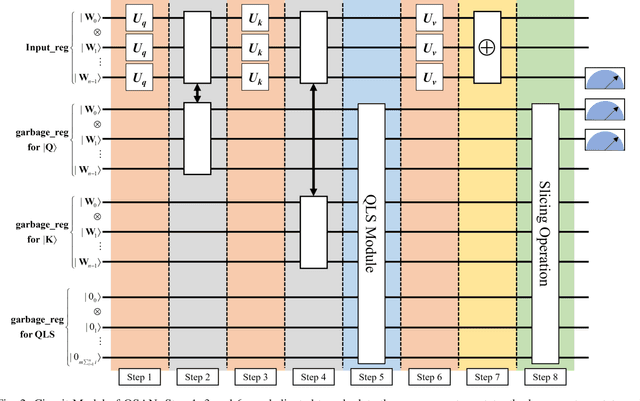

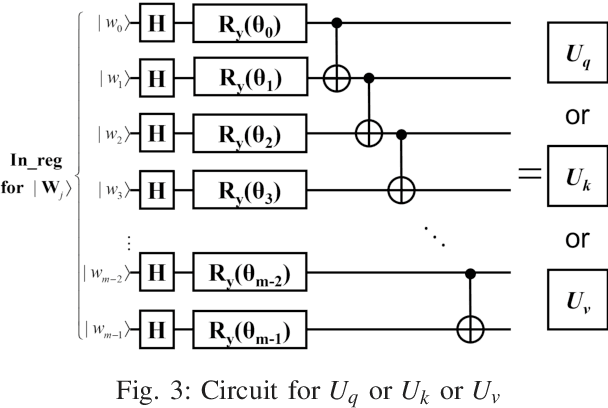

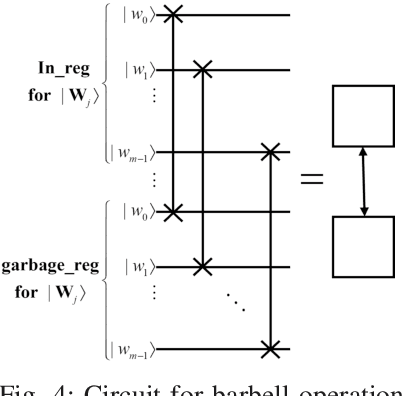

The Self-Attention Mechanism (SAM), an important component of machine learning, has received relatively little investigation in the field of quantum machine learning. Inspired by the Variational Quantum Algorithm (VQA) framework and SAM, a Quantum Self-Attention Network (QSAN) that can be implemented on a near-term quantum computer is proposed. Theoretically, the Quantum Self-Attention Mechanism (QSAM), a novel interpretation of SAM with linearization and logicalization, is defined. Within it, Quantum Logical Similarity (QLS) is first presented so that QSAM executes better on quantum computers, since inner product operations are replaced with logical operations; a QLS-based density matrix, named the Quantum Bit Self-Attention Score Matrix (QBSASM), is then derived to represent the output distribution effectively. Moreover, QSAN is implemented based on the QSAM framework, and its practical quantum circuit is designed with 5 modules. Finally, QSAN is tested on a quantum computer with a small sample of data. The experimental results show that QSAN can converge faster in the quantum natural gradient descent framework and reassign weights to word vectors. These results indicate that QSAN can provide attention with quantum characteristics faster, laying the foundation for Quantum Natural Language Processing (QNLP).
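For orientation, the classical SAM that QSAM reinterprets can be sketched in a few lines of plain Python. This is the standard scaled dot-product form, not the QSAN circuit: the identity projections (Q = K = V = X), the function names, and the toy word vectors are assumptions made for illustration only. The inner products computed in `self_attention` are the operations that, according to the abstract, QLS replaces with logical operations.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(X):
    """Classical scaled dot-product self-attention.

    Assumption: identity projections (Q = K = V = X) to keep the sketch short.
    Returns the attention weights and the reweighted vectors.
    """
    d = len(X[0])
    # Pairwise inner products between word vectors, scaled by sqrt(d).
    scores = [[sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in X]
              for q in X]
    # Each row becomes a probability distribution over the other words.
    weights = [softmax(r) for r in scores]
    # Each output vector is a weighted sum of all input vectors.
    out = [[sum(w * v[j] for w, v in zip(wrow, X)) for j in range(d)]
           for wrow in weights]
    return weights, out

# Hypothetical toy "word vectors", not data from the paper.
words = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights, out = self_attention(words)
```

Each row of `weights` sums to 1, and `out` holds the reweighted word vectors, which corresponds to the "reassign weights to word vectors" behavior the abstract reports for QSAN.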
