Private Truly-Everlasting Robust-Prediction

Paper and Code

Jan 09, 2024

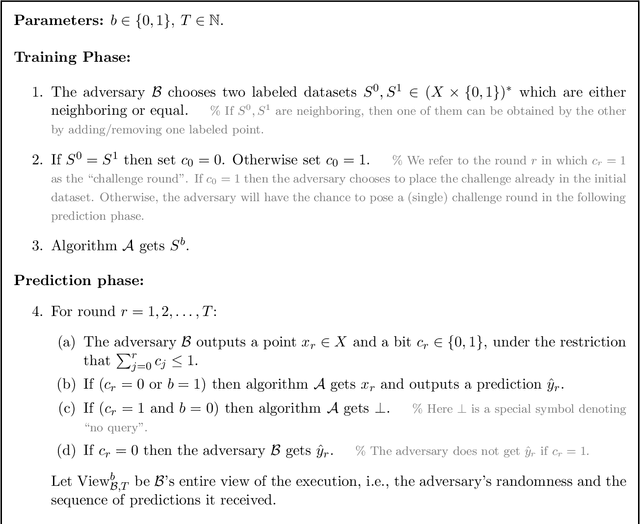

Private Everlasting Prediction (PEP), recently introduced by Naor et al. [2023], is a model for differentially private learning in which the learner never publicly releases a hypothesis. Instead, it provides black-box access to a "prediction oracle" that can predict the labels of an endless stream of unlabeled examples drawn from the underlying distribution. Importantly, PEP provides privacy both for the initial training set and for the endless stream of classification queries. We present two conceptual modifications to the definition of PEP, as well as new constructions exhibiting significant improvements over prior work. Specifically, (1) Robustness: PEP only guarantees accuracy provided that all the classification queries are drawn from the correct underlying distribution. A few out-of-distribution queries might break the validity of the prediction oracle for future queries, even for future queries which are sampled from the correct distribution. We incorporate robustness against such poisoning attacks into the definition of PEP, and show how to obtain it. (2) Dependence of the privacy parameter $\delta$ in the time horizon: We present a relaxed privacy definition, suitable for PEP, that allows us to disconnect the privacy parameter $\delta$ from the number of total time steps $T$. This allows us to obtain algorithms for PEP whose sample complexity is independent from $T$, thereby making them "truly everlasting". This is in contrast to prior work where the sample complexity grows with $polylog(T)$. (3) New constructions: Prior constructions for PEP exhibit sample complexity that is quadratic in the VC dimension of the target class. We present new constructions of PEP for axis-aligned rectangles and for decision-stumps that exhibit sample complexity linear in the dimension (instead of quadratic). We show that our constructions satisfy very strong robustness properties.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge