Privacy-Preserving Dynamic Assortment Selection

Paper and Code

Oct 29, 2024

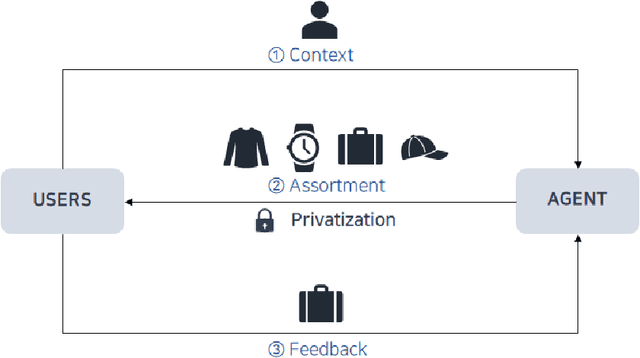

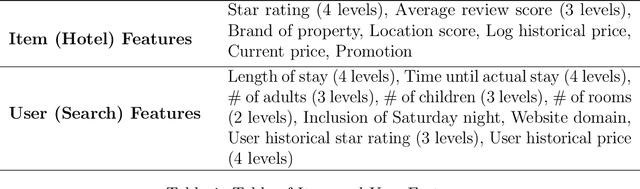

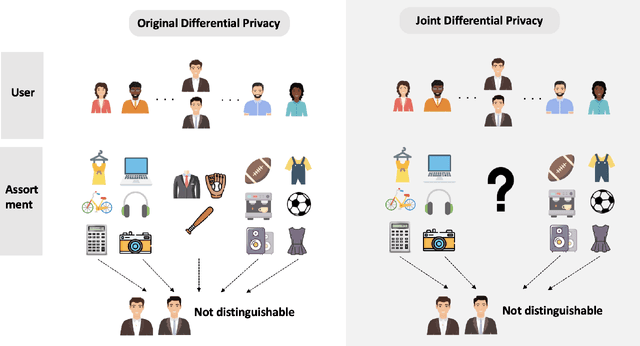

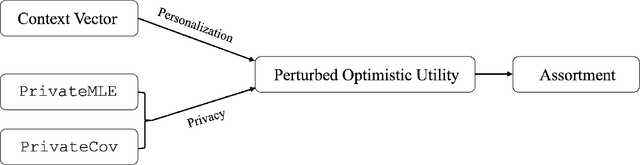

With the growing demand for personalized assortment recommendations, concerns over data privacy have intensified, highlighting the urgent need for effective privacy-preserving strategies. This paper presents a novel framework for privacy-preserving dynamic assortment selection using the multinomial logit (MNL) bandits model. Our approach employs a perturbed upper confidence bound method, integrating calibrated noise into user utility estimates to balance between exploration and exploitation while ensuring robust privacy protection. We rigorously prove that our policy satisfies Joint Differential Privacy (JDP), which better suits dynamic environments than traditional differential privacy, effectively mitigating inference attack risks. This analysis is built upon a novel objective perturbation technique tailored for MNL bandits, which is also of independent interest. Theoretically, we derive a near-optimal regret bound of $\tilde{O}(\sqrt{T})$ for our policy and explicitly quantify how privacy protection impacts regret. Through extensive simulations and an application to the Expedia hotel dataset, we demonstrate substantial performance enhancements over the benchmark method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge