Priority-based Post-Processing Bias Mitigation for Individual and Group Fairness

Paper and Code

Jan 31, 2021

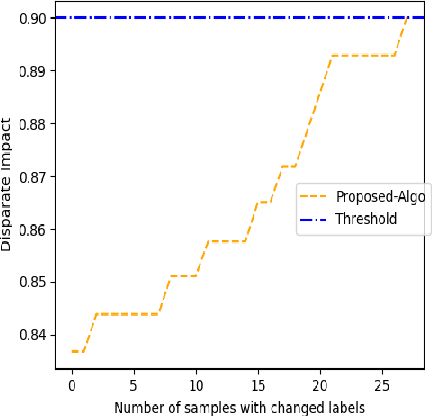

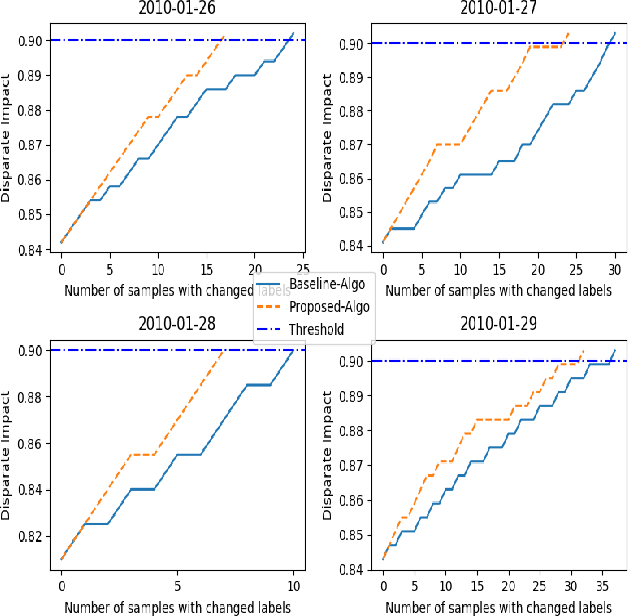

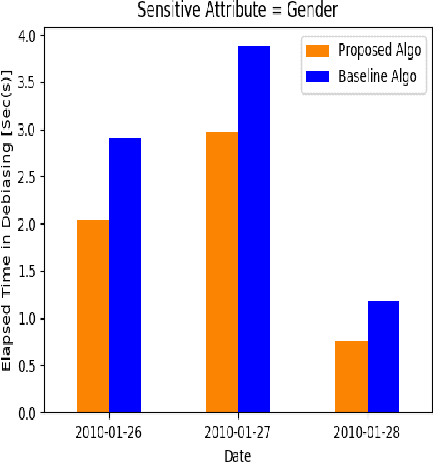

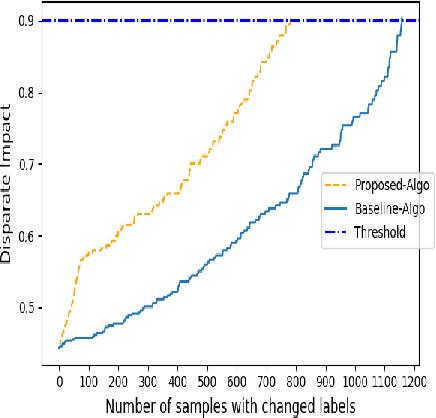

Previous post-processing bias mitigation algorithms on both group and individual fairness don't work on regression models and datasets with multi-class numerical labels. We propose a priority-based post-processing bias mitigation on both group and individual fairness with the notion that similar individuals should get similar outcomes irrespective of socio-economic factors and more the unfairness, more the injustice. We establish this proposition by a case study on tariff allotment in a smart grid. Our novel framework establishes it by using a user segmentation algorithm to capture the consumption strategy better. This process ensures priority-based fair pricing for group and individual facing the maximum injustice. It upholds the notion of fair tariff allotment to the entire population taken into consideration without modifying the in-built process for tariff calculation. We also validate our method and show superior performance to previous work on a real-world dataset in criminal sentencing.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge