Preserving Privacy and Security in Federated Learning

Paper and Code

Feb 07, 2022

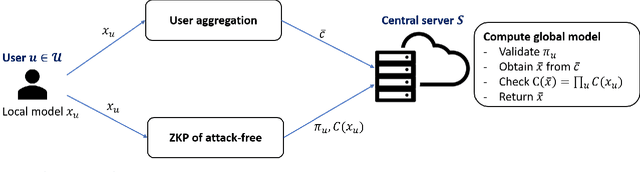

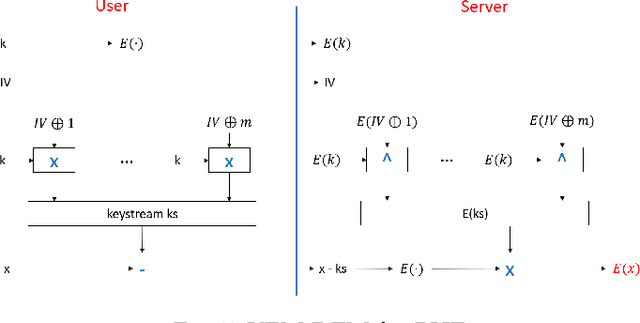

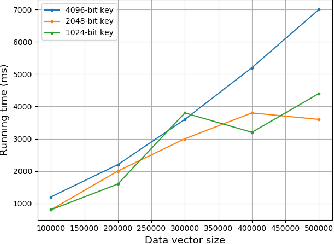

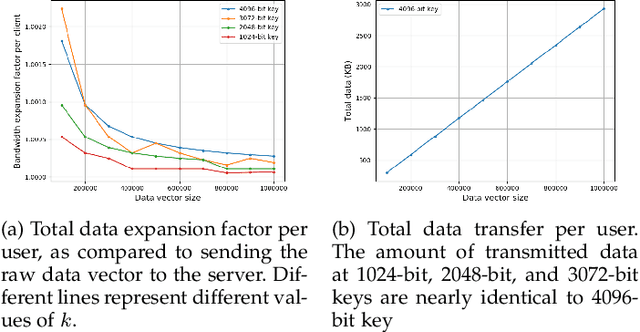

Federated learning is known to be vulnerable to both security and privacy issues. Existing research has focused either on preventing poisoning attacks from users or on protecting the privacy of users' model updates. However, integrating these two lines of research remains a crucial challenge, since their threat models often conflict with one another. In this work, we develop a framework that combines secure aggregation with defense mechanisms against poisoning attacks from users, while maintaining their respective privacy guarantees. We leverage a zero-knowledge proof protocol to let users run the defense mechanisms locally and attest to the result to the central server without revealing any information about their model updates. Furthermore, we propose a new secure aggregation protocol for federated learning, based on homomorphic encryption, that is robust against malicious users. Our framework enables the central server to identify poisoned model updates without violating the privacy guarantees of secure aggregation. Finally, we analyze the computation and communication complexity of our proposed solution and benchmark its performance.
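To make the idea of aggregation under homomorphic encryption concrete, the following is a minimal sketch using the textbook Paillier cryptosystem, which is additively homomorphic: multiplying ciphertexts yields an encryption of the sum of the plaintexts. It is an illustration only and not the protocol proposed in the paper. The function names (`keygen`, `encrypt`, `decrypt`, `aggregate`, `decode_signed`), the integer-valued updates, and the toy key size are all assumptions made for this example, and the sketch omits the zero-knowledge proofs, key management, and robustness against malicious users that the paper addresses.

```python
import math
import secrets

def keygen(p, q):
    """Textbook Paillier key generation with g = n + 1 (toy parameters, not secure)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    g = n + 1
    # mu is the inverse of L(g^lam mod n^2) modulo n, where L(x) = (x - 1) // n
    mu = pow((pow(g, lam, n * n) - 1) // n, -1, n)
    return (n, g), (lam, mu)

def encrypt(pub, m):
    """Encrypt integer m; negative values are encoded modulo n."""
    n, g = pub
    while True:
        r = secrets.randbelow(n - 1) + 1  # random r in [1, n-1]
        if math.gcd(r, n) == 1:
            break
    return (pow(g, m % n, n * n) * pow(r, n, n * n)) % (n * n)

def decrypt(pub, priv, c):
    n, _ = pub
    lam, mu = priv
    return ((pow(c, lam, n * n) - 1) // n * mu) % n

def aggregate(pub, ciphertexts):
    """Multiply ciphertexts; the product decrypts to the sum of the plaintexts."""
    n, _ = pub
    acc = 1
    for c in ciphertexts:
        acc = (acc * c) % (n * n)
    return acc

def decode_signed(pub, m):
    """Map a residue modulo n back to a signed integer."""
    n, _ = pub
    return m if m <= n // 2 else m - n

# Hypothetical setup: two Mersenne primes as a toy key (far too small for real use).
pub, priv = keygen(2**31 - 1, 2**61 - 1)

# Hypothetical quantized model updates from three users.
updates = [[3, -1, 4], [1, 5, -9], [2, 6, 5]]

# Each user encrypts its update coordinate by coordinate.
encrypted = [[encrypt(pub, v) for v in u] for u in updates]

# The aggregator combines ciphertexts per coordinate without seeing any plaintext.
combined = [aggregate(pub, column) for column in zip(*encrypted)]

# Only the aggregate is decrypted.
totals = [decode_signed(pub, decrypt(pub, priv, c)) for c in combined]
print(totals)  # [6, 10, 0]
```

In this sketch the party combining ciphertexts never handles an individual plaintext update; only the coordinate-wise sum is decrypted. Who holds the decryption key, and how users prove that their encrypted updates passed a poisoning defense, are exactly the questions the sketch leaves open.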
