Polyphonic Music Composition with LSTM Neural Networks and Reinforcement Learning


Mar 03, 2019

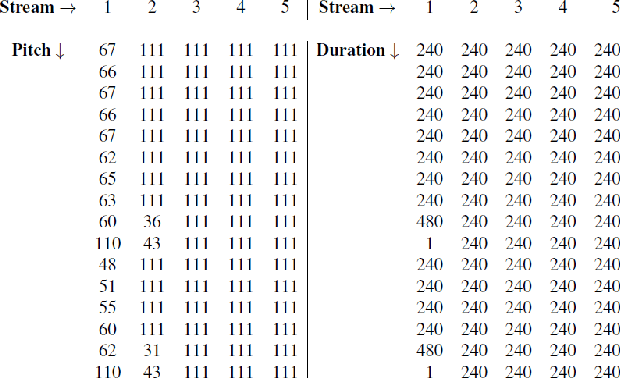

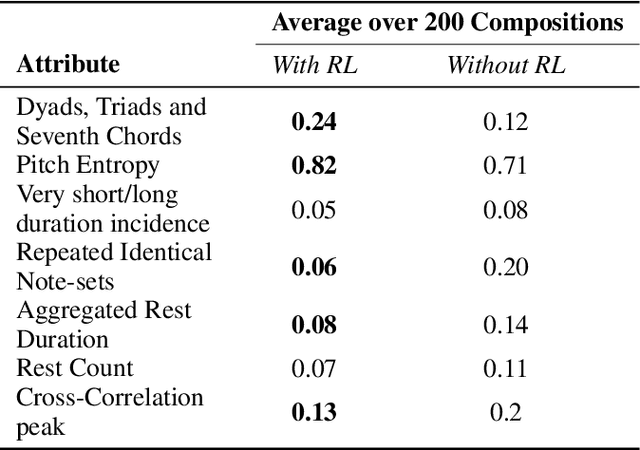

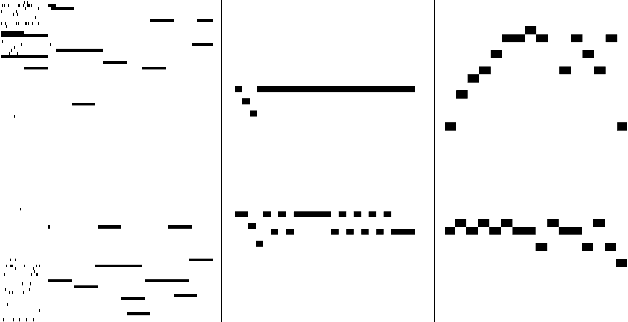

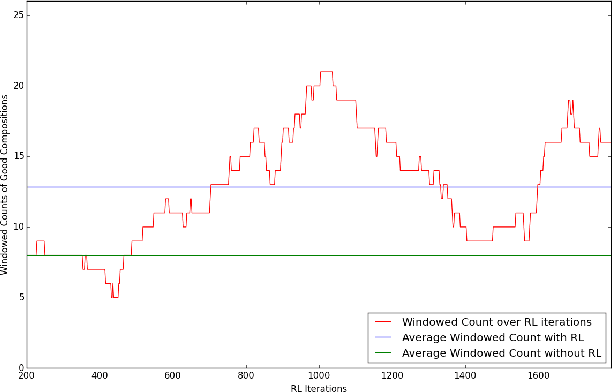

In the domain of algorithmic music composition, machine learning-driven systems eliminate the need to carefully hand-craft composition rules. In particular, the ability of recurrent neural networks (RNNs) to learn complex temporal patterns lends itself well to the musical domain, and a number of recent attempts at music composition using deep RNNs have shown promising results. These approaches generally first train neural networks to reproduce subsequences drawn from existing songs and then use the trained networks to compose music at either the audio-sample level or the note level. We designed a representation that divides polyphonic music into a small number of monophonic streams. This representation greatly reduces the complexity of the problem and eliminates an exponential number of likely poor compositions. On top of our LSTM neural network, which learned musical sequences in this representation, we built a reinforcement learning (RL) agent that learned to find combinations of songs whose joint dominance produced pleasant compositions. We present Amadeus, an algorithmic music composition system that composes music consisting of intricate melodies, basic chords, and even occasional contrapuntal sequences.
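The abstract does not say how notes are assigned to the monophonic streams. The sketch below is a minimal illustration under assumed rules, not the authors' method: each time step is a set of sounding MIDI pitches, the highest pitch goes to stream 0, the next highest to stream 1, and so on, and any stream with no note rests. The names `split_into_streams` and `count_configurations` are hypothetical. The second function shows why such a representation shrinks the search space: with 88 pitches, any of 2^88 pitch subsets could sound at a polyphonic step, whereas k streams that each hold one pitch or a rest allow only 89^k combinations.

```python
from typing import List, Optional, Sequence, Set, Tuple

REST = None  # marker meaning "this stream is silent at this time step"


def split_into_streams(
    frames: Sequence[Set[int]], num_streams: int
) -> List[List[Optional[int]]]:
    """Assign the pitches sounding at each time step to monophonic streams.

    Assumed rule (hypothetical): stream 0 takes the highest pitch, stream 1
    the next highest, and so on. Pitches beyond `num_streams` in a single
    step are dropped, and streams with no pitch at that step get REST.
    """
    streams: List[List[Optional[int]]] = [[] for _ in range(num_streams)]
    for frame in frames:
        ranked = sorted(frame, reverse=True)[:num_streams]
        for i in range(num_streams):
            streams[i].append(ranked[i] if i < len(ranked) else REST)
    return streams


def count_configurations(num_pitches: int, num_streams: int) -> Tuple[int, int]:
    """Count possible per-step configurations in each representation."""
    polyphonic = 2 ** num_pitches  # any subset of pitches may sound together
    monophonic = (num_pitches + 1) ** num_streams  # each stream: one pitch or a rest
    return polyphonic, monophonic


if __name__ == "__main__":
    # A C major triad, a single D, a silent step, then two Cs an octave apart.
    frames = [{60, 64, 67}, {62}, set(), {72, 60}]
    print(split_into_streams(frames, 3))
    # [[67, 62, None, 72], [64, None, None, 60], [60, None, None, None]]
    print(count_configurations(88, 4))
```

Ranking by pitch is only one plausible voice-assignment rule; a learned or continuity-based assignment would fit the same interface.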
