Pixel-Level Self-Paced Learning for Super-Resolution

Mar 09, 2020

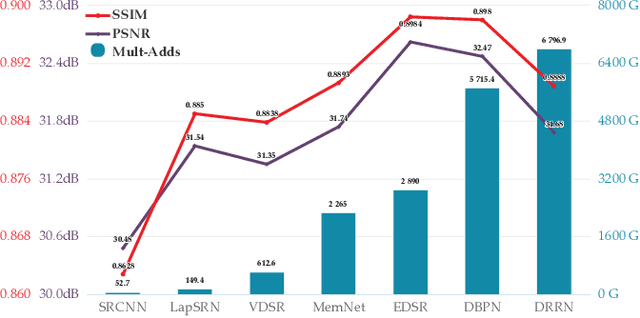

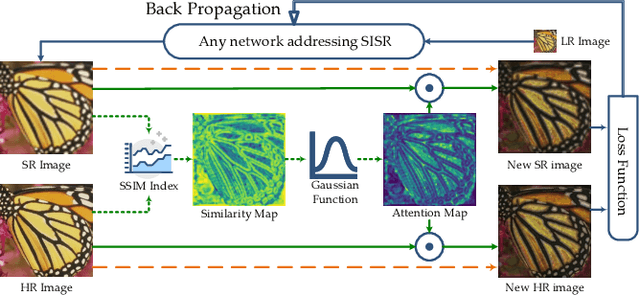

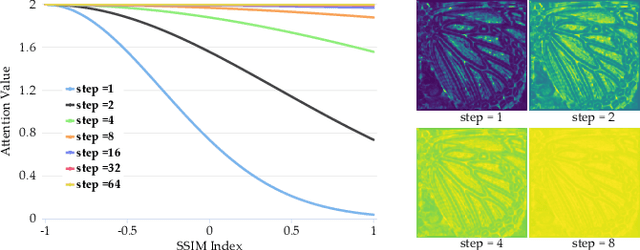

Recently, many deep networks have been proposed to improve the quality of predicted super-resolution (SR) images, owing to the widespread use of SR in image-based fields. However, as these networks grow deeper, they also take much longer to train and may lead the learner to local optima. To tackle this problem, this paper designs a training strategy named Pixel-level Self-Paced Learning (PSPL) to accelerate the convergence of single-image super-resolution (SISR) models. Imitating self-paced learning, PSPL assigns an attention weight to each pixel in the predicted SR image and its corresponding pixel in the ground truth, guiding the model toward a better region of the parameter space. Extensive experiments show that PSPL speeds up the training of SISR models and enables several existing models to achieve better results. The source code is available at https://github.com/Elin24/PSPL.
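To make the idea of per-pixel weighting concrete, here is a minimal sketch in Python with NumPy. It is not PSPL itself: it swaps in the classic hard-threshold self-paced rule, applied to individual pixels, where a pixel counts as "easy" (weight 1) when its absolute error is below a threshold `lam` and is otherwise excluded (weight 0). Raising `lam` over training gradually admits harder pixels. The function names `self_paced_pixel_weights` and `weighted_l1_loss`, the choice of L1 loss, and the threshold rule are all illustrative assumptions; the paper's actual weighting function is in the linked repository and may differ.

```python
import numpy as np


def self_paced_pixel_weights(sr: np.ndarray, hr: np.ndarray, lam: float) -> np.ndarray:
    """Hypothetical per-pixel weights using the classic hard self-paced rule.

    A pixel whose absolute error is below `lam` is "easy" and gets weight 1.0;
    every other pixel gets weight 0.0 at this stage of training.
    (Illustrative assumption, not the PSPL weighting from the paper.)
    """
    err = np.abs(sr - hr)
    return (err < lam).astype(np.float64)


def weighted_l1_loss(sr: np.ndarray, hr: np.ndarray, lam: float) -> float:
    """L1 error averaged over the pixels currently selected by the weights."""
    w = self_paced_pixel_weights(sr, hr, lam)
    if w.sum() == 0:
        # No pixel is easy enough yet; return zero rather than divide by zero.
        return 0.0
    return float((w * np.abs(sr - hr)).sum() / w.sum())


if __name__ == "__main__":
    # Toy 2x2 "images": predicted SR output and ground-truth HR target.
    sr = np.array([[0.10, 0.50], [0.90, 0.30]])
    hr = np.array([[0.12, 0.20], [0.88, 0.35]])
    # Growing the threshold admits harder pixels as training progresses.
    for lam in (0.1, 0.5):
        w = self_paced_pixel_weights(sr, hr, lam)
        print(lam, int(w.sum()), round(weighted_l1_loss(sr, hr, lam), 4))
    # prints: 0.1 3 0.03
    #         0.5 4 0.0975
```

At `lam=0.1` the pixel with error 0.30 is left out of the loss; at `lam=0.5` every pixel contributes. In a real training loop (for example in PyTorch), such weights would typically be computed from a detached copy of the prediction so that they act as constants during the model update rather than receiving gradients.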
