Phase Transitions in Image Denoising via Sparsely Coding Convolutional Neural Networks


Oct 26, 2017

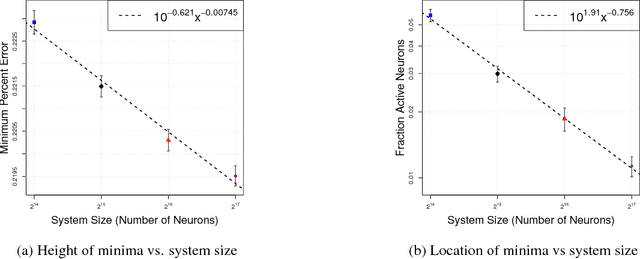

Neural networks are analogous in many ways to spin glasses, systems known for their rich dynamics and equally complex phase diagrams. We apply well-known techniques from the study of spin glasses to a convolutional sparse-coding neural network and observe power-law finite-size scaling in the sparsity and reconstruction error as the network denoises 32$\times$32 RGB CIFAR-10 images. This finite-size scaling indicates a continuous phase transition at a critical value of the sparsity. Using the power-law scaling relations inherent to finite-size scaling, we can determine the optimal sparsity for any network size by tuning the system to the critical point, and thereby operate the system at the minimum denoising error.
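As a rough illustration of how finite-size scaling lets one extrapolate an operating point to other network sizes, the sketch below assumes the standard finite-size shift form for the pseudo-critical sparsity, $s_c(N) = s_c(\infty) + a N^{-x}$, where $N$ is some network-size parameter. The abstract does not state the exact scaling form, the size variable, or the exponents, so all of these are assumptions. The function names `fit_critical_shift` and `predict_critical` are hypothetical, and the data are synthetic, not results from the paper.

```python
import numpy as np


def fit_critical_shift(sizes, crit_points, exponents=np.linspace(0.05, 3.0, 60)):
    """Fit the ASSUMED form crit(N) = c_inf + a * N**(-x).

    The exponent x is found by grid search; for each candidate x the
    model is linear in (c_inf, a), so ordinary least squares suffices.
    """
    sizes = np.asarray(sizes, dtype=float)
    crit_points = np.asarray(crit_points, dtype=float)
    best = None
    for x in exponents:
        # Design matrix columns: constant term and the finite-size correction.
        design = np.column_stack([np.ones_like(sizes), sizes ** (-x)])
        coef, *_ = np.linalg.lstsq(design, crit_points, rcond=None)
        resid = crit_points - design @ coef
        sse = float(resid @ resid)
        if best is None or sse < best[0]:
            best = (sse, coef[0], coef[1], x)
    _, c_inf, a, x = best
    return float(c_inf), float(a), float(x)


def predict_critical(size, c_inf, a, x):
    """Extrapolate the (assumed) critical sparsity to a new network size."""
    return c_inf + a * float(size) ** (-x)


# Synthetic, noise-free example data (hypothetical, for illustration only):
# true c_inf = 0.1, a = 0.5, x = 0.5.
sizes = [64, 128, 256, 512, 1024]
crit = [0.1 + 0.5 * n ** -0.5 for n in sizes]

c_inf, a, x = fit_critical_shift(sizes, crit)
print(c_inf, a, x)
print(predict_critical(4096, c_inf, a, x))
```

Grid-searching the exponent keeps each fit linear in $s_c(\infty)$ and $a$, so only NumPy is needed. With noisy measurements, a nonlinear least-squares fit with error estimates on the exponent would be more appropriate.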
