PGX: A Multi-level GNN Explanation Framework Based on Separate Knowledge Distillation Processes

Aug 05, 2022

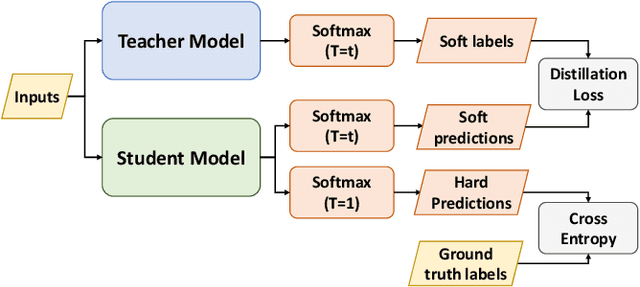

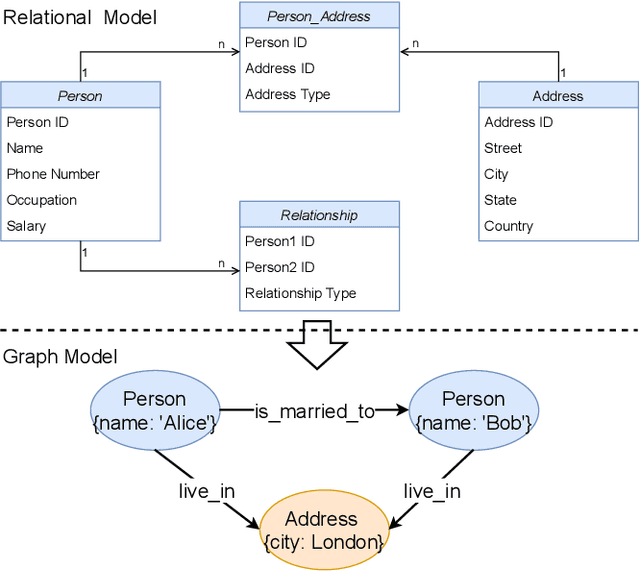

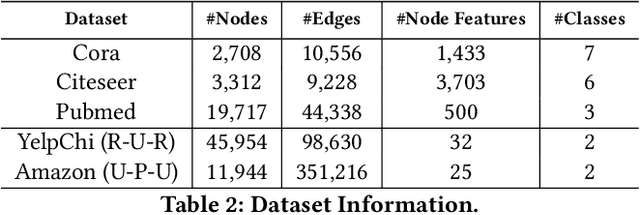

Graph Neural Networks (GNNs) are widely adopted in advanced AI systems because of their ability to learn representations from graph data. Although explaining GNNs is crucial for increasing user trust in these systems, it is challenging because of the complexity of GNN execution. Many recent works address some of the issues in GNN explanation, but they either generalize poorly or become computationally expensive on very large graphs. To address these challenges, we propose a multi-level GNN explanation framework based on the observation that a GNN performs a multimodal learning process over multiple components of graph data. The original problem is simplified by breaking it into multiple sub-problems organized as a hierarchy. The top-level explanation specifies the contribution of each component to the model's execution and predictions, while the finer-grained levels focus on feature attribution and graph structure attribution analysis based on knowledge distillation. Student models are trained separately, each responsible for capturing a different behavior of the teacher, and are later used to interpret a particular component. In addition, the framework supports personalized explanations, since it can generate different results based on user preferences. Finally, extensive experiments demonstrate the effectiveness and fidelity of the proposed approach.
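The abstract does not state which distillation objective the student models are trained with. As a rough illustration only, the sketch below shows the generic soft-label distillation loss, the KL divergence between temperature-softened teacher and student distributions. The function names, the `temperature` parameter, and the example logits are assumptions made for illustration and are not taken from the paper.

```python
import math


def log_softmax(logits, temperature=1.0):
    """Numerically stable log-softmax of temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    log_sum_exp = m + math.log(sum(math.exp(s - m) for s in scaled))
    return [s - log_sum_exp for s in scaled]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened class distributions.

    Generic knowledge distillation objective (an assumption here),
    not necessarily the loss used by PGX.
    """
    log_p = log_softmax(teacher_logits, temperature)  # teacher
    log_q = log_softmax(student_logits, temperature)  # student
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))


# Hypothetical logits for one node: the loss shrinks as the student
# moves closer to the teacher's prediction.
teacher = [2.0, 0.5, -1.0]
far_student = [-1.0, 0.5, 2.0]
near_student = [1.8, 0.6, -0.9]
print(distillation_loss(teacher, far_student))
print(distillation_loss(teacher, near_student))
```

In a setup like the one the abstract describes, one such loss would be minimized per student, for example one student restricted to node features and another to graph structure, so that each student mimics a different aspect of the teacher.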
