Permutohedral-GCN: Graph Convolutional Networks with Global Attention

Mar 02, 2020

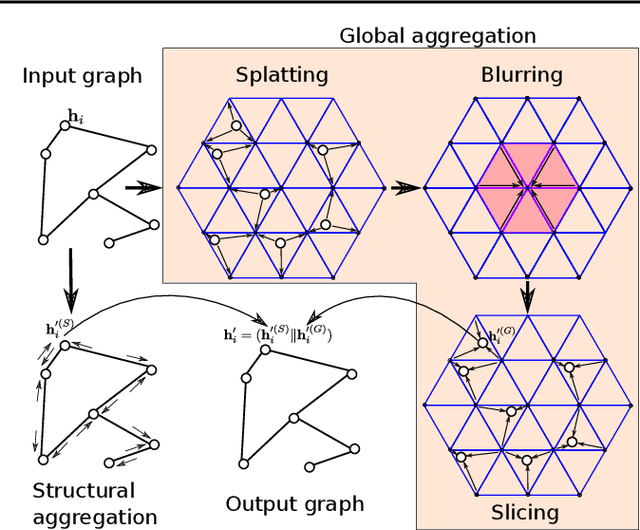

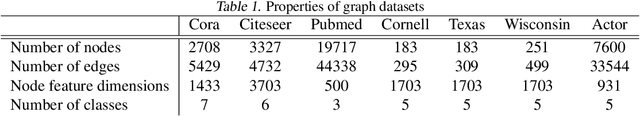

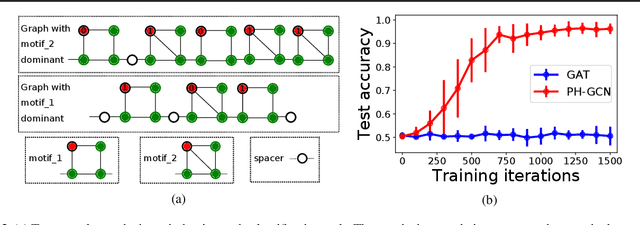

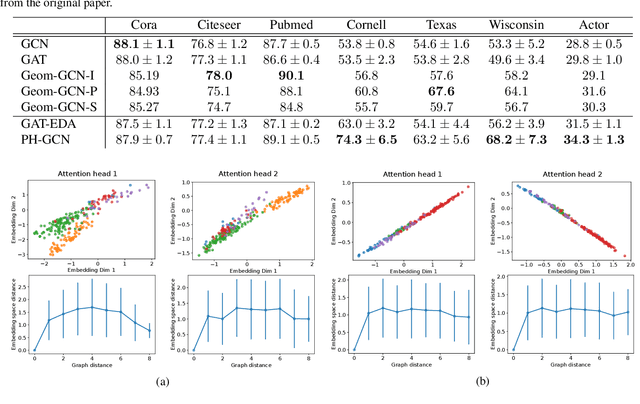

Graph convolutional networks (GCNs) update a node's feature vector by aggregating features from its neighbors in the graph. This ignores potentially useful contributions from distant nodes. Identifying such useful distant contributions is challenging because of scalability issues (too many nodes can potentially contribute) and oversmoothing (aggregating features from too many nodes risks swamping relevant information and may leave nodes with different labels but indistinguishable features). We introduce a global attention mechanism in which a node can selectively attend to, and aggregate features from, any other node in the graph. The attention coefficients depend on the Euclidean distance between learnable node embeddings, and we show that the resulting attention-based global aggregation scheme is analogous to high-dimensional Gaussian filtering. This makes it possible to use efficient approximate Gaussian filtering techniques to implement the scheme. By employing an approximate filtering method based on the permutohedral lattice, the time complexity of our proposed global aggregation scheme grows only linearly with the number of nodes. The resulting GCNs, which we term permutohedral-GCNs, are differentiable and trained end-to-end, and they achieve state-of-the-art performance on several node classification benchmarks.
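To make the link between distance-based attention and Gaussian filtering concrete, here is a minimal sketch of the exact, brute-force version of such an aggregation. It is not the authors' implementation: the function name `gaussian_attention_aggregate`, the unit kernel bandwidth, the inclusion of the node itself in the sum, and the normalization by the sum of weights are all assumptions made for illustration. The embeddings are fixed here, whereas in the paper they are learnable. This version costs O(N²) in the number of nodes, which is the cost the permutohedral lattice approximation is meant to avoid.

```python
import math


def gaussian_attention_aggregate(embeddings, features):
    """Exact O(N^2) global aggregation with Gaussian attention weights.

    embeddings: list of N embedding vectors (fixed here for illustration).
    features:   list of N feature vectors to aggregate.
    Returns a list of N aggregated feature vectors.
    """
    n = len(embeddings)
    dim = len(features[0])
    aggregated = []
    for i in range(n):
        # Unnormalized attention from node i to every node j:
        # exp(-||e_i - e_j||^2 / 2). Unit bandwidth is an assumption.
        weights = [
            math.exp(-0.5 * sum((a - b) ** 2
                                for a, b in zip(embeddings[i], embeddings[j])))
            for j in range(n)
        ]
        total = sum(weights)  # always >= 1 because the j == i term is exp(0)
        # Normalized weighted average of all node features.
        aggregated.append([
            sum(weights[j] * features[j][d] for j in range(n)) / total
            for d in range(dim)
        ])
    return aggregated


# Two nodes close together in embedding space and one far away.
embeddings = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0]]
features = [[1.0], [1.0], [0.0]]
result = gaussian_attention_aggregate(embeddings, features)
```

In this toy input, the first two nodes attend almost entirely to each other, while the distant third node receives negligible weight from them and keeps a feature close to its own. Each output row is a Gaussian-weighted average over all nodes, which has the same form as Gaussian filtering of the feature signal at positions given by the embeddings.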
