People infer recursive visual concepts from just a few examples


Apr 17, 2019

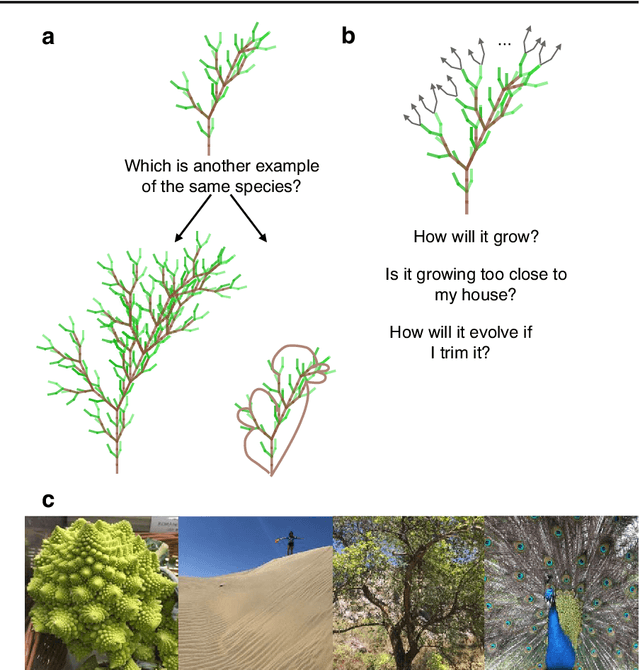

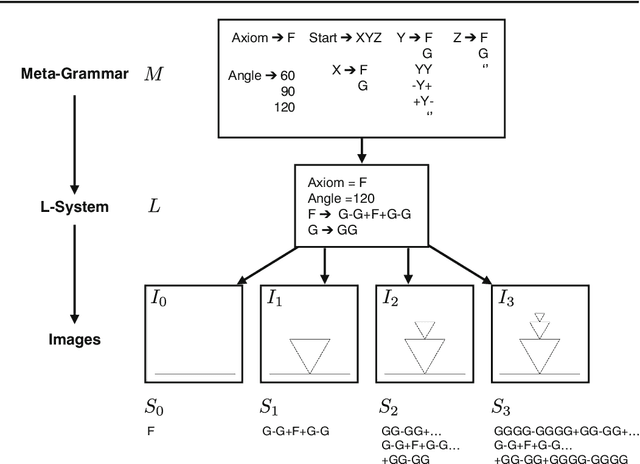

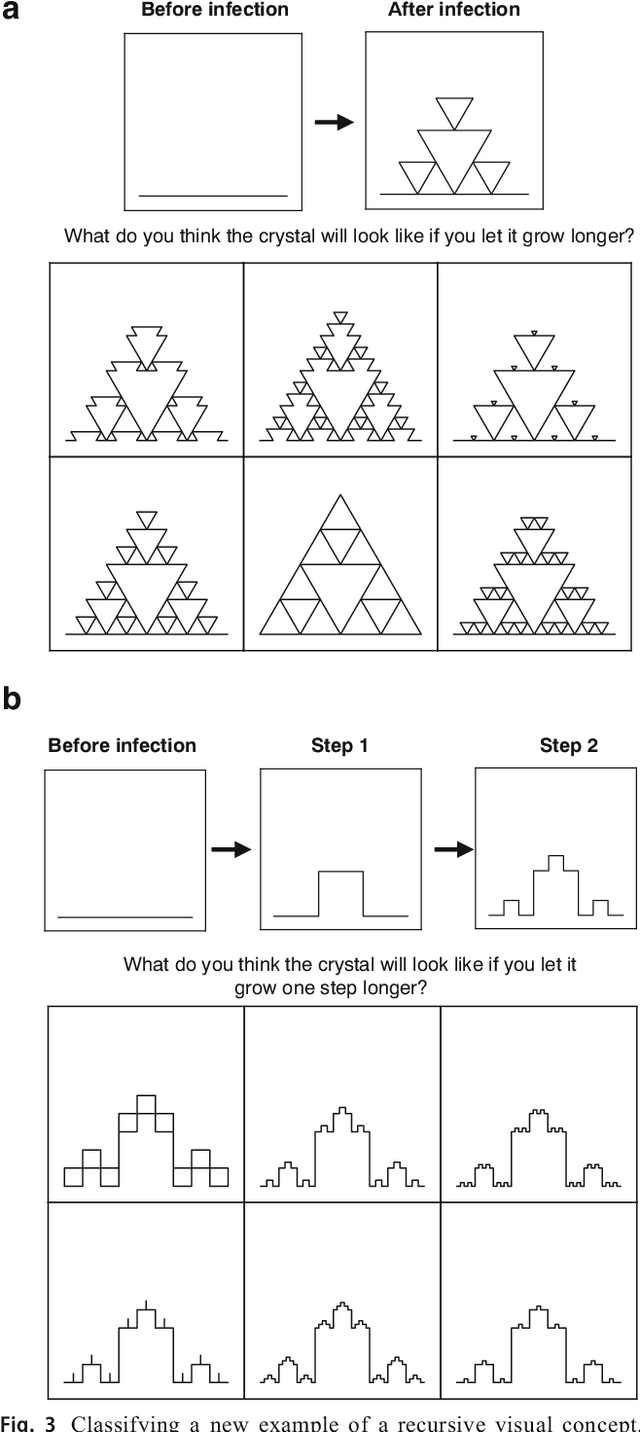

Machine learning has made major advances in categorizing objects in images, yet the best algorithms miss important aspects of how people learn and think about categories. People can learn richer concepts from fewer examples, including causal models that explain how members of a category are formed. Here, we explore the limits of this human ability to infer causal "programs" -- latent generating processes with nontrivial algorithmic properties -- from one, two, or three visual examples. People were asked to extrapolate the programs in several ways, for both classifying and generating new examples. As a theory of these inductive abilities, we present a Bayesian program learning model that searches the space of programs for the best explanation of the observations. Although variable, people's judgments are broadly consistent with the model and inconsistent with several alternatives, including a pre-trained deep neural network for object recognition, indicating that people can learn and reason with rich algorithmic abstractions from sparse input data.
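To make the idea of searching a space of programs for the best explanation more concrete, here is a minimal sketch of Bayesian program induction on a toy problem. It is not the paper's model or its program space: the string-rewriting grammar, the `F+-` alphabet, the length-based prior, the all-or-nothing likelihood, and the names `expand`, `explains`, and `posterior` are all assumptions made for illustration. Each hypothesis is a rewrite rule applied recursively to a starting symbol, and an example counts as explained if the rule produces it at some recursion depth.

```python
from itertools import product

ALPHABET = "F+-"  # toy symbol set, assumed for this illustration only


def expand(rule: str, depth: int, axiom: str = "F") -> str:
    """Apply the rewrite F -> rule to the axiom `depth` times."""
    s = axiom
    for _ in range(depth):
        s = s.replace("F", rule)  # rewrites every F in the current string
    return s


def explains(rule: str, example: str, max_depth: int = 3) -> bool:
    """True if the rule generates the example at some depth in 1..max_depth."""
    return any(expand(rule, d) == example for d in range(1, max_depth + 1))


def candidate_rules(max_len: int):
    """Enumerate every rule string of length 1..max_len over ALPHABET."""
    for n in range(1, max_len + 1):
        for symbols in product(ALPHABET, repeat=n):
            yield "".join(symbols)


def posterior(examples: list[str], max_len: int = 7) -> dict[str, float]:
    """Posterior over rules: prior favors short rules, likelihood is 0 or 1."""
    scores = {}
    for rule in candidate_rules(max_len):
        if all(explains(rule, ex) for ex in examples):
            # Assumed prior: each extra symbol costs a factor of 1/len(ALPHABET).
            scores[rule] = len(ALPHABET) ** -len(rule)
    total = sum(scores.values())
    return {rule: p / total for rule, p in scores.items()}


if __name__ == "__main__":
    post = posterior(["F+F+F+F"])
    best = max(post, key=post.get)
    print(best, round(post[best], 4))
    # Extrapolate the inferred program one recursion level deeper.
    print(expand(best, 3))
```

In this toy setup, a single example such as `F+F+F+F` is consistent with both a short recursive rule and a longer rule that simply spells the example out, and the length-based prior favors the recursive one. The inferred rule can then be run to a greater depth to generate a new example, loosely mirroring the generation task described above.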
