Parallel Intent and Slot Prediction using MLB Fusion


Mar 20, 2020

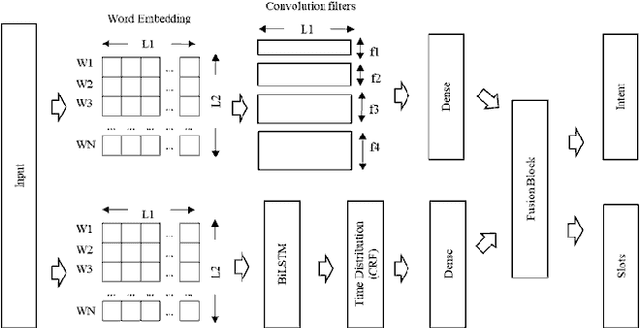

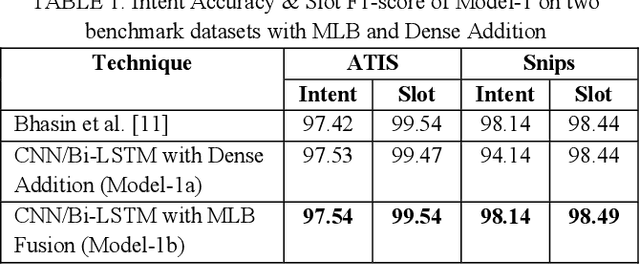

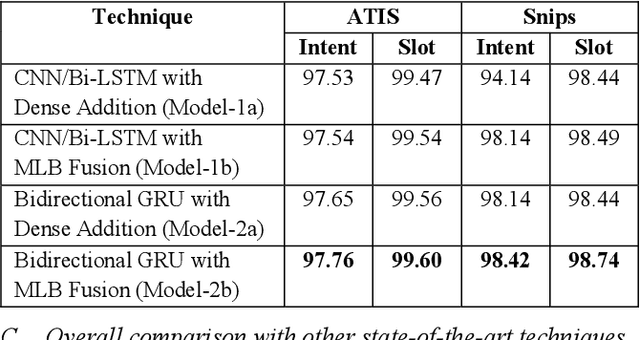

Intent and slot identification are two important tasks in Spoken Language Understanding (SLU). For a natural language utterance, the two tasks are highly correlated. Much prior work has addressed each of them using recurrent neural networks (RNNs), convolutional neural networks (CNNs), and attention-based models. Most past work used two separate models for intent and slot prediction; some used sequence-to-sequence models in which slots are predicted after the utterance-level intent has been evaluated. In this work, we propose a parallel intent and slot prediction technique in which separate bidirectional Gated Recurrent Units (GRUs) are used for each task. We posit that MLB (Multimodal Low-rank Bilinear Attention Network) fusion improves the performance of intent and slot learning. To the best of our knowledge, this is the first attempt at using such a technique on text-based problems. Our proposed methods also outperform the existing state-of-the-art results for both intent and slot prediction on two benchmark datasets.
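To make the fusion step concrete, here is a minimal sketch of low-rank bilinear fusion in its commonly cited form, f = Pᵀ(tanh(Uᵀx) ∘ tanh(Vᵀy)), written in plain Python. The abstract does not say how the two encoders' outputs are wired into the fusion, so everything specific here is an assumption for illustration: `intent_vec` stands in for an utterance-level summary from the intent encoder, `slot_states` stands in for per-token hidden states from the slot encoder, and the function name `mlb_fuse`, the dimensions, and the random weights are all hypothetical. Bias terms and training are omitted.

```python
import math
import random


def matvec(W, x):
    """Multiply a matrix (stored as a list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]


def random_matrix(rows, cols, rng):
    """Small random weights; a trained model would learn these instead."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def mlb_fuse(x, y, U_t, V_t, P_t):
    """Low-rank bilinear fusion: P^T (tanh(U^T x) * tanh(V^T y)).

    U_t, V_t and P_t are the already-transposed projection matrices,
    so each one is applied with a plain matrix-vector product.
    """
    hx = [math.tanh(v) for v in matvec(U_t, x)]   # project x into the rank-d space
    hy = [math.tanh(v) for v in matvec(V_t, y)]   # project y into the same space
    joint = [a * b for a, b in zip(hx, hy)]       # element-wise (Hadamard) product
    return matvec(P_t, joint)                     # project to the output size


# Hypothetical sizes: none of these come from the paper.
rng = random.Random(0)
intent_dim, slot_dim, rank, out_dim = 6, 4, 3, 5

U_t = random_matrix(rank, intent_dim, rng)
V_t = random_matrix(rank, slot_dim, rng)
P_t = random_matrix(out_dim, rank, rng)

# Stand-ins for encoder outputs (assumed wiring, see the note above).
intent_vec = [rng.uniform(-1, 1) for _ in range(intent_dim)]
slot_states = [[rng.uniform(-1, 1) for _ in range(slot_dim)] for _ in range(3)]

# Fuse the utterance-level vector with each token's state.
fused_per_token = [mlb_fuse(intent_vec, s, U_t, V_t, P_t) for s in slot_states]
```

The Hadamard product is what makes the interaction bilinear while keeping the parameter count low: instead of a full `intent_dim × slot_dim × out_dim` tensor, only three small projection matrices of rank `rank` are needed.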
