Optimizing Prediction Intervals by Tuning Random Forest via Meta-Validation

Paper and Code

Jan 22, 2018

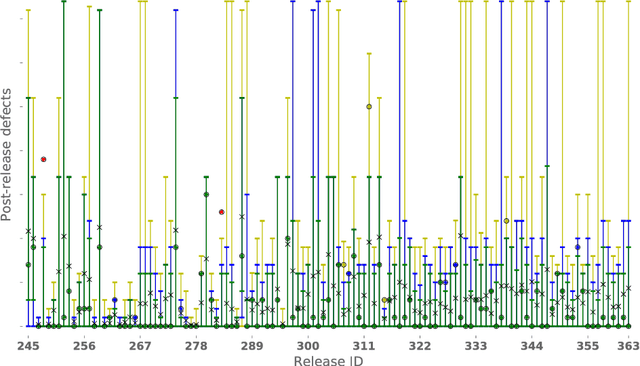

Recent studies have shown that tuning prediction models increases prediction accuracy and that Random Forest can be used to construct prediction intervals. However, to the best of our knowledge, no study has investigated the need to, and the manner in which one can, tune Random Forest for optimizing prediction intervals; this paper aims to fill this gap. We explore a tuning approach that combines an effectively exhaustive search with a validation technique on a single Random Forest parameter. This paper investigates which of eight validation techniques are beneficial for tuning, i.e., which automatically choose a Random Forest configuration that constructs prediction intervals that are reliable and narrower than those of the default configuration. Additionally, we present and validate three meta-validation techniques to determine which are beneficial, i.e., which automatically choose a beneficial validation technique. This study uses data from our industrial partner (Keymind Inc.) and the Tukutuku Research Project, related to post-release defect prediction and Web application effort estimation, respectively. Results from our study indicate that: i) the default configuration is frequently unreliable, ii) most of the validation techniques, including previously successfully adopted ones such as 50/50 holdout and bootstrap, are counterproductive in most of the cases, and iii) the 75/25 holdout meta-validation technique is always beneficial; i.e., it avoids the likely counterproductive effects of validation techniques.
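The tuning idea described in the abstract (search over the values of a single parameter, then keep the configuration whose validation intervals are reliable and narrowest) can be illustrated with a minimal sketch. This is not the authors' implementation. It assumes that "reliable" means the observed coverage on the validation split is at least the nominal confidence level, it uses an ordered holdout split, and `fit_predict` is a hypothetical callable standing in for training a Random Forest with a given parameter value and returning one `(lower, upper)` interval per validation row. The meta-validation step, which chooses among validation techniques, is not shown.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Interval = Tuple[float, float]   # (lower bound, upper bound)
Row = Tuple[float, float]        # (feature, target); toy single-feature rows


def coverage(intervals: Sequence[Interval], actuals: Sequence[float]) -> float:
    """Fraction of actual values that fall inside their prediction interval."""
    hits = sum(1 for (lo, hi), y in zip(intervals, actuals) if lo <= y <= hi)
    return hits / len(actuals)


def mean_width(intervals: Sequence[Interval]) -> float:
    """Average distance between the upper and lower bounds."""
    return sum(hi - lo for lo, hi in intervals) / len(intervals)


def holdout_split(rows: Sequence[Row], train_fraction: float) -> Tuple[List[Row], List[Row]]:
    """Ordered holdout: the first rows train, the remaining rows validate."""
    cut = int(len(rows) * train_fraction)
    return list(rows[:cut]), list(rows[cut:])


def tune_by_holdout(
    rows: Sequence[Row],
    candidates: Sequence[float],
    fit_predict: Callable[[float, List[Row], List[float]], List[Interval]],
    nominal: float = 0.90,
    train_fraction: float = 0.75,
) -> Optional[float]:
    """Return the candidate value with the narrowest reliable intervals.

    A candidate counts as reliable here (an assumption of this sketch) when
    its validation coverage is at least `nominal`. Returns None if no
    candidate is reliable.
    """
    train, valid = holdout_split(rows, train_fraction)
    valid_inputs = [x for x, _ in valid]
    actuals = [y for _, y in valid]

    best_value: Optional[float] = None
    best_width = float("inf")
    for value in candidates:
        # Hypothetical step: train with this parameter value, predict intervals.
        intervals = fit_predict(value, train, valid_inputs)
        if coverage(intervals, actuals) < nominal:
            continue  # unreliable configuration, skip it
        width = mean_width(intervals)
        if width < best_width:
            best_value, best_width = value, width
    return best_value
```

Passing a different `train_fraction` (for example 0.5) changes which holdout proportion the sketch uses. Returning `None` when no candidate is reliable leaves the fallback decision, such as keeping the default configuration, to the caller.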
