Optimizing Automatic Summarization of Long Clinical Records Using Dynamic Context Extension: Testing and Evaluation of the NBCE Method


Nov 14, 2024

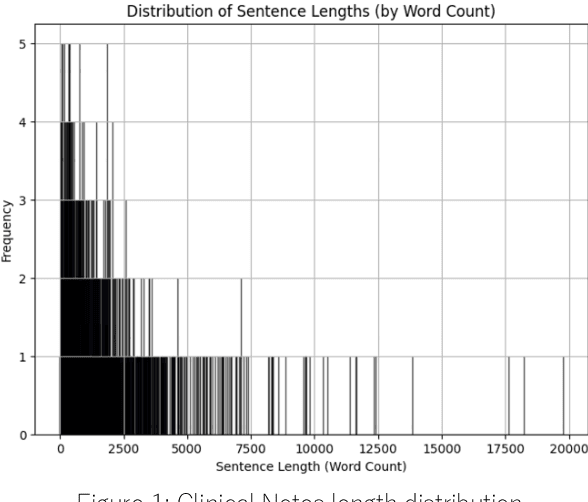

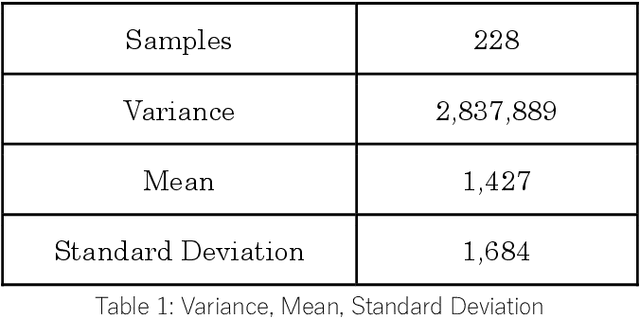

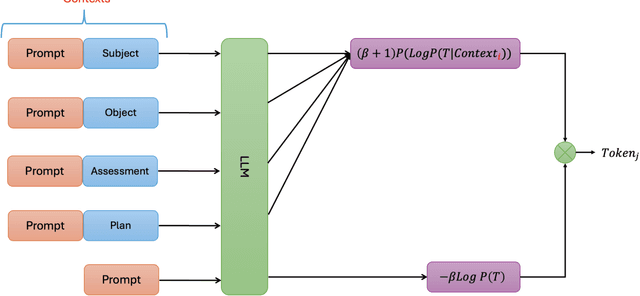

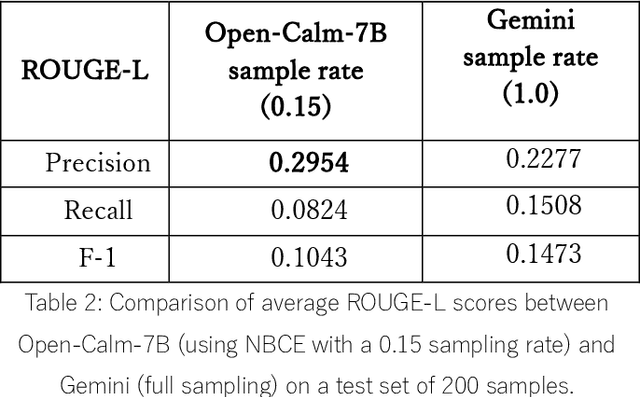

Summarizing patient clinical notes is vital for reducing the documentation burden on medical staff, who currently struggle with manual summarization. We propose an automatic method based on large language models (LLMs). However, long inputs cause LLMs to lose context, which degrades output quality, especially in smaller models. We used a 7B model, open-calm-7b, enhanced with Naive Bayes-based Context Extension (NBCE) and a redesigned decoding mechanism that references one sentence at a time, keeping each input within the 2048-token context window. On 200 samples, our improved model achieved near parity with Google's Gemini (over 175B parameters) on ROUGE-L, indicating strong performance with fewer resources and improving the feasibility of automated electronic medical record (EMR) summarization.
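To make the context-extension idea concrete, the following is a minimal sketch of how NBCE-style decoding can merge next-token predictions from several context chunks that are each encoded separately within the window. It follows the commonly described NBCE formulation (pick the lowest-entropy chunk, then correct it with a context-free prediction); it is not the authors' redesigned decoding mechanism, whose details the abstract does not give. The function names, the toy logit vectors, and the `beta=0.25` default are assumptions for illustration only.

```python
import math


def log_softmax(logits):
    """Convert raw logits to log-probabilities in a numerically stable way."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def entropy(log_probs):
    """Shannon entropy (in nats) of a distribution given as log-probabilities."""
    return -sum(math.exp(lp) * lp for lp in log_probs)


def nbce_merge(context_logits, uncond_logits, beta=0.25):
    """Merge next-token predictions from independently encoded context chunks.

    context_logits: one logit vector per chunk (chunk + prompt fits the window)
    uncond_logits:  logit vector from the prompt alone, with no context chunk
    beta:           weight of the context-free correction (assumed default)
    Returns the index of the selected chunk and the merged log-probabilities.
    """
    cond = [log_softmax(l) for l in context_logits]
    prior = log_softmax(uncond_logits)
    # Pooling step: keep the chunk whose prediction is most confident,
    # i.e. the one with the lowest entropy.
    best = min(range(len(cond)), key=lambda k: entropy(cond[k]))
    # Naive Bayes combination: boost the selected chunk, subtract the prior.
    merged = [(1 + beta) * c - beta * p for c, p in zip(cond[best], prior)]
    return best, log_softmax(merged)


# Toy example with a 3-token vocabulary (values are made up).
chunks = [
    [0.1, 0.2, 0.0],  # chunk 0: nearly uniform, uninformative
    [4.0, 0.0, 0.0],  # chunk 1: strongly prefers token 0
]
prompt_only = [0.0, 0.0, 0.0]
best, merged = nbce_merge(chunks, prompt_only)
next_token = max(range(len(merged)), key=lambda i: merged[i])
```

In a real pipeline the logit vectors would come from one forward pass per chunk at every decoding step, so the cost grows with the number of chunks while each individual input stays within the 2048-token window.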
