Optimal Approximation Complexity of High-Dimensional Functions with Neural Networks

Jan 30, 2023

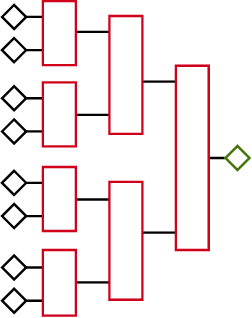

We investigate neural networks that use both ReLU and $x^2$ as activation functions. Building on previous results, we show that such networks of constant depth can approximate both analytic functions and functions in Sobolev spaces to arbitrary accuracy, with optimal-order approximation rates among all nonlinear approximators, including standard ReLU networks. We then show how to leverage low local dimensionality in some contexts to overcome the curse of dimensionality, obtaining approximation rates that are optimal for unknown lower-dimensional subspaces.
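As a rough illustration of why adding an $x^2$ activation can help, the sketch below uses the standard polarization identity $xy = \tfrac{1}{4}\left((x+y)^2 - (x-y)^2\right)$ to compute a product exactly with two squaring units and a linear readout. This is a well-known identity and not necessarily the construction used in the paper; the function names (`relu`, `square`, `product_via_squares`, `abs_via_relu`) are hypothetical and chosen only for this example.

```python
def relu(z: float) -> float:
    """ReLU activation: max(0, z)."""
    return max(0.0, z)


def square(z: float) -> float:
    """The x^2 activation."""
    return z * z


def product_via_squares(x: float, y: float) -> float:
    """Exact product from one hidden layer of two x^2 units.

    Hidden units: (x + y)^2 and (x - y)^2.
    Linear readout: 0.25 * (first - second) = x * y.
    """
    return 0.25 * (square(x + y) - square(x - y))


def abs_via_relu(x: float) -> float:
    """|x| from two ReLU units, a typical piecewise-linear building block."""
    return relu(x) + relu(-x)


if __name__ == "__main__":
    print(product_via_squares(3.0, -2.0))
    print(abs_via_relu(-1.5))
```

A network with only ReLU units can approximate such a product only to finite accuracy at a fixed depth, whereas the squaring unit represents it exactly, which gives some intuition for how mixed-activation networks might reach a given accuracy at constant depth.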

* 10 pages, 1 figure