Online Pricing with Offline Data: Phase Transition and Inverse Square Law


Nov 06, 2019

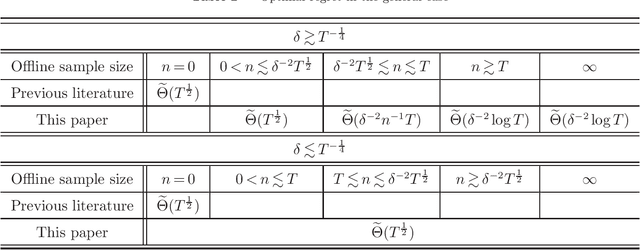

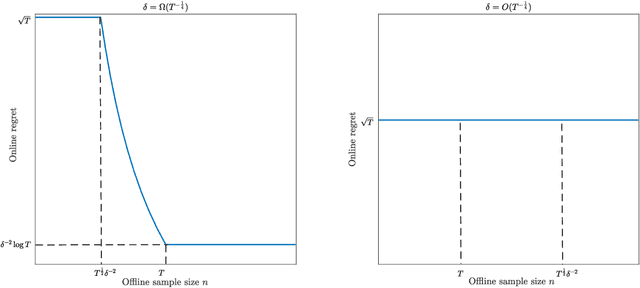

This paper investigates the impact of pre-existing offline data on online learning in the context of dynamic pricing. We study a single-product dynamic pricing problem over a selling horizon of $T$ periods. The demand in each period is determined by the price of the product according to a linear demand model with unknown parameters. We assume that the seller has some pre-existing offline data before the start of the selling horizon. The offline data set contains $n$ samples, each of which is an input-output pair consisting of a historical price and an associated demand observation. The seller wants to use both the pre-existing offline data and the sequential online data to minimize the regret of the online learning process. We characterize the joint effect of the size, location and dispersion of the offline data on the optimal regret of the online learning process. Specifically, the size, location and dispersion of the offline data are measured by the number of historical samples $n$, the absolute difference $\delta$ between the average historical price and the optimal price, and the standard deviation $\sigma$ of the historical prices, respectively. We show that the best achievable regret is $\tilde\Theta\left(\sqrt{T}\wedge\frac{T}{n\sigma^2+(n\wedge T)\delta^2}\right)$, where $a\wedge b$ denotes $\min(a,b)$. We design a learning algorithm based on the "optimism in the face of uncertainty" principle, whose regret is optimal up to a logarithmic factor. Our results reveal surprising transformations of the optimal regret rate with respect to the size of the offline data, which we refer to as phase transitions. In addition, our results demonstrate that the location and dispersion of the offline data set also have an intrinsic effect on the optimal regret, and we quantify this effect via the inverse-square law.
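To make the regret expression concrete, the following minimal Python sketch evaluates the quantity inside the $\tilde\Theta(\cdot)$ for given values of $T$, $n$, $\sigma$ and $\delta$. It is an illustration only: constants and logarithmic factors are dropped, the function name `regret_rate` is hypothetical, and the handling of the case with no informative offline data (zero denominator) is an assumption made here so that the function returns the $\sqrt{T}$ rate.

```python
import math


def regret_rate(T, n, sigma, delta):
    """Order of the optimal regret from the abstract, ignoring constants
    and logarithmic factors: min(sqrt(T), T / (n*sigma^2 + min(n, T)*delta^2)).

    `regret_rate` is a hypothetical helper name, not taken from the paper.
    """
    # Denominator of the second term in the regret expression.
    denom = n * sigma**2 + min(n, T) * delta**2
    if denom == 0:
        # Assumption: with no informative offline data, only the
        # sqrt(T) term applies.
        return math.sqrt(T)
    return min(math.sqrt(T), T / denom)


if __name__ == "__main__":
    T = 10_000
    # No offline data: the sqrt(T) rate.
    print(regret_rate(T, n=0, sigma=1.0, delta=1.0))
    # Zero dispersion, n = T: doubling delta divides the rate by four.
    print(regret_rate(T, n=T, sigma=0.0, delta=1.0))
    print(regret_rate(T, n=T, sigma=0.0, delta=2.0))
```

In the second and third calls, $\sigma = 0$ and the second term of the minimum is the active one, so the rate scales as $1/\delta^2$, which is the inverse-square dependence on the location of the offline data that the abstract describes.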
