Online Domain Adaptation for Multi-Object Tracking

Paper and Code

Aug 04, 2015

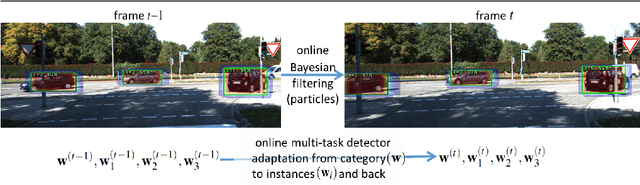

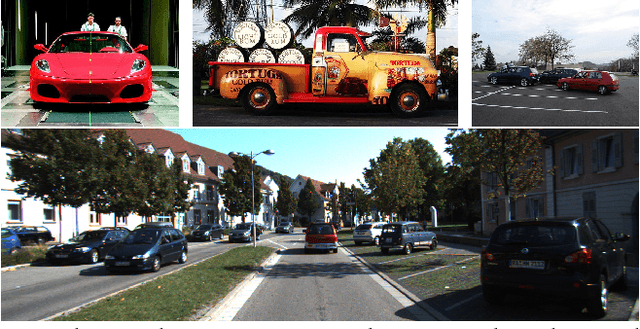

Automatically detecting, labeling, and tracking objects in videos depends first and foremost on accurate category-level object detectors. These might, however, not always be available in practice, as acquiring high-quality large scale labeled training datasets is either too costly or impractical for all possible real-world application scenarios. A scalable solution consists in re-using object detectors pre-trained on generic datasets. This work is the first to investigate the problem of on-line domain adaptation of object detectors for causal multi-object tracking (MOT). We propose to alleviate the dataset bias by adapting detectors from category to instances, and back: (i) we jointly learn all target models by adapting them from the pre-trained one, and (ii) we also adapt the pre-trained model on-line. We introduce an on-line multi-task learning algorithm to efficiently share parameters and reduce drift, while gradually improving recall. Our approach is applicable to any linear object detector, and we evaluate both cheap "mini-Fisher Vectors" and expensive "off-the-shelf" ConvNet features. We quantitatively measure the benefit of our domain adaptation strategy on the KITTI tracking benchmark and on a new dataset (PASCAL-to-KITTI) we introduce to study the domain mismatch problem in MOT.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge