On the role of benchmarking data sets and simulations in method comparison studies

Aug 02, 2022

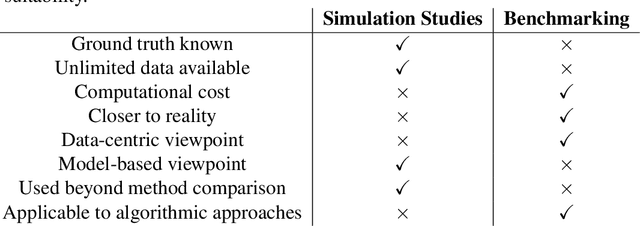

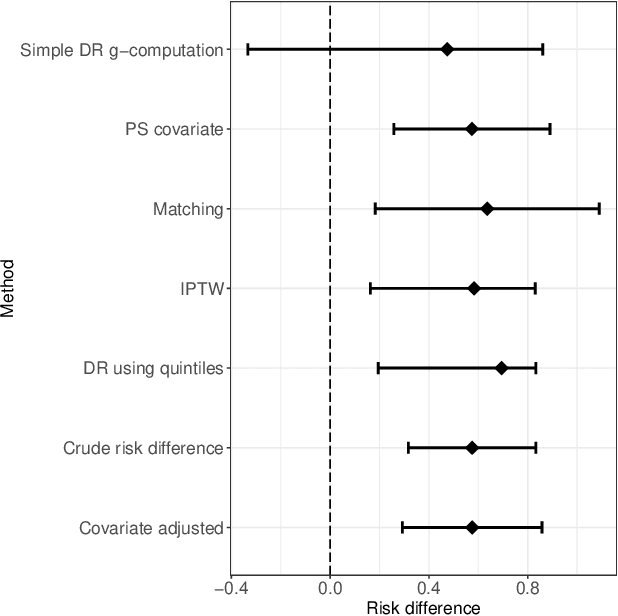

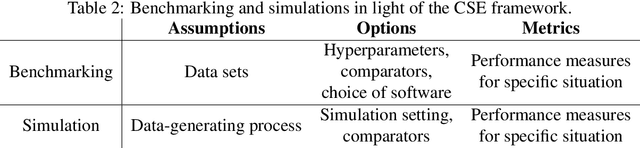

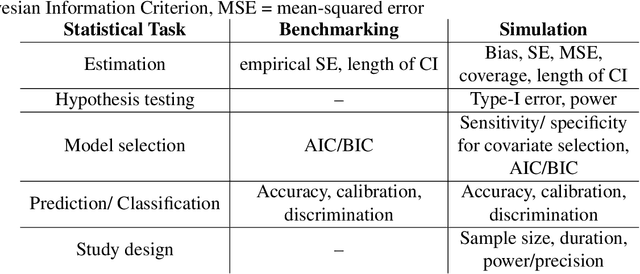

Method comparisons are essential for providing recommendations and guidance to applied researchers, who often have to choose from a plethora of available approaches. While many comparisons exist in the literature, these are often not neutral but favour a novel method. Apart from the choice of design and proper reporting of the findings, method comparison studies differ in the data they are based on. Most manuscripts on statistical methodology rely on simulation studies and provide a single real-world data set as an example to motivate and illustrate the methodology investigated. In supervised learning, in contrast, methods are often evaluated using so-called benchmarking data sets, i.e. real-world data that serve as a gold standard in the community, whereas simulation studies are much less common. The aim of this paper is to investigate differences and similarities between these approaches, to discuss their advantages and disadvantages, and ultimately to develop new approaches to method evaluation that combine the best of both worlds. To this end, we borrow ideas from different contexts such as mixed methods research and Clinical Scenario Evaluation.