On the Optimality of Trees Generated by ID3


Jul 11, 2019

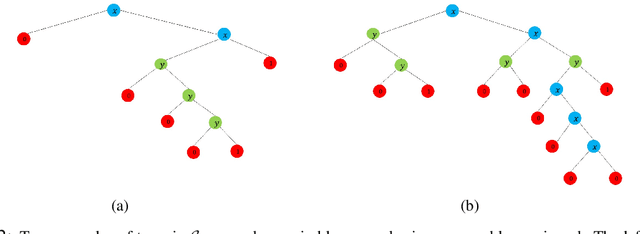

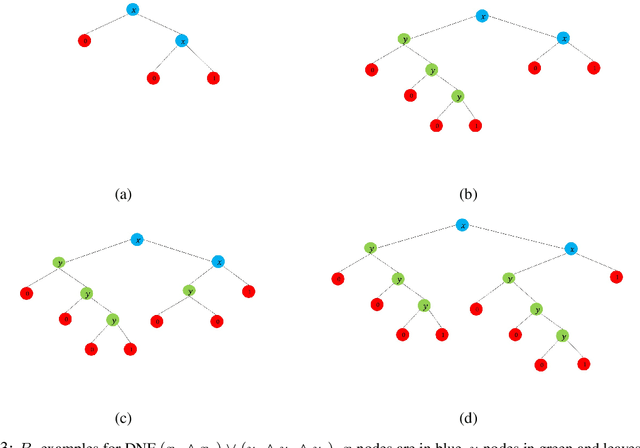

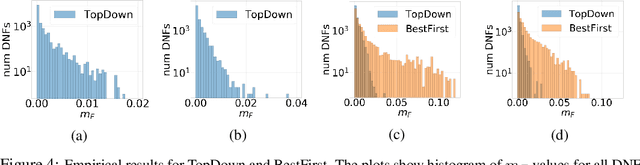

Since its inception in the 1980s, ID3 has become one of the most successful and widely used algorithms for learning decision trees. However, its theoretical properties remain poorly understood. In this work, we analyze the heuristic of growing a decision tree with ID3 for a limited number of iterations $t$, assuming that nodes are split using exact information gain and probability computations. In several settings, we provide theoretical and empirical evidence that the TopDown variant of ID3, introduced by Kearns and Mansour (1996), produces trees with optimal or near-optimal test error among all trees with $t$ internal nodes. We prove optimality for learning conjunctions under product distributions and for learning read-once DNFs with 2 terms under the uniform distribution. Using efficient dynamic programming algorithms, we empirically show that TopDown generates trees that are near-optimal (within $\sim 1\%$ of the optimal test error) in a large number of settings for learning read-once DNFs under product distributions.
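To make the setting concrete, here is a minimal Python sketch, assuming Boolean inputs, a target `f` given as a Python function, and exact probabilities obtained by enumerating all $2^n$ inputs under a product distribution with $\Pr[x_i = 1] = p_i$ (feasible only for small $n$). It assumes TopDown picks, at each of the $t$ iterations, the (leaf, variable) pair with the largest information gain weighted by the probability of reaching the leaf, and labels each leaf with its majority label. The companion `optimal_error` is a simple memoized search over partial assignments for the minimum error of any tree with at most $t$ internal nodes; it is an illustrative stand-in, not the dynamic programming algorithm from the paper. All function names here are hypothetical.

```python
import itertools
import math
from functools import lru_cache


def entropy(q):
    """Binary entropy (in bits) of a Bernoulli(q) label."""
    if q <= 0.0 or q >= 1.0:
        return 0.0
    return -q * math.log2(q) - (1 - q) * math.log2(1 - q)


def weighted_points(n, p):
    """All 2^n inputs paired with their probability under the product distribution p."""
    pts = []
    for x in itertools.product((0, 1), repeat=n):
        w = 1.0
        for xi, pi in zip(x, p):
            w *= pi if xi else 1 - pi
        pts.append((x, w))
    return pts


def leaf_stats(points, f):
    """Probability mass of a leaf and the mass of its positive examples."""
    mass = sum(w for _, w in points)
    pos = sum(w for x, w in points if f(x))
    return mass, pos


def top_down(f, n, p, t):
    """Grow a tree for up to t iterations; return (test error, internal nodes)."""
    # Each leaf holds the weighted inputs reaching it and the variables used on its path.
    leaves = [(weighted_points(n, p), frozenset())]
    for _ in range(t):
        best = None  # (weighted gain, leaf index, variable)
        for li, (pts, used) in enumerate(leaves):
            mass, pos = leaf_stats(pts, f)
            if mass == 0:
                continue
            h = entropy(pos / mass)
            for i in range(n):
                if i in used:
                    continue
                split_h = 0.0
                for b in (0, 1):
                    cm, cp = leaf_stats([(x, w) for x, w in pts if x[i] == b], f)
                    if cm > 0:
                        split_h += cm * entropy(cp / cm)
                gain = mass * h - split_h  # reach probability times information gain
                if best is None or gain > best[0]:
                    best = (gain, li, i)
        # Stop early when no split has (numerically) positive gain.
        if best is None or best[0] <= 1e-12:
            break
        _, li, i = best
        pts, used = leaves.pop(li)
        for b in (0, 1):
            leaves.append(([(x, w) for x, w in pts if x[i] == b], used | {i}))
    # Each leaf predicts its majority label, so it errs on its minority mass.
    err = sum(min(pos, mass - pos) for mass, pos in (leaf_stats(pts, f) for pts, _ in leaves))
    return err, len(leaves) - 1


def optimal_error(f, n, p, t):
    """Minimum error over all trees with at most t internal nodes (memoized search)."""
    points = weighted_points(n, p)

    def stats(rho):
        # rho is a partial assignment: None means the variable is still free.
        pts = [(x, w) for x, w in points
               if all(r is None or xi == r for xi, r in zip(x, rho))]
        return leaf_stats(pts, f)

    @lru_cache(maxsize=None)
    def opt(rho, k):
        mass, pos = stats(rho)
        best = min(pos, mass - pos)  # option: stop here with a majority-label leaf
        if k == 0 or best == 0:
            return best
        for i in range(n):
            if rho[i] is not None:
                continue
            r0 = rho[:i] + (0,) + rho[i + 1:]
            r1 = rho[:i] + (1,) + rho[i + 1:]
            # Split on x_i and share the remaining k - 1 internal nodes between children.
            for k0 in range(k):
                best = min(best, opt(r0, k0) + opt(r1, k - 1 - k0))
        return best

    return opt((None,) * n, t)
```

For example, `top_down(lambda x: x[0] and x[1] and x[2], 4, [0.5] * 4, 3)` splits on $x_0$, $x_1$ and $x_2$ in turn and returns `(0.0, 3)`: the conjunction is learned exactly with three internal nodes. Because TopDown never reuses a variable on a path, its tree lies in the space searched by `optimal_error`, so its error can be compared directly with the optimum for the same budget $t$.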
