On the Generalization of Deep Reinforcement Learning Methods in the Problem of Local Navigation

Paper and Code

Sep 28, 2022

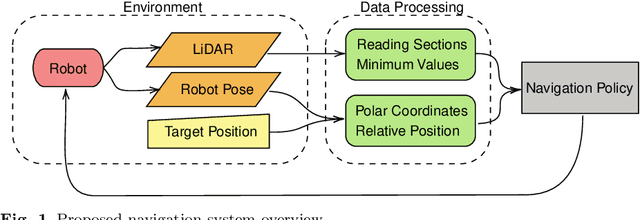

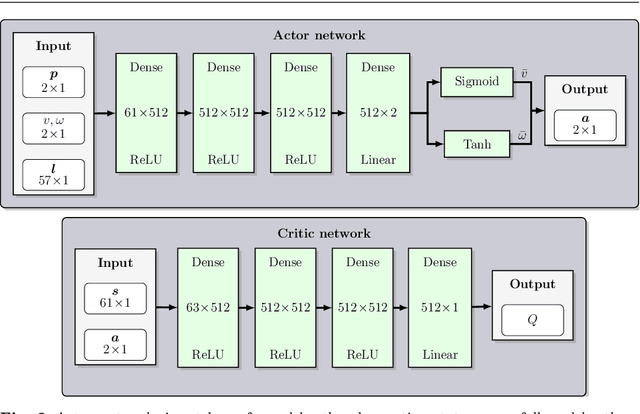

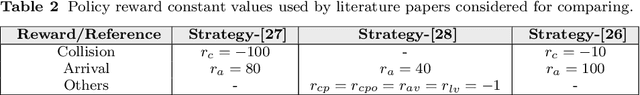

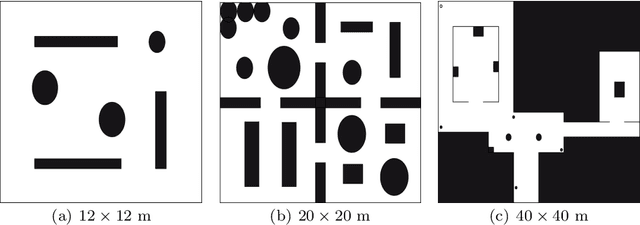

In this paper, we study the application of DRL algorithms in the context of local navigation problems, in which a robot moves towards a goal location in unknown and cluttered workspaces equipped only with limited-range exteroceptive sensors, such as LiDAR. Collision avoidance policies based on DRL present some advantages, but they are quite susceptible to local minima, once their capacity to learn suitable actions is limited to the sensor range. Since most robots perform tasks in unstructured environments, it is of great interest to seek generalized local navigation policies capable of avoiding local minima, especially in untrained scenarios. To do so, we propose a novel reward function that incorporates map information gained in the training stage, increasing the agent's capacity to deliberate about the best course of action. Also, we use the SAC algorithm for training our ANN, which shows to be more effective than others in the state-of-the-art literature. A set of sim-to-sim and sim-to-real experiments illustrate that our proposed reward combined with the SAC outperforms the compared methods in terms of local minima and collision avoidance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge