On Principal Components Regression, Random Projections, and Column Subsampling


Oct 08, 2017

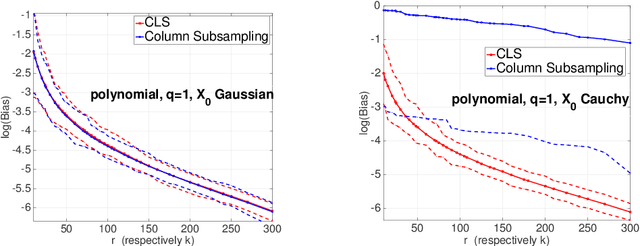

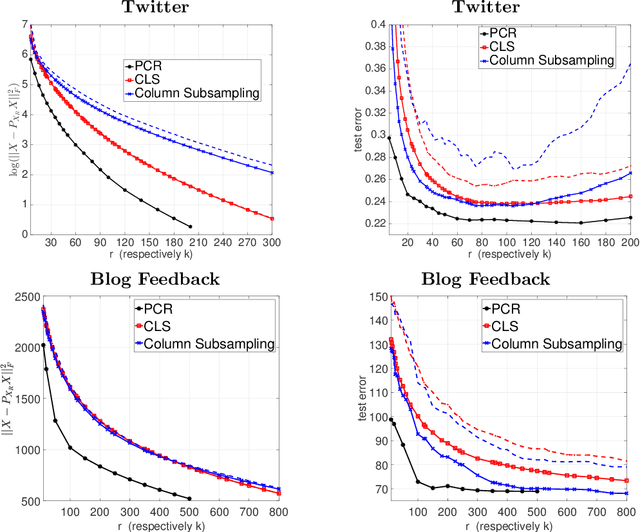

Principal Components Regression (PCR) is a traditional tool for dimension reduction in linear regression that has been both criticized and defended. One concern about PCR is that obtaining the leading principal components tends to be computationally demanding for large data sets. While random projections do not possess the optimality properties of the leading principal subspace, they are computationally appealing and hence have become increasingly popular in recent years. In this paper, we present an analysis showing that for random projections satisfying a Johnson-Lindenstrauss embedding property, the prediction error in subsequent regression is close to that of PCR, at the expense of requiring a slightly larger number of random projections than principal components. Column sub-sampling constitutes an even cheaper means of randomized dimension reduction, one that lies outside the class of Johnson-Lindenstrauss transforms. We provide numerical results based on synthetic and real data, as well as basic theory, revealing differences and commonalities in terms of statistical performance.
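The three reduction schemes named in the abstract can be put side by side in a few lines of NumPy. The sketch below is only an illustration under stated assumptions, not the authors' code: the function names (`fit_reduced_ls`, `pcr_map`, `gaussian_projection_map`, `column_subsampling_map`), the Gaussian choice of random projection, the synthetic data, and the dimensions are all hypothetical. Each scheme supplies a d-by-k matrix R, the response is regressed on the reduced design XR, and the fit is mapped back to the original coordinates.

```python
import numpy as np


def fit_reduced_ls(X, y, R):
    """Least squares of y on the reduced design X @ R.

    Returns the coefficient vector mapped back to the original d coordinates.
    """
    Z = X @ R
    gamma, *_ = np.linalg.lstsq(Z, y, rcond=None)
    return R @ gamma


def pcr_map(X, k):
    """Top-k right singular vectors of X (assumes columns are already centered)."""
    _, _, Vt = np.linalg.svd(X, full_matrices=False)
    return Vt[:k].T


def gaussian_projection_map(d, k, rng):
    """Dense Gaussian random projection, one common Johnson-Lindenstrauss-type map."""
    return rng.standard_normal((d, k)) / np.sqrt(k)


def column_subsampling_map(d, k, rng):
    """Selection matrix that keeps k columns chosen uniformly without replacement."""
    cols = rng.choice(d, size=k, replace=False)
    return np.eye(d)[:, cols]


def demo(seed=0, n=200, d=50, k_pcr=5, k_rand=15):
    """Print the in-sample prediction error of each scheme on synthetic data."""
    rng = np.random.default_rng(seed)
    # Hypothetical design with a decaying spectrum and a random rotation.
    scales = 1.0 / np.arange(1, d + 1)
    Q, _ = np.linalg.qr(rng.standard_normal((d, d)))
    X = (rng.standard_normal((n, d)) * scales) @ Q.T
    X -= X.mean(axis=0)
    beta_true = rng.standard_normal(d)
    y = X @ beta_true + 0.1 * rng.standard_normal(n)

    schemes = {
        "PCR": pcr_map(X, k_pcr),
        "Gaussian projection": gaussian_projection_map(d, k_rand, rng),
        "column subsampling": column_subsampling_map(d, k_rand, rng),
    }
    for name, R in schemes.items():
        beta_hat = fit_reduced_ls(X, y, R)
        err = np.mean((X @ (beta_hat - beta_true)) ** 2)
        print(f"{name:>20s} (k={R.shape[1]}): prediction error {err:.4f}")


if __name__ == "__main__":
    demo()
```

The demo gives the randomized schemes more dimensions (`k_rand`) than PCR (`k_pcr`), mirroring the abstract's remark that random projections need somewhat more directions than principal components. The specific values are arbitrary and the printed errors will vary with the seed and the data-generating choices.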
