On Evaluating the Quality of Rule-Based Classification Systems

Paper and Code

Apr 06, 2020

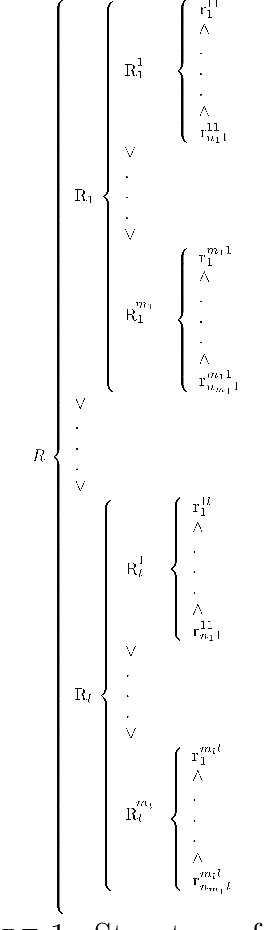

Two indicators are classically used to evaluate the quality of rule-based classification systems: predictive accuracy, i.e., the system's ability to successfully reproduce the learning data, and coverage, i.e., the proportion of possible cases to which the logical rules constituting the system apply. In this work, we claim that these two indicators may be insufficient and that additional measures of quality may need to be developed. We show theoretically that classification systems with "good" predictive accuracy and coverage can nonetheless be trivially improved, and we illustrate this proposition with examples.
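To make the two indicators concrete, the following is a minimal sketch of how they might be computed for a toy rule base. Everything in it is an assumption made for illustration: the two binary attributes, the rules, the learning data, and the function names `classify`, `coverage`, and `predictive_accuracy` do not come from the paper. It also assumes that a learning example covered by no rule counts as not reproduced, which is one possible convention among several.

```python
from itertools import product

# Hypothetical toy rule base over two binary attributes (a, b).
# Each rule is (condition, predicted_class); none of this comes from the paper.
RULES = [
    (lambda a, b: a == 1 and b == 1, "pos"),
    (lambda a, b: a == 0 and b == 0, "neg"),
]


def classify(case):
    """Return the class of the first rule that fires, or None if no rule applies."""
    for condition, label in RULES:
        if condition(*case):
            return label
    return None


def coverage(all_cases):
    """Proportion of possible cases for which at least one rule applies."""
    covered = sum(1 for case in all_cases if classify(case) is not None)
    return covered / len(all_cases)


def predictive_accuracy(learning_data):
    """Proportion of learning examples whose class the system reproduces.

    Assumption: an example that no rule covers counts as not reproduced.
    """
    correct = sum(1 for case, label in learning_data if classify(case) == label)
    return correct / len(learning_data)


# All possible cases for two binary attributes (illustrative input space).
ALL_CASES = list(product([0, 1], repeat=2))

# Hypothetical learning data: (case, observed class).
LEARNING_DATA = [
    ((1, 1), "pos"),
    ((0, 0), "neg"),
    ((1, 1), "pos"),
    ((0, 1), "neg"),
]

print("coverage:", coverage(ALL_CASES))
print("predictive accuracy:", predictive_accuracy(LEARNING_DATA))
```

In this toy setting the rules apply to two of the four possible cases and reproduce three of the four learning examples. The sketch only computes the two indicators; it does not attempt to reproduce the paper's argument about how such systems can be improved.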

* ICIC Express Letters, Volume 11, Number 10, October 2017, ISSN 1881-803X, © 2013 ICIC International
